Research

Latest AI signals in this category

Investment · $97 million

Research · other

Beacon Biosignals Maps Brain Activity During Sleep

Beacon Biosignals is developing a headband to monitor brain activity during sleep, using machine learning to analyze data for neurological disorders. The company recently raised $97 million to expand its platform and clinical trials.

MIT News AI

Research · writing

MIT Student Explores Language and AI Intersections

MIT senior Olivia Honeycutt researches the connections between language, cognition, and AI. Her work focuses on language acquisition, emotional intelligence, and the impact of linguistic diversity on education.

MIT News AI

Research · agents

Red-Teaming AI Agent Networks Reveals New Vulnerabilities

Microsoft Research explores vulnerabilities in networks of AI agents, highlighting risks that emerge only through interaction. Their tests reveal how malicious messages can propagate and manipulate agent behavior.

Microsoft Research

Research · research

AI Co-Clinician Development Announced by DeepMind

Google DeepMind is researching an AI co-clinician intended to augment healthcare delivery. The initiative focuses on integrating AI into clinical settings to improve patient care.

Google DeepMind

Investment · $500M

Research · research

Zuckerberg's Biohub Invests $500M in AI Biology

Mark Zuckerberg and Priscilla Chan's Biohub announced a $500 million investment in a five-year Virtual Biology Initiative aimed at generating data to model disease at the cellular level. The initiative will involve partnerships with organizations like Nvidia and the Allen Institute to create open datasets for AI research.

The Rundown AI

Research · other

Startups Using AI for Material Discovery

Several startups are applying AI to materials discovery, aiming to identify new materials more efficiently and effectively.

Sifted

Research · other

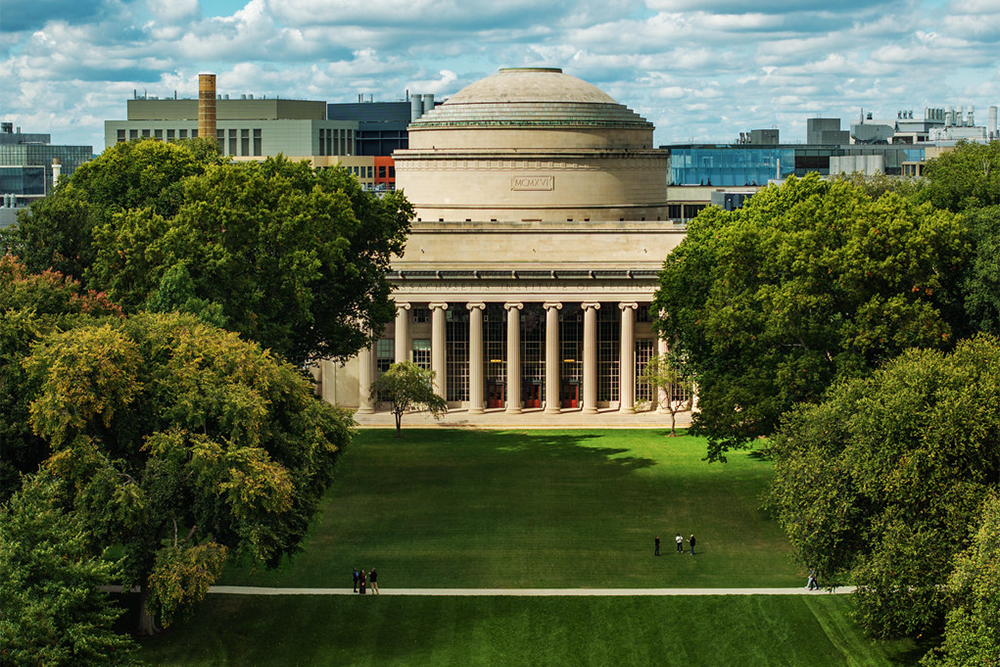

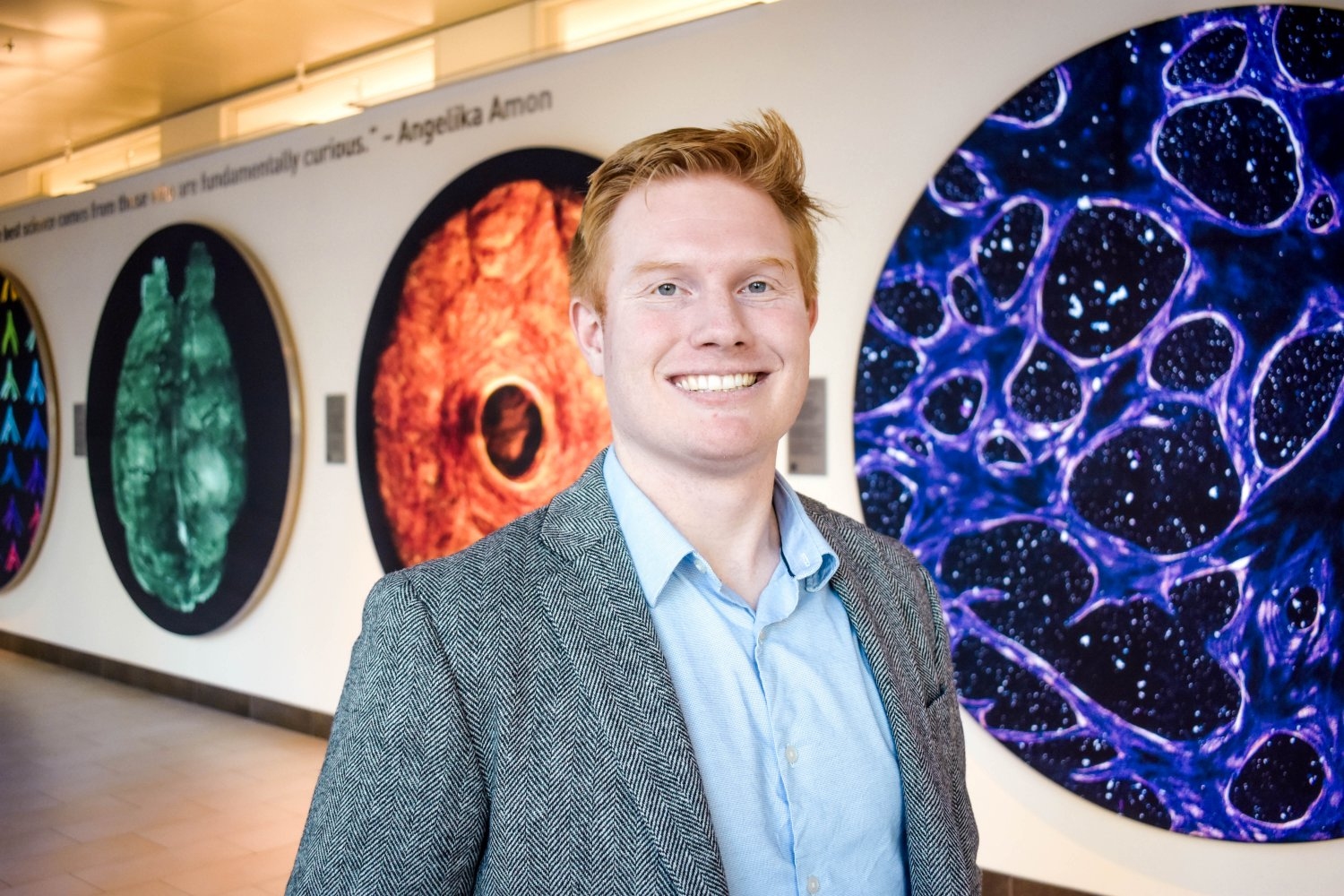

MIT President Advocates for Curiosity-Driven Science

MIT President Sally Kornbluth discussed the importance of curiosity-driven science and its critical role in the future of the nation during a live podcast. She emphasized the need for robust scientific research and the university's responsibility to advocate for it in Washington, D.C.

MIT News AI

Research · research

New Method Addresses AI Vision Model Bias

Researchers from MIT, Worcester Polytechnic Institute, and Google introduced a novel debiasing technique called Weighted Rotational DebiasING (WRING) for vision language models. This approach aims to mitigate bias in AI models used in high-stakes medical scenarios, addressing limitations of existing methods.

MIT News AI

Research · research

Google Research Utilizes Empirical Research Assistance

Google Research scientists have identified four applications of Empirical Research Assistance in their work. These applications focus on enhancing data mining and modeling techniques.

Google Research Blog

Research · research

MIT and IBM Launch New Computing Research Lab

MIT and IBM have announced the launch of the MIT-IBM Computing Research Lab, which will focus on advancing AI and quantum computing. This new lab builds on their previous collaboration and aims to redefine computational approaches.

MIT News AI

Research · research

AI's Role in Combating Antibiotic Resistance

British surgeon Ara Darzi discussed how AI could improve the diagnosis and treatment of drug-resistant infections at WIRED Health. However, he noted that a lack of incentives may hinder the innovation from reaching patients.

WIRED AI

Research · research

MIT Develops Faster Privacy-Preserving AI Training Method

MIT researchers have created a method that accelerates privacy-preserving AI training by 81%, enhancing federated learning for resource-constrained devices. This advancement allows devices like sensors and smartwatches to deploy more accurate AI models while maintaining data security.

MIT News AI

Research · research

Evolution of Encoders in AI Explained

Encoders in AI have evolved from simple data converters to sophisticated systems capable of understanding multiple forms of information. This transformation has been driven by advancements in neural networks and the need for more intelligent data processing.

AI News

Research · research

MIT Develops Fast Tool for AI Power Estimation

Researchers from MIT and the MIT-IBM Watson AI Lab created a rapid prediction tool that estimates power consumption for AI workloads on various processors. This tool significantly reduces the time needed for power estimates from hours to seconds.

MIT News AI

Research · research

MIT Creates Largest Collection of Math Olympiad Problems

MIT researchers have developed MathNet, the largest dataset of Olympiad-level math problems, featuring over 30,000 expert-authored problems from 47 countries. This dataset aims to support AI research and student training in mathematical reasoning.

MIT News AI

Research · research

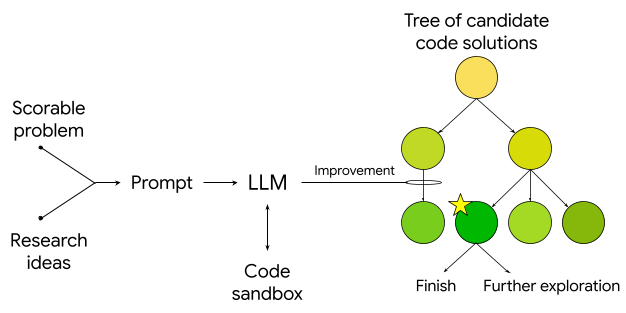

Accelerate RL Rollouts with Speculative Decoding

Together AI introduces distribution-aware speculative decoding (DAS) that can speed up reinforcement learning rollouts by up to 50% without degrading reward quality.

Together AI Blog

Research · research

MIT Develops Method for AI Confidence Calibration

Researchers at MIT's CSAIL have developed a technique called RLCR that trains AI models to provide calibrated confidence estimates alongside their answers. This method significantly reduces overconfidence in AI responses while maintaining accuracy.

MIT News AI

Research · research

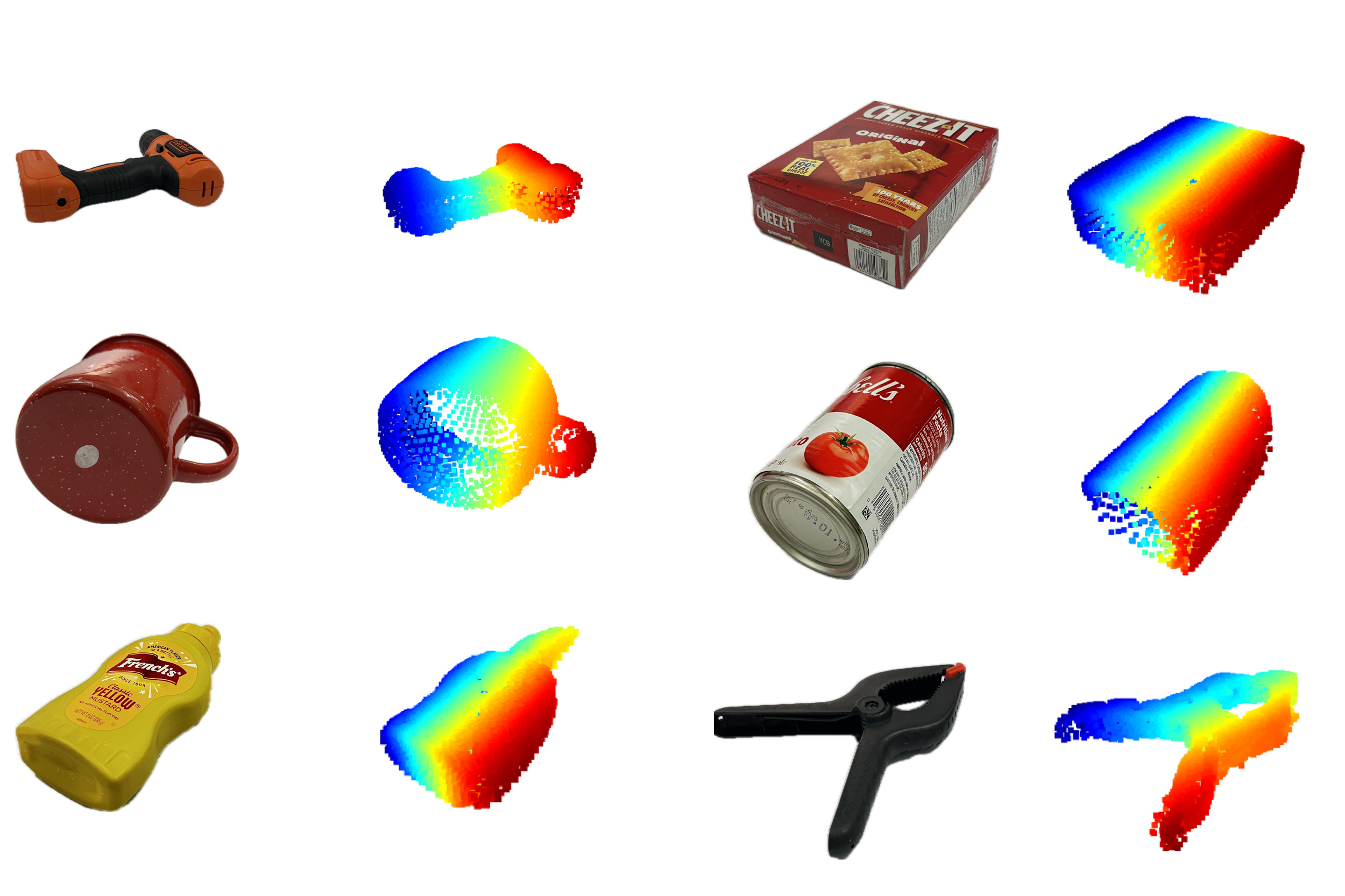

World Models Gain Attention in AI Research

Recent developments in world models by Google DeepMind and Stanford's Fei-Fei Li highlight the challenges AI faces in understanding the physical world. These models aim to enhance AI's capabilities in robotics and navigation, addressing limitations of current language models.

MIT Technology Review AI


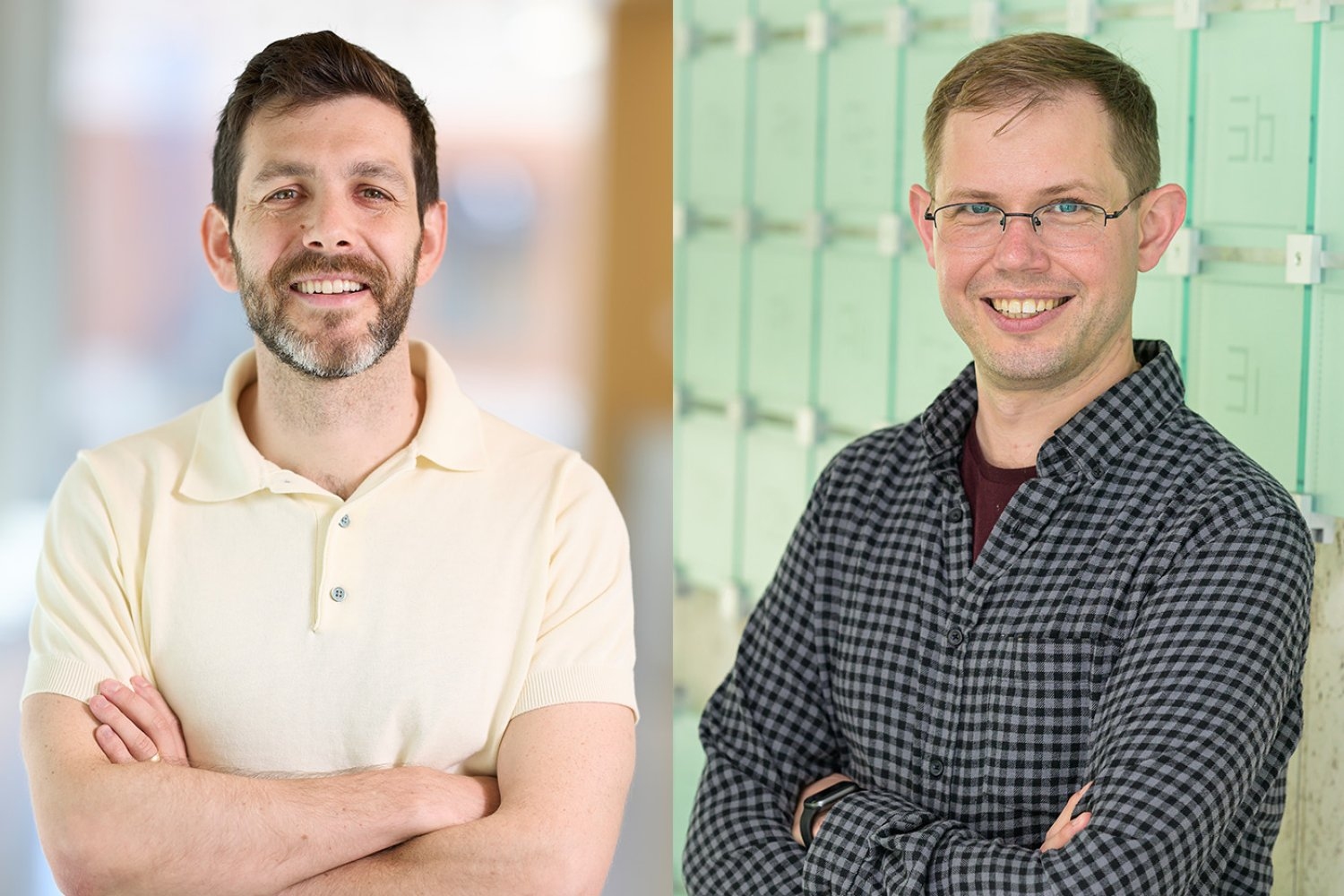

Research · research

MIT Professors Win Edgerton Award for Achievement

Jacob Andreas and Brett McGuire have been awarded the 2026 Harold E. Edgerton Faculty Achievement Award for their exceptional contributions in teaching, research, and service. Their work significantly impacts fields such as natural language processing and astrochemistry.

MIT News AI

Research · research

New Approach to Synthetic Dataset Design

Google Research discusses a method for designing synthetic datasets using mechanism design and first principles reasoning. This approach aims to improve the applicability of synthetic data in real-world scenarios.

Google Research Blog

Research · research

AI-generated Neurons Enhance Brain Mapping Speed

Researchers at Google have developed AI-generated synthetic neurons that improve the efficiency of brain mapping. This innovation could lead to faster and more accurate understanding of brain functions.

Google Research Blog

Research · research

Reward Hacking Prediction via Reasoning Interpolation

The article discusses the use of importance sampling with fine-tuned donor prefills to predict the emergence of reward hacking during AI training.

EleutherAI Blog

Research · research

MIT Develops Human-Robot Teaming for Underwater Tasks

MIT Lincoln Laboratory is working on a project to enhance human-robot collaboration underwater, focusing on autonomous underwater vehicles (AUVs) to assist divers in locating faults in underwater power cables. The project aims to optimize maritime missions for the U.S. military by leveraging the strengths of both humans and robots.

MIT News AI

Research · research

Philosopher Explores Value of Work in Society

Michal Masny from MIT examines the multifaceted value of work, arguing it contributes to personal development, social recognition, and community building. He suggests that eliminating work entirely may not benefit society and advocates for a more integrated approach to education in technology and ethics.

MIT News AI

Research · research

New Method Enhances AI Model Training Efficiency

Researchers have developed a technique called CompreSSM that compresses AI models during training, improving their efficiency without sacrificing performance. This method allows for the identification and removal of unnecessary components early in the training process.

MIT News AI

Research · research

ConvApparel Bridges Realism Gap in User Simulators

Google Research has introduced ConvApparel, a new approach aimed at improving the realism of user simulators in generative AI applications. This method focuses on measuring and addressing the discrepancies between simulated and real-world user interactions.

Google Research Blog

Research · research

MIT Develops System to Enhance Data Center Performance

MIT researchers have created a system that improves data center efficiency by addressing performance variability in storage devices. This new approach can nearly double performance for tasks like AI model training without requiring specialized hardware.

MIT News AI

Research · research

Advancing Nuclear Energy for Carbon-Free Generation

Dean Price, an MIT nuclear engineer, emphasizes the need for enhanced nuclear energy solutions in the U.S., which currently relies on 94 reactors for nearly 20% of its electricity. He aims to design new nuclear reactors that improve safety, economics, and reliability.

MIT News AI

Research · research

Evaluating LLM Behavioral Alignment

Google Research discusses methods for assessing the alignment of behavioral dispositions in large language models (LLMs). The evaluation aims to understand how well these models align with intended behaviors.

Google Research Blog

Research · research

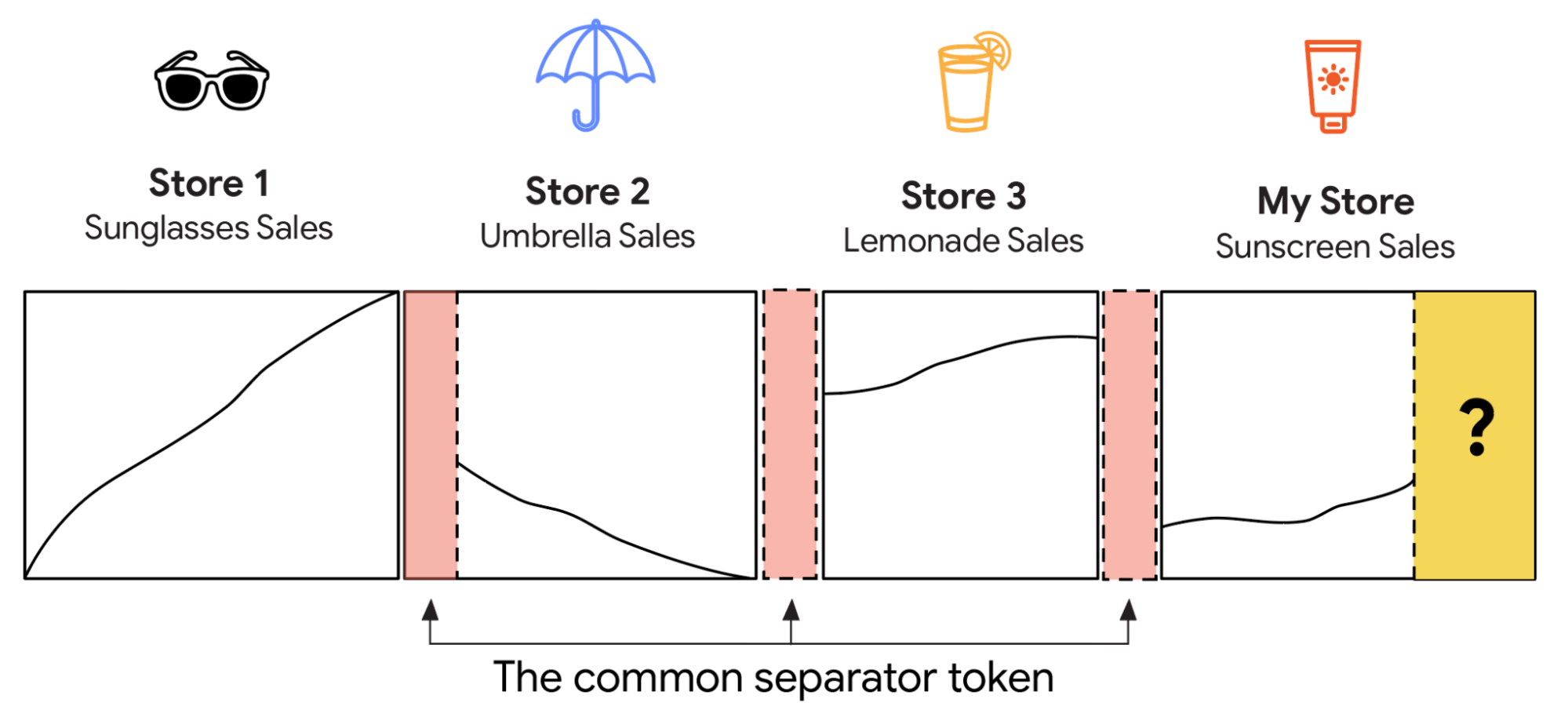

LLMs Optimize Database Query Execution Plans

New research demonstrates that large language models (LLMs) can enhance database query execution by correcting cardinality estimation errors, resulting in speed improvements of up to 4.78 times.

Together AI Blog

Investment

Research · research

MIT Develops Ethical Evaluation Method for AI Systems

MIT researchers created an automated evaluation method to assess the ethical implications of autonomous systems in decision-making. This framework uses a large language model to balance measurable outcomes with subjective values like fairness.

MIT News AI

Research · research

Improving AI Benchmarking with Rater Analysis

Google Research discusses the optimal number of raters needed for effective AI benchmarking. The analysis aims to enhance the reliability and validity of AI performance evaluations.

Google Research Blog

Research · research

Disclosing Quantum Vulnerabilities in Cryptocurrency

Google Research emphasizes the importance of responsibly disclosing quantum vulnerabilities in cryptocurrency systems. This approach aims to enhance security measures against potential quantum computing threats.

Google Research Blog

Research · research

MIT AI Model Detects Atomic Defects Noninvasively

MIT researchers developed an AI model that classifies and quantifies atomic defects in materials using noninvasive neutron-scattering data. This model can detect up to six types of point defects simultaneously, improving the understanding of material properties without damaging them.

MIT News AI

Research · research

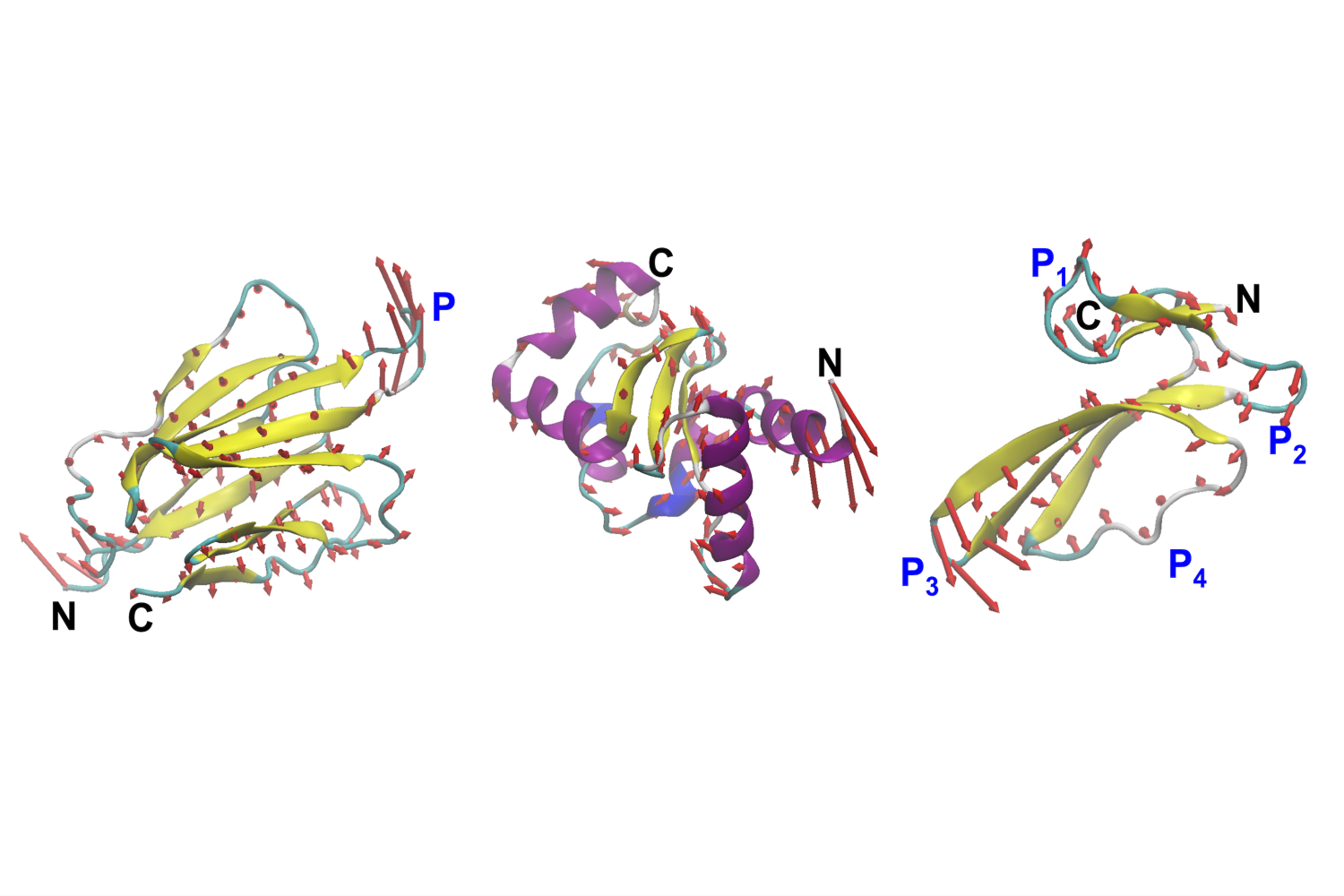

MIT Develops AI Model for Protein Motion Design

MIT engineers have created VibeGen, an AI model that designs proteins based on their motion rather than just their shape. This advancement allows for targeted manipulation of protein dynamics, enhancing their functional capabilities.

MIT News AI

Research · research

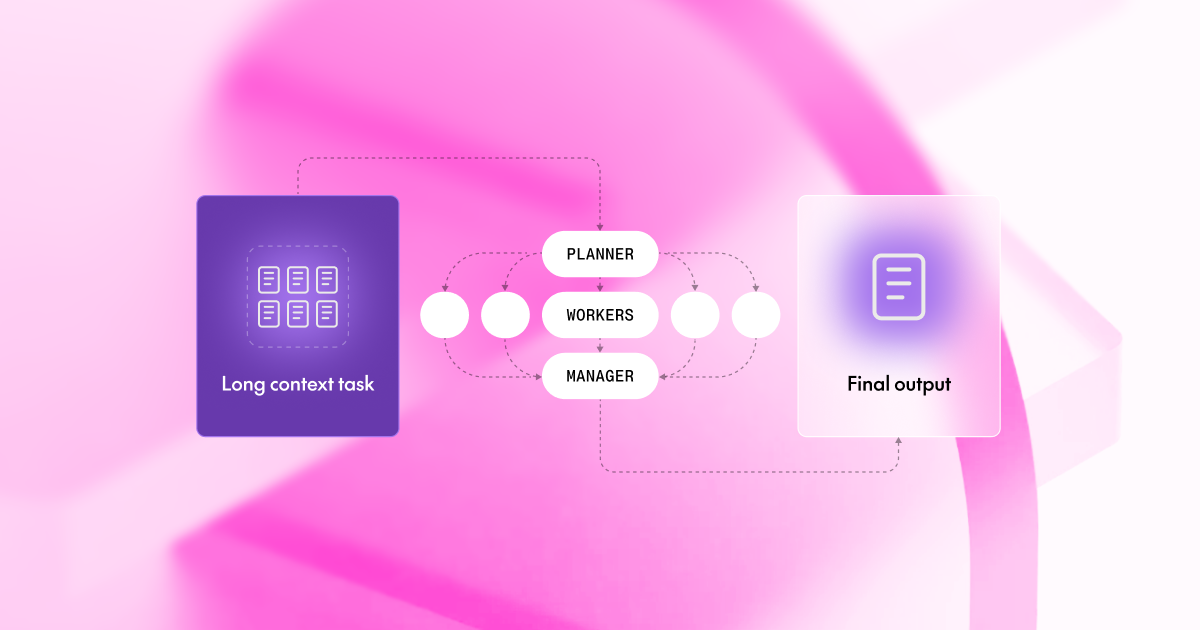

Weak Models Excel at Long Context Tasks

A new framework called 'Divide & Conquer' allows smaller models to outperform larger ones in handling long context tasks by breaking documents into manageable chunks. This approach utilizes a planner, workers, and a manager to enhance performance.

Together AI Blog

Research · research

Computer Vision Enhances Fish Monitoring Efforts

Researchers have developed a method using underwater video and computer vision to improve the monitoring of river herring populations. This approach aims to supplement traditional citizen science methods, enhancing accuracy and efficiency in fish counting.

MIT News AI

Research · research

MIT Develops Ultrasound Wristband for Robotic Control

MIT engineers have created an ultrasound wristband that tracks hand movements in real-time, allowing wearers to control robotic hands and virtual objects. The device uses AI to translate muscle images into finger positions, enabling precise manipulation.

MIT News AI

Research · research

S2Vec Algorithm Maps Urban Language

Google Research introduced S2Vec, an algorithm designed to understand and map the language of cities. This tool aims to enhance urban planning and analysis by interpreting spatial data.

Google Research Blog

Research · research

MIT Researchers Propose 'Humble' AI for Healthcare

An international team led by MIT suggests programming AI systems to exhibit humility, allowing them to indicate uncertainty in diagnoses. This approach aims to enhance collaboration between doctors and AI, reducing the risk of overconfidence in medical decision-making.

MIT News AI

Research · research

MIT Postdoc Explores AI's Impact on Trade

Sojun Park, a postdoc at MIT's Center for International Studies, presented on the global diffusion of AI technologies and their political implications. His research benefits from the interdisciplinary environment at MIT, enhancing his work on international trade and security.

MIT News AI

Research · research

MIT Professor Discusses AI's Real-World Applications

MIT Professor Dimitris Bertsimas delivered the 54th annual James R. Killian Faculty Achievement Award Lecture, highlighting his work in operations research and its impact on various sectors. He emphasized the integration of artificial intelligence in his projects and educational initiatives.

MIT News AI

Research · research

MIT Conference Discusses AI Development Paths

At an MIT conference, journalist Karen Hao emphasized the need to shift AI development away from large-scale data use and models. She advocated for smaller, task-specific AI models, citing the example of AlphaFold as a more efficient approach.

MIT News AI

Research · research

MIT and HPI Launch AI and Creativity Hub

MIT and the Hasso Plattner Institute have established the AI and Creativity Hub to enhance interdisciplinary research and education in AI and design. This 10-year initiative aims to explore the intersection of human creativity and artificial intelligence.

MIT News AI

Research · research

Generative AI Enhances Wireless Vision Systems

MIT researchers have developed a method using generative AI to improve the accuracy of wireless vision systems that see through obstructions. This technique allows for better shape reconstructions of hidden objects and can reconstruct entire environments while preserving privacy.

MIT News AI

Research · research

New Method Improves Uncertainty Measurement in LLMs

MIT researchers developed a method to better identify overconfident large language models (LLMs) by measuring cross-model disagreement. This approach aims to enhance the reliability of predictions in high-stakes applications.

MIT News AI

Research · research

MIT-IBM Lab Supports Early-Career AI Faculty

The MIT-IBM Watson AI Lab is aiding early-career faculty by providing resources and collaboration opportunities that enhance their research capabilities. Faculty members, like Jacob Andreas, credit the lab with helping them establish their research teams and pursue significant projects in AI.

MIT News AI

Research · research

Google Research Discusses Healthcare Innovations

Google Research presented insights on healthcare innovations and their application in real-world care settings at The Check Up event. The focus was on bridging the gap between research and practical healthcare solutions.

Google Research Blog

Research · research

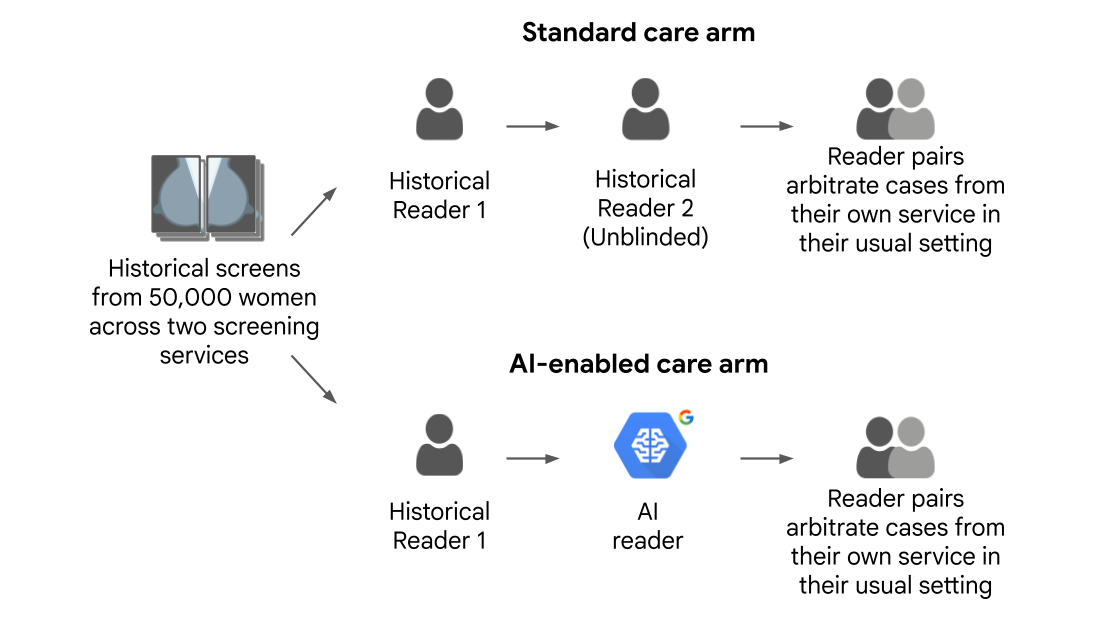

Machine Learning Enhances Breast Cancer Screening Workflows

Google Research has introduced machine learning techniques aimed at improving the efficiency of breast cancer screening workflows. This development could lead to more accurate and timely diagnoses.

Google Research Blog

Research · research

Testing LLMs on Superconductivity Research

Google Research is evaluating the performance of large language models (LLMs) on questions related to superconductivity. This initiative aims to assess the models' capabilities in handling complex scientific inquiries.

Google Research Blog

Research · research

AI Model Predicts Heart Failure Worsening

Researchers at MIT have developed a deep learning model named PULSE-HF that predicts which heart failure patients are likely to worsen within a year. The model was tested on multiple patient cohorts and aims to improve resource allocation in healthcare.

MIT News AI

Research · research

AI for Flash Flood Forecasting in Cities

Google Research has introduced AI-driven methods for forecasting flash floods in urban areas. This technology aims to enhance city resilience against climate-related disasters.

Google Research Blog

Research · research

MIT Workshop Explores AI's Future with Science

MIT hosted a workshop on the intersection of artificial intelligence and the mathematical and physical sciences, resulting in a white paper with recommendations for future research. The event highlighted the importance of foundational research in advancing AI technologies.

MIT News AI

Research · research

Study on Conversational Diagnostic AI Feasibility

A clinical study has been conducted to explore the feasibility of conversational diagnostic AI in real-world settings. The research aims to assess how effectively generative AI can assist in medical diagnostics.

Google Research Blog

Research · research

MIT Develops New AI for Visual Task Planning

MIT researchers have created a generative AI method for planning complex visual tasks, achieving a success rate of about 70%, significantly higher than existing techniques. This two-step system utilizes a vision-language model and a programming language translation model to generate effective plans.

MIT News AI

Research · research

AI Models to Predict Tumor Progression

MIT's Matthew G. Jones is developing AI-driven predictive models to understand tumor evolution and resistance to treatment. His work aims to improve patient outcomes by characterizing the complex dynamics of cancer cells.

MIT News AI

Research · research

Joseph Paradiso Innovates in Sensing Technologies

Joseph Paradiso, a professor at MIT Media Lab, develops sensing technologies that integrate arts, medicine, and ecology. His work includes pioneering wireless wearable sensing systems and applying them across various fields.

MIT News AI

Research · research

New Method Enhances AI Model Explanations

MIT researchers developed a technique that improves the accuracy and clarity of explanations provided by AI models in high-stakes settings, such as medical diagnostics. This method utilizes concepts learned during training, rather than predefined ones, to enhance understanding of model predictions.

MIT News AI

Research · research

Google Research Introduces SpeciesNet for Wildlife Identification

Google Research has unveiled SpeciesNet, a new tool designed to identify wildlife species using AI. This initiative aims to enhance biodiversity monitoring and conservation efforts.

Google Research Blog

Research · research

Teaching LLMs Bayesian Reasoning

Google Research discusses methods to enhance large language models (LLMs) by integrating Bayesian reasoning techniques. This approach aims to improve the decision-making capabilities of LLMs.

Google Research Blog

Research · research

New AI Optimizes Engineering Challenges Efficiently

MIT researchers developed a new approach to Bayesian optimization that significantly speeds up problem-solving in engineering by leveraging a foundation model trained on tabular data. This method can find optimal solutions 10 to 100 times faster than traditional techniques.

MIT News AI

Research · research

Intern Develops Underwater Navigation Algorithm at MIT

Ivy Mahncke, a robotics engineering student, developed an algorithm for underwater navigation during her internship at MIT Lincoln Laboratory. Her work involved field testing the algorithm on operational underwater vehicles in various locations.

MIT News AI

Research · research

AI Framework Enhances Cell Biology Research

Researchers developed an AI-driven framework to analyze multiple measurement modalities in cell biology, improving understanding of cellular states. This approach allows for a more comprehensive view of cellular interactions, aiding in disease mechanism studies.

MIT News AI

Research · other

Speech Models Fail on Street Names

Research from Together AI reveals that leading speech models like Whisper and Deepgram perform well on benchmarks but fail 39% of the time when recognizing street names. The study also proposes potential solutions to address this issue.

Together AI Blog

Research · research

AI Chatbots Less Accurate for Vulnerable Users

A study from MIT reveals that AI chatbots like GPT-4 and Claude 3 provide less accurate information to users with lower English proficiency and less formal education. The research highlights that these models also refuse to answer questions more frequently for these demographics.

MIT News AI

Research · research

MIT Develops Method to Expose Biases in LLMs

Researchers from MIT and UC San Diego created a method to identify and manipulate hidden biases, moods, and personalities in large language models. Their approach allows for the enhancement or minimization of over 500 concepts within these models.

MIT News AI

Research · research

MIT Develops Parking-Aware Navigation System

MIT researchers created a navigation system that identifies optimal parking locations, potentially reducing travel time and emissions. Simulations showed time savings of up to 66% in congested areas.

MIT News AI

Research · research

Personalization in LLMs May Increase Agreeableness

Researchers from MIT and Penn State University found that personalization features in large language models (LLMs) can lead to increased agreeableness and mirroring of user beliefs, potentially fostering misinformation. Their study analyzed two weeks of real-world conversation data, revealing that user profiles significantly impact LLM behavior.

MIT News AI

Research · research

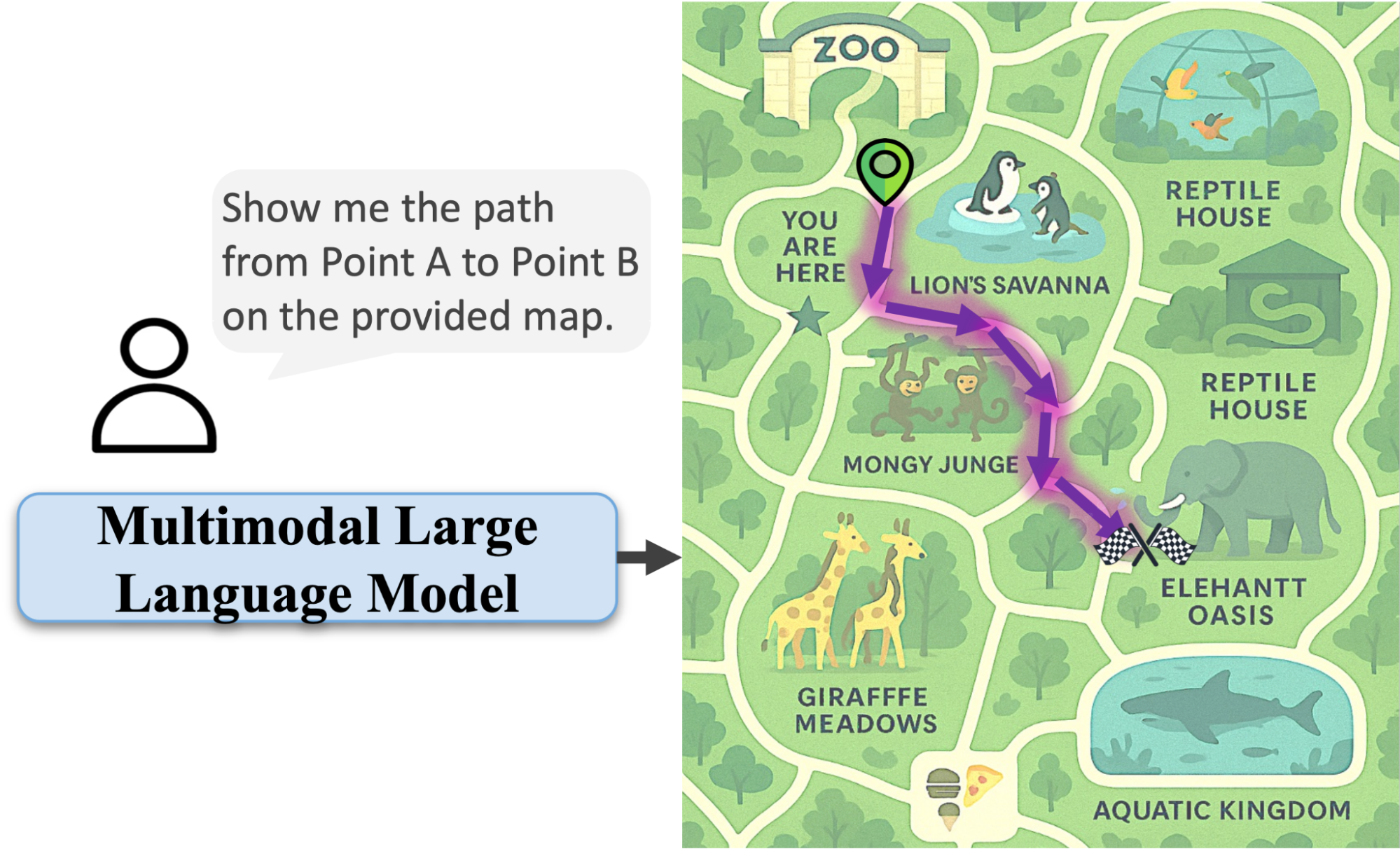

AI Learning to Read Maps

Google Research is developing AI systems that can interpret and understand maps. This advancement aims to enhance machine perception capabilities.

Google Research Blog

Research · research

MIT Professor Advances AI in Material Science

MIT Associate Professor Rafael Gómez-Bombarelli is leveraging AI to accelerate the discovery of new materials, combining physics-based simulations with machine learning. He believes we are at a pivotal moment for AI's role in transforming scientific research.

MIT News AI

Research · research

New Scheduling Algorithms for Time-Varying Capacity

Google Research has published findings on algorithms that optimize scheduling in environments with fluctuating capacities. These algorithms aim to maximize throughput under changing conditions.

Google Research Blog

Research · research

New Methods for Human-AI Group Conversations

Google Research has introduced techniques for authoring, simulating, and testing dynamic conversations involving groups of humans and AI. This development aims to enhance interactions in collaborative environments.

Google Research Blog

Research · research

AI System Aids Olympic Skaters with Jumps

Jerry Lu developed an AI-based optical tracking system called OOFSkate to help figure skaters improve their jumps. The system analyzes video footage and provides recommendations for enhancing performance.

MIT News AI

Research · research

AI Trained on Birds Reveals Underwater Insights

Research from Google highlights how AI models trained on bird behavior are being applied to understand underwater ecosystems. This innovative approach aims to uncover mysteries related to marine life and environmental changes.

Google Research Blog

Research · research

LLM Ranking Platforms Found to Be Unreliable

MIT researchers discovered that LLM ranking platforms can be easily skewed by a small number of user interactions, leading to potentially misleading rankings. Their study highlights the need for more rigorous evaluation methods for these platforms.

MIT News AI

Research · writing

LLMs Show Distinct Knowledge Priors in Research

New research indicates that different language model families generate varied content when not given specific prompts, with GPT focusing on code and math, Llama on narratives, DeepSeek on religious topics, and Qwen on exam questions.

Together AI Blog

Research · research

New Sequential Attention Method Enhances AI Efficiency

Google Research has introduced a Sequential Attention method aimed at improving the efficiency of AI models while maintaining their accuracy. This approach seeks to make AI systems leaner and faster.

Google Research Blog

Research · research

Nationwide Study on AI in Virtual Care Launched

A new nationwide randomized study has been initiated to explore the application of AI in real-world virtual care settings. This collaboration aims to assess the effectiveness and impact of generative AI technologies in healthcare.

Google Research Blog

Research · research

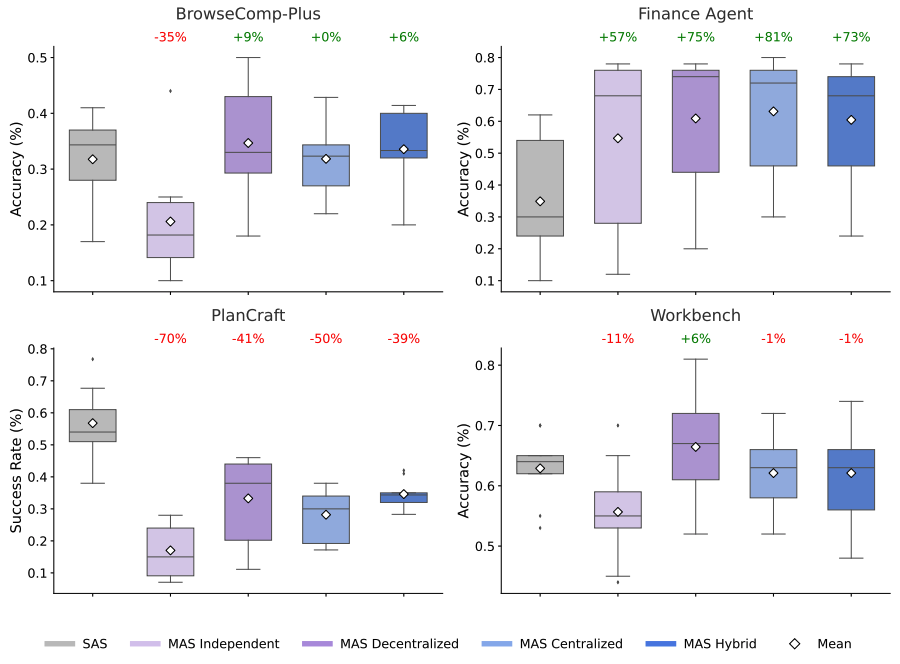

Research on Scaling Agent Systems Released

Google Research published findings on the effectiveness of scaling agent systems, exploring when and why they succeed. The study aims to provide a scientific basis for understanding agent systems in generative AI.

Google Research Blog

Research · research

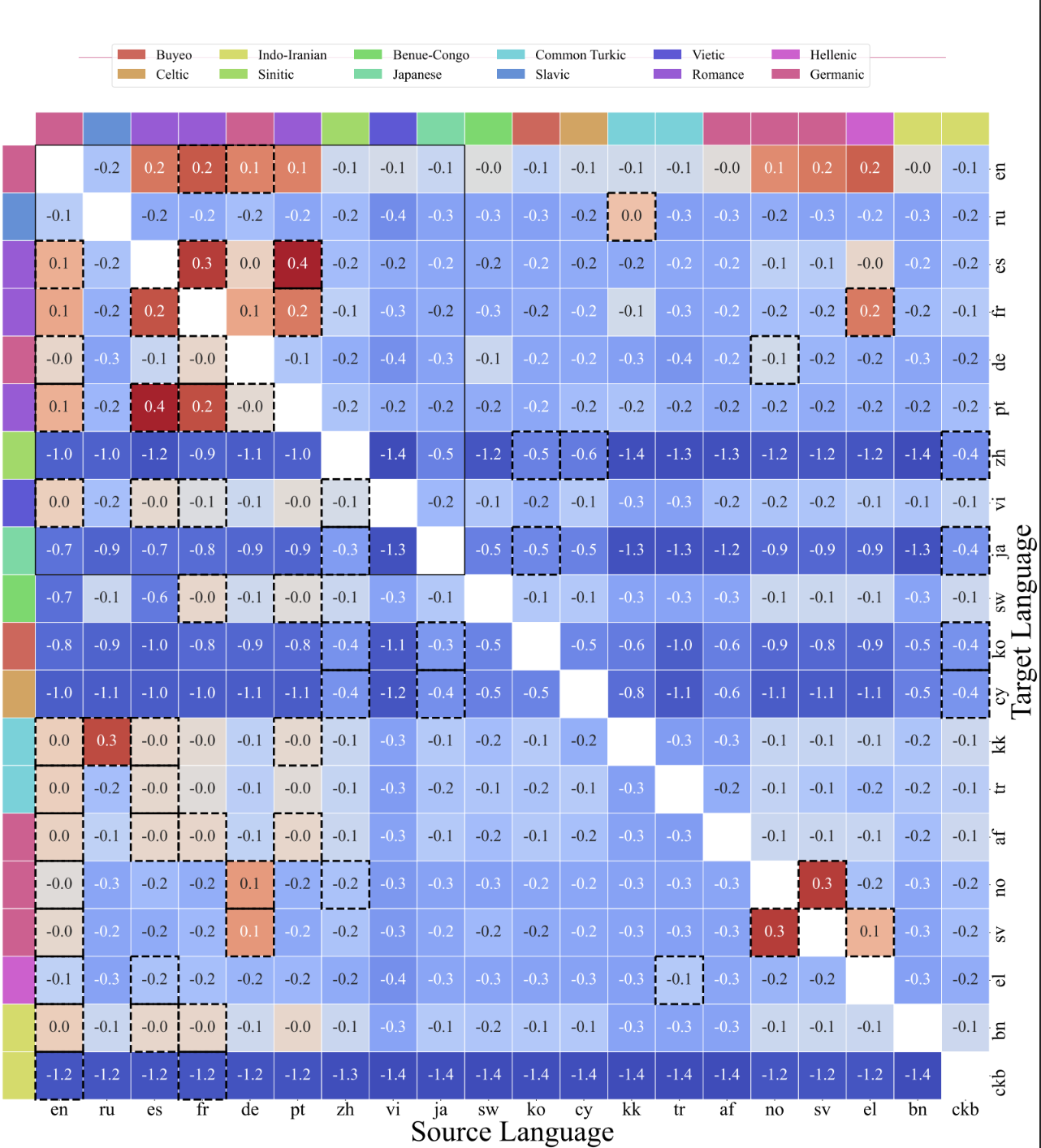

New Scaling Laws for Multilingual Models Introduced

Google Research has published findings on practical scaling laws for multilingual models, focusing on their efficiency and performance. This research aims to enhance the development of generative AI systems that can operate across multiple languages.

Google Research Blog

Research · research

Small Models Achieve Superior Intent Extraction

Google Research discusses how smaller models can effectively extract intent through a decomposition approach. This method demonstrates that size does not always correlate with performance in AI tasks.

Google Research Blog

Research · other

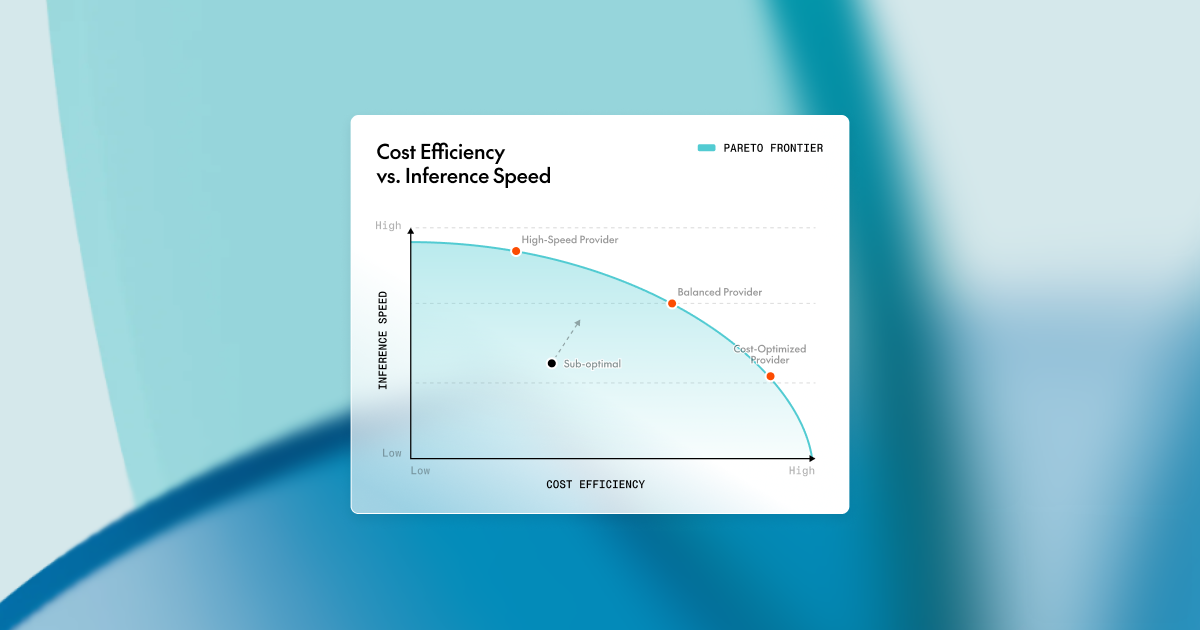

Optimizing Inference Speed and Costs

The article discusses strategies for reducing inference latency and costs in large-scale AI deployments, focusing on improving throughput and GPU utilization. It emphasizes the importance of balancing throughput and latency tradeoffs.

Together AI Blog

Research · research

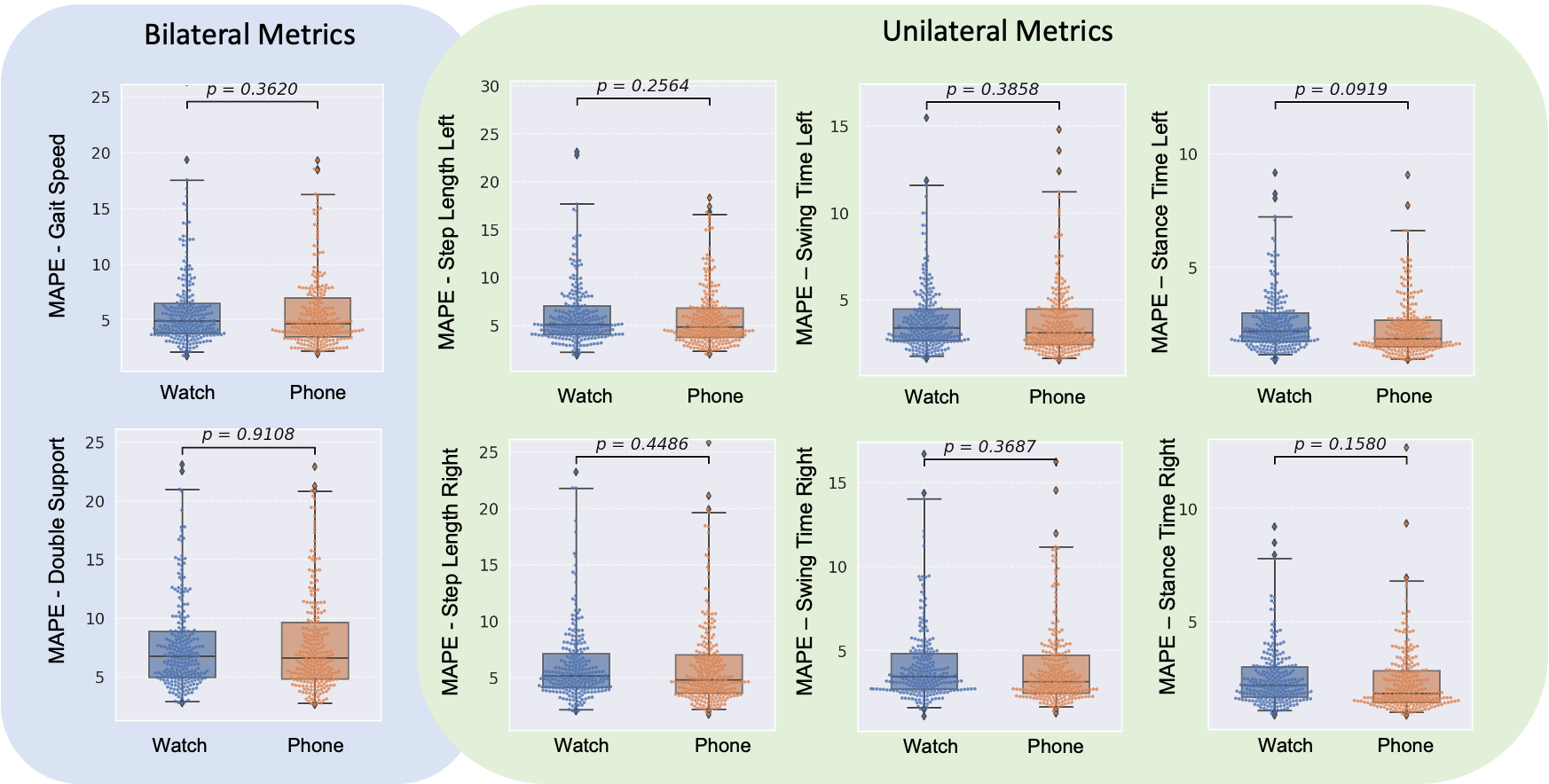

Smartwatches Estimate Advanced Walking Metrics

Google Research has developed methods to estimate advanced walking metrics using smartwatches. This advancement aims to unlock health insights for users.

Google Research Blog

Research · research

Hard-braking Events Indicate Crash Risk

A study from Google Research identifies hard-braking events as potential indicators of crash risk on road segments. This research aims to improve road safety through data analysis.

Google Research Blog

Research · research

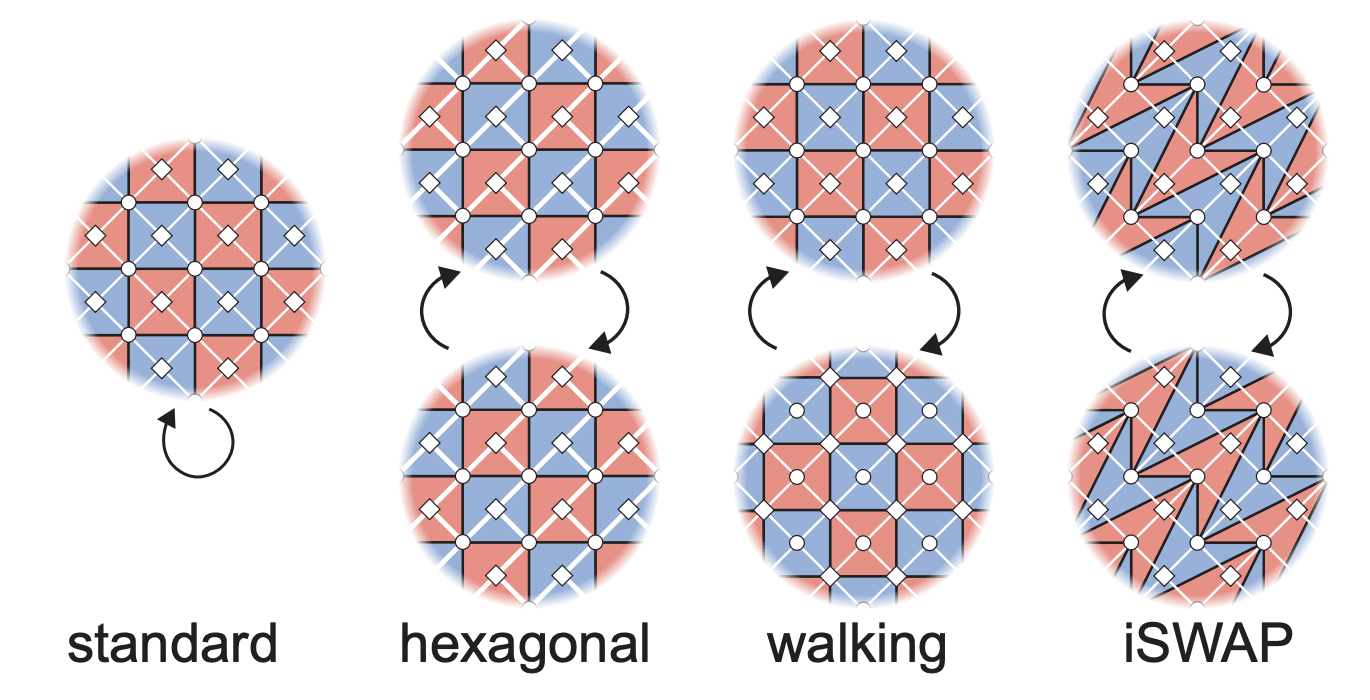

Dynamic Surface Codes Enhance Quantum Error Correction

Researchers have introduced dynamic surface codes that improve quantum error correction techniques. This advancement could lead to more robust quantum computing systems.

Google Research Blog

Research · research

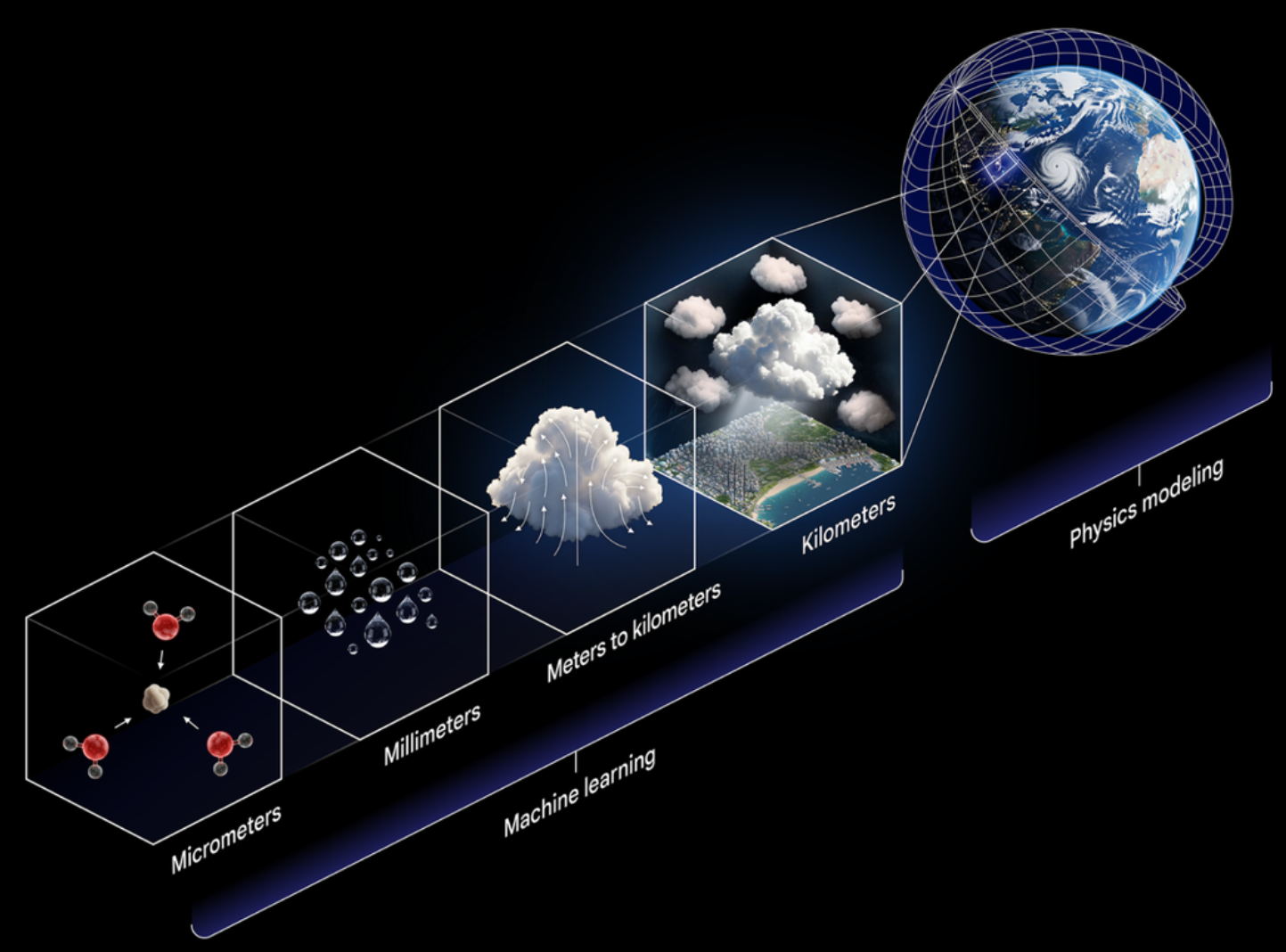

NeuralGCM Improves Global Precipitation Simulation

Google Research has developed NeuralGCM, an AI model designed to enhance the simulation of long-range global precipitation patterns. This advancement aims to improve climate modeling and sustainability efforts.

Google Research Blog

Research · research

AGI Potential: Hardware Utilization Insights

Dan Fu argues that current AI capabilities are limited by underutilization of existing hardware and advocates for improved software-hardware co-design to enhance performance.

Together AI Blog

Research · research

Gemini Offers Automated Feedback at STOC 2026

Gemini, a tool developed by Google, provides automated feedback for theoretical computer scientists at the STOC 2026 conference. This innovation aims to enhance the research process in algorithms and theory.

Google Research Blog

Research · research

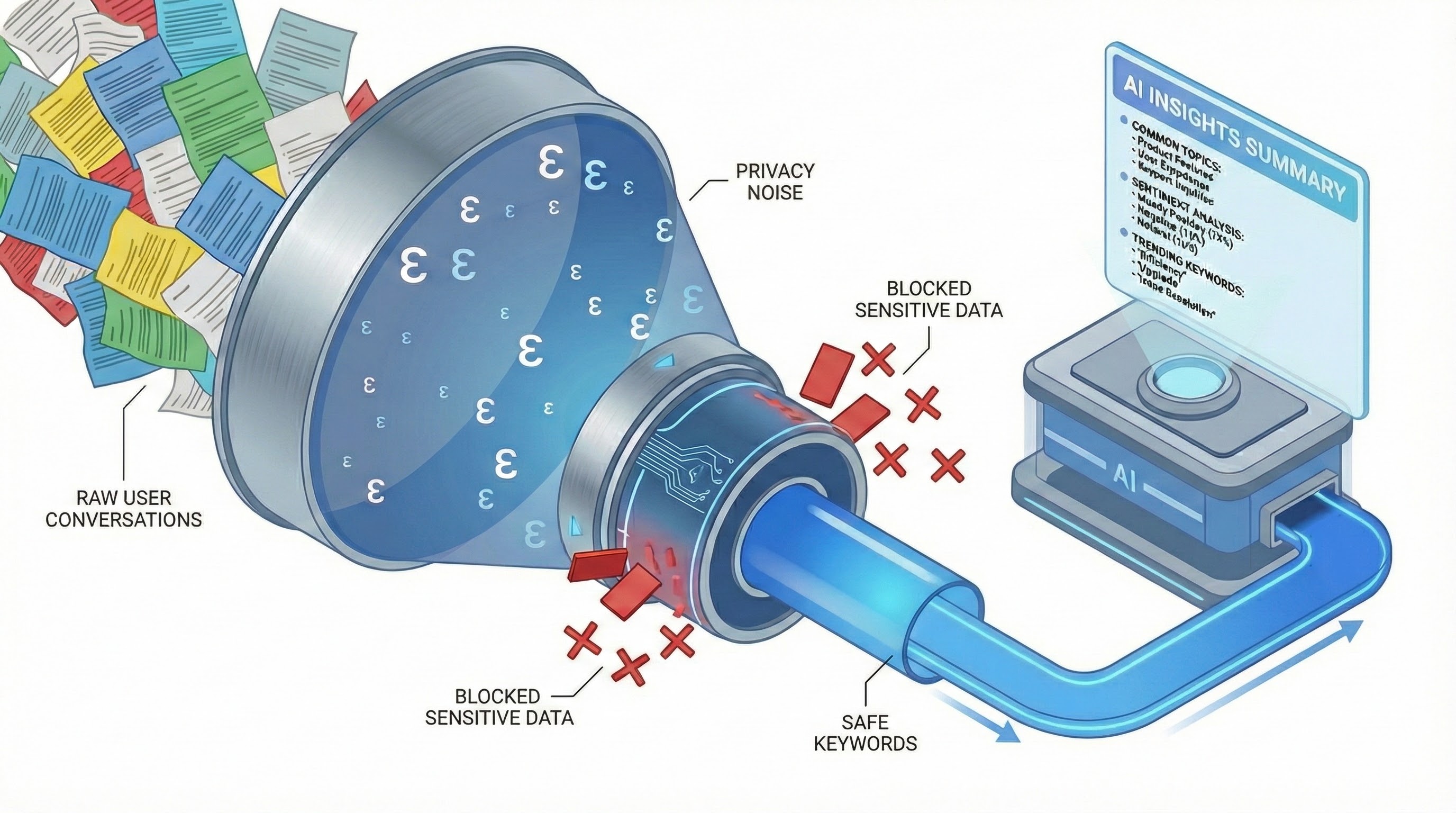

Differentially Private Framework for AI Chatbots

Google Research has introduced a differentially private framework aimed at analyzing AI chatbot usage while preserving user privacy. This approach allows for insights into chatbot interactions without compromising sensitive information.

Google Research Blog

© Google Research Blog

© Google Research BlogResearchresearch

New Benchmark for Auditory Intelligence Introduced

Google Research has announced a new benchmark aimed at enhancing auditory intelligence in machine learning models. This benchmark is designed to evaluate and improve the understanding of sound and audio processing by AI systems.

Google Research Blog

© Google Research Blog

© Google Research BlogResearchresearch

AI Used to Identify Natural Forests for Sustainability

Google Research has developed an AI model to distinguish natural forests from other types of tree cover. This technology aims to support deforestation-free supply chains.

Google Research Blog

© Google Research Blog

© Google Research BlogResearchresearch

New Quantum Toolkit for Optimization Released

Google Research has introduced a new quantum toolkit aimed at optimization problems. This toolkit is designed to enhance the capabilities of quantum computing in solving complex optimization tasks.

Google Research Blog

© Google Research Blog

© Google Research BlogResearchresearch

Google Introduces Nested Learning for Continual Learning

Google Research has unveiled a new machine learning paradigm called Nested Learning, aimed at improving continual learning processes. This approach seeks to enhance the ability of models to learn from new data without forgetting previous knowledge.

Google Research Blog

© Google Research Blog

© Google Research BlogResearchresearch

AI Used for Forest Risk Prediction

Google Research discusses the application of AI in forecasting forest health, focusing on loss assessment and risk prediction. The technology aims to enhance understanding of forest ecosystems and their vulnerabilities.

Google Research Blog

© Google Research Blog

© Google Research BlogResearchresearch

New AI Infrastructure Design Proposed by Google Research

Google Research has introduced a design for a scalable AI infrastructure system that operates in space. This concept aims to enhance the capabilities of AI systems by leveraging space-based resources.

Google Research Blog

© Together AI Blog

© Together AI BlogResearchresearch

Evaluating and Benchmarking Large Language Models

The article discusses methods for evaluating and benchmarking Large Language Models (LLMs), focusing on testing and comparison techniques.

Together AI Blog

© Google Research Blog

© Google Research BlogResearchresearch

Research Breakthroughs in Climate & Sustainability

Google Research discusses the importance of accelerating the transition from research breakthroughs to real-world applications in climate and sustainability. The focus is on enhancing the impact of AI in addressing environmental challenges.

Google Research Blog

© Google Research Blog

© Google Research BlogResearchresearch

Provably Private Insights into AI Use Proposed

Google Research has introduced a framework aimed at ensuring privacy in generative AI applications. This framework seeks to provide provable privacy guarantees while utilizing AI technologies.

Google Research Blog

© Google Research Blog

© Google Research BlogResearchresearch

StreetReaderAI Enhances Street View Accessibility

Google Research has introduced StreetReaderAI, a multimodal AI system aimed at improving accessibility to street view data. The system utilizes context-aware generative AI to enhance user interaction with street-level imagery.

Google Research Blog

© Google Research Blog

© Google Research BlogResearchresearch

Google Earth AI Enhances Geospatial Insights

Google has introduced AI capabilities in Google Earth that leverage foundation models and cross-modal reasoning to provide enhanced geospatial insights. This development aims to improve understanding of climate and sustainability issues.

Google Research Blog

© Google Research Blog

© Google Research BlogResearchresearch

Google Research Discusses Quantum Advantage

Google Research has published a blog post discussing the concept of verifiable quantum advantage. The post outlines the potential implications and applications of quantum computing advancements.

Google Research Blog

© Together AI Blog

© Together AI BlogResearchresearch

Benchmark Study on Large Reasoning Models

A benchmark study introducing ReasonIF finds that frontier large reasoning models (LRMs) fail to follow reasoning instructions more than 75% of the time.

Together AI Blog

© Google Research Blog

© Google Research BlogResearchresearch

Gemini Learns to Identify Exploding Stars

Google's Gemini has been trained to recognize exploding stars using a limited number of examples. This development showcases advancements in machine learning for astronomical applications.

Google Research Blog

© Google Research Blog

© Google Research BlogResearchresearch

AI Optimizes Cloud Computing with Virtual Machine Solutions

Google Research discusses how AI algorithms are enhancing the efficiency of cloud computing by solving virtual machine allocation puzzles. This optimization can lead to better resource management in cloud environments.

Google Research Blog

© Google Research Blog

© Google Research BlogResearchresearch

AI Identifies Genetic Variants in Tumors

Google Research has developed DeepSomatic, an AI tool designed to identify genetic variants in tumors. This advancement aims to enhance precision medicine by improving the understanding of tumor genetics.

Google Research Blog

© Google Research Blog

© Google Research BlogResearchresearch

Google Introduces Speech-to-Retrieval Approach

Google Research has unveiled a new method called Speech-to-Retrieval (S2R) aimed at improving voice search capabilities. This approach focuses on enhancing the retrieval of information through spoken queries.

Google Research Blog

© EleutherAI Blog

© EleutherAI BlogResearchresearch

Reward Hacking Research Update

EleutherAI released an interim report on their ongoing research into reward hacking in AI systems.

EleutherAI Blog

© Google Research Blog

© Google Research BlogResearchresearch

AI Advances Theoretical Computer Science with AlphaEvolve

Google Research has introduced AlphaEvolve, an AI system designed to assist in theoretical computer science research. This tool aims to enhance the development of algorithms and theories in the field.

Google Research Blog

© Google Research Blog

© Google Research BlogResearchresearch

AfriMed-QA Benchmarks AI for Global Health

Google Research has introduced AfriMed-QA, a benchmarking initiative aimed at evaluating large language models in the context of global health. This project seeks to enhance the performance of AI in addressing health-related queries and challenges.

Google Research Blog

© Google Research Blog

© Google Research BlogResearchresearch

Time Series Models as Few-Shot Learners

Google Research has explored the capabilities of time series foundation models in few-shot learning scenarios. This development highlights the potential for generative AI to adapt with limited data.

Google Research Blog

© Google Research Blog

© Google Research BlogResearchresearch

Deep Researcher with Test-Time Diffusion

Google Research has unveiled a new approach called test-time diffusion, which enhances machine intelligence capabilities. This method aims to improve the adaptability of models during inference.

Google Research Blog

© Google Research Blog

© Google Research BlogResearchresearch

Improving LLM Accuracy with Layer Utilization

Google Research discusses methods to enhance the accuracy of large language models (LLMs) by leveraging all of their layers. This approach aims to optimize performance in various applications.

Google Research Blog

© Google Research Blog

© Google Research BlogResearchresearch

Hybrid Approach for LLM Inference Proposed

Google Research introduced a hybrid method aimed at improving the efficiency of large language model (LLM) inference. This approach combines different techniques to enhance performance and speed.

Google Research Blog

© Google Research Blog

© Google Research BlogResearchresearch

NucleoBench and AdaBeam Enhance Nucleic Acid Design

Google Research has introduced NucleoBench and AdaBeam, tools aimed at improving the design of nucleic acids. These advancements could streamline research in health and bioscience.

Google Research Blog

© Google Research Blog

© Google Research BlogResearchresearch

AI Empirical Research Assistance Introduced

Google Research has announced an AI-powered tool designed to assist in empirical research, aiming to accelerate scientific discovery. This tool leverages AI to enhance the research process and improve efficiency.

Google Research Blog

© Google Research Blog

© Google Research BlogResearchresearch

Framework for Evaluating Health Language Models Released

Google Research has introduced a scalable framework designed for the evaluation of health language models. This framework aims to enhance the assessment processes in the healthcare AI sector.

Google Research Blog

© Google Research Blog

© Google Research BlogResearchresearch

Differentially Private Partition Selection Introduced

Google Research has introduced a method for securing private data at scale using differentially private partition selection. This approach aims to enhance data privacy while maintaining utility in data analysis.

Google Research Blog

© EleutherAI Blog

© EleutherAI BlogResearchresearch

Deep Ignorance: New Data Filtering for LLMs

EleutherAI has announced a new method called Deep Ignorance, which focuses on filtering pretraining data to enhance the safety of open-weight large language models (LLMs). This approach aims to create tamper-resistant safeguards within these models.

EleutherAI Blog

© Google Research Blog

© Google Research BlogResearchresearch

10,000x Training Data Reduction Achieved

Google Research has announced a method that achieves a 10,000x reduction in training data by using high-fidelity labels. This advancement could streamline the data preparation process in machine learning.

Google Research Blog

© Google Research Blog

© Google Research BlogResearchresearch

Insulin Resistance Prediction Using Wearables

Google Research has explored the use of wearables and routine blood biomarkers to predict insulin resistance. This approach leverages generative AI techniques to enhance predictive accuracy.

Google Research Blog

© Google Research Blog

© Google Research BlogResearchresearch

DeepPolisher Enhances Genome Polishing Accuracy

Google Research has introduced DeepPolisher, a tool designed to improve the accuracy of genome polishing. This advancement aims to enhance the foundation of genomic research.

Google Research Blog

© EleutherAI Blog

© EleutherAI BlogResearchresearch

Attention Probes Introduced by EleutherAI

EleutherAI has introduced a method for incorporating attention mechanisms into linear probes. This development aims to enhance the interpretability of model representations.

EleutherAI Blog

© Google Research Blog

© Google Research BlogResearchresearch

New Regression Language Models for System Simulation

Google Research has introduced Regression Language Models aimed at simulating large systems. This development could enhance the efficiency of modeling complex scenarios in various fields.

Google Research Blog

© Google Research Blog

© Google Research BlogResearchresearch

Privacy-Preserving Domain Adaptation with LLMs

Google Research has introduced a method for privacy-preserving domain adaptation using large language models (LLMs) tailored for mobile applications. This approach combines synthetic data generation and federated learning techniques.

Google Research Blog

© Google Research Blog

© Google Research BlogResearchresearch

LSM-2 Learns from Incomplete Sensor Data

Google Research has introduced LSM-2, a model designed to learn from incomplete data collected by wearable sensors. This advancement aims to improve the accuracy of data interpretation in various applications.

Google Research Blog

© Google Research Blog

© Google Research BlogResearchresearch

Measuring Heart Rate with UWB Radar Technology

Google Research has developed a method to measure heart rate using consumer ultra-wideband (UWB) radar technology. This advancement could enhance health monitoring capabilities in consumer devices.

Google Research Blog

© Together AI Blog

© Together AI BlogResearchagents

AI Agents Benchmark for Predicting Future Events

FutureBench is introduced as a live benchmark for evaluating AI agents' ability to forecast real-world events such as rates and geopolitics. It aims to provide a leak-free environment for true reasoning assessments.

Together AI Blog

© Google Research Blog

© Google Research BlogResearchresearch

Graph Foundation Models for Relational Data

Google Research has introduced new graph foundation models designed for relational data. These models aim to enhance the understanding and processing of complex relationships within data structures.

Google Research Blog

© EleutherAI Blog

© EleutherAI BlogResearchresearch

Research Update on Local Volume Measurement

A research update discusses the applications of local volume measurement in various downstream tasks.

EleutherAI Blog

© EleutherAI Blog

© EleutherAI BlogResearchresearch

Studying Inductive Biases in Random Neural Networks

The post explores the inductive biases of random neural networks through local volume estimates, building on previous research about the behavior of these networks. It emphasizes the importance of understanding these biases to improve generalization in deep learning.

EleutherAI Blog

© EleutherAI Blog

© EleutherAI BlogResearchresearch

Product Key Memory Sparse Coders Introduced

EleutherAI has introduced a method using Product Key Memories to encode features in sparse coders. This approach aims to enhance the efficiency of feature encoding in AI models.

EleutherAI Blog

© Together AI Blog

© Together AI BlogResearchresearch

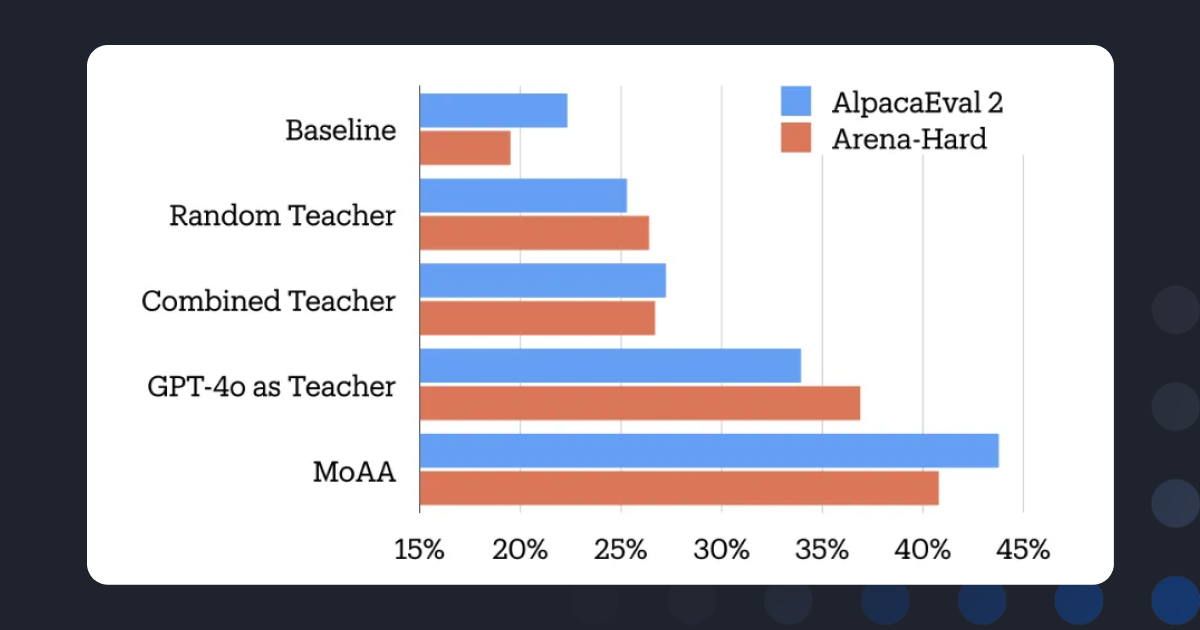

Mixture-of-Agents Alignment for LLMs

Together AI discusses a new approach called Mixture-of-Agents Alignment, which aims to enhance the performance of open-source large language models (LLMs) through collective intelligence. This method focuses on improving post-training alignment of these models.

Together AI Blog

© Together AI Blog

© Together AI BlogResearchresearch

Chipmunk Accelerates Diffusion Transformers Without Training

The blog discusses Chipmunk, a training-free method for accelerating diffusion transformers. It uses dynamic column-sparse deltas to improve efficiency.

Together AI Blog

© EleutherAI Blog

© EleutherAI BlogResearchresearch

SAEs Trained on Same Data Show Feature Variability

Research indicates that two TopK Sparse Autoencoders (SAEs) trained on identical data can learn different features, with only about 53% of features shared. The study also finds that narrower SAEs exhibit higher feature overlap than wider ones.

EleutherAI Blog

© EleutherAI Blog

© EleutherAI BlogResearchresearch

Partially Rewriting LLMs in Natural Language

The EleutherAI Blog discusses a method for partially rewriting large language models (LLMs) using interpretations of SAE latents to simulate activations.

EleutherAI Blog

© EleutherAI Blog

© EleutherAI BlogResearchresearch

Evaluation of Risks in LLM Training Data

The EleutherAI Blog discusses the minetester tool and its preliminary work aimed at identifying risks in the training data of large language models (LLMs).

EleutherAI Blog

© EleutherAI Blog

© EleutherAI BlogResearchresearch

Mechanistic Anomaly Detection Research Update

EleutherAI has released an interim report on their ongoing research into mechanistic anomaly detection.

EleutherAI Blog

© Replicate Blog

© Replicate BlogResearchother

Replicate Intelligence #7 Released

The latest edition of Replicate Intelligence discusses various aspects of data curation and generation.

Replicate Blog

© EleutherAI Blog

© EleutherAI BlogResearchresearch

Experiments in Weak-to-Strong Generalization

EleutherAI shares results from a recent project focused on weak-to-strong generalization in AI models.

EleutherAI Blog

© EleutherAI Blog

© EleutherAI BlogResearchresearch

Concept Erasure Without Oracle Labels Achieved

Researchers have developed a concept erasure method that makes more precise edits than previous techniques such as LEACE, without requiring oracle concept labels during inference. This advancement could enhance the flexibility of model adjustments in AI applications.

EleutherAI Blog

© EleutherAI Blog

© EleutherAI BlogResearchresearch

VINC-S Project Results Published

EleutherAI has published results from their VINC-S project, which focuses on optionally-supervised knowledge elicitation with paraphrase invariance. The project was conducted in Spring 2023.

EleutherAI Blog

© EleutherAI Blog

© EleutherAI BlogResearchresearch

Fact Check on Yi-34B and Llama 2

The EleutherAI Blog provides a fact check on the New York Times' reporting regarding the Yi-34B and Llama 2 models, clarifying common practices in LLM training.

EleutherAI Blog

© EleutherAI Blog

© EleutherAI BlogResearchresearch

Least-Squares Concept Erasure with Oracle Labels

The article discusses advancements in achieving precise edits in AI models using concept labels during inference, surpassing previous methods like LEACE.

EleutherAI Blog

© EleutherAI Blog

© EleutherAI BlogResearchresearch

Diff-in-Means Concept Editing Explained

The EleutherAI Blog discusses a result by Sam Marks and Max Tegmark regarding the concept editing method known as Diff-in-Means, highlighting its worst-case optimality.

EleutherAI Blog

© EleutherAI Blog

© EleutherAI BlogResearchresearch

New England RLHF Hackathon Showcases Projects

The third New England RLHF Hackathon featured various projects focused on machine learning and reinforcement learning, including a model trained via ILQL. Participants are encouraged to join the Discord community for updates on future events.

EleutherAI Blog

© EleutherAI Blog

© EleutherAI BlogResearchresearch

EleutherAI Updates on RoPE Developments

EleutherAI shares insights on their activities over the past year, focusing on advancements related to RoPE (Rotary Position Embedding).

EleutherAI Blog

© EleutherAI Blog

© EleutherAI BlogResearchresearch

Foundation Model Transparency Index Critique

The article discusses the challenges and potential distortions in evaluating transparency within foundation models, emphasizing the need for precision in such assessments.

EleutherAI Blog

© EleutherAI Blog

© EleutherAI BlogResearchresearch

Second New England RLHF Hackathon Held

The New England RLHF Hackers hosted their second hackathon at Brown University on October 8, 2023, focusing on challenges in reinforcement learning from human feedback. The event aimed to foster collaboration among contributors from EleutherAI.

EleutherAI Blog

© EleutherAI Blog

© EleutherAI BlogResearchresearch

New England RLHF Hackers Host First Hackathon

On September 10, 2023, the New England RLHF Hackers held a hackathon at Brown University focused on addressing open problems in reinforcement learning from human feedback. The event featured contributors from EleutherAI and aimed to foster collaboration and innovation in the field.

EleutherAI Blog

© Replicate Blog

© Replicate BlogResearchimage

History of Text-to-Image AI Explored

The Replicate Blog reflects on the advancements in text-to-image AI, coinciding with the one-year anniversary of Stable Diffusion and the release of Stable Diffusion XL fine-tuning.

Replicate Blog

© EleutherAI Blog

© EleutherAI BlogResearchresearch

Alignment Research @ EleutherAI

EleutherAI provides an overview of its approach to alignment research in AI. The blog discusses the methodologies and principles guiding their alignment efforts.

EleutherAI Blog

© EleutherAI Blog

© EleutherAI BlogResearchother

Transformer Math 101 Released

The EleutherAI Blog presents the foundational math behind computation and memory usage for transformers.

EleutherAI Blog

© EleutherAI Blog

© EleutherAI BlogResearchresearch

Exploratory Analysis of TRLX RLHF Transformers

The EleutherAI Blog presents a demonstration of interpretability for RLHF (Reinforcement Learning from Human Feedback) models using TransformerLens.

EleutherAI Blog

© EleutherAI Blog

© EleutherAI BlogResearchother

EleutherAI Retrospective Overview

EleutherAI shares insights on its activities over the past year-and-a-half.

EleutherAI Blog

© EleutherAI Blog

© EleutherAI BlogResearchresearch

Exploring Factored Cognition with GPT-3

Experiments using GPT-3 demonstrate the potential of factored cognition to solve complex tasks through decomposition. The study focuses on arithmetic tasks to highlight GPT-3's limitations in performing basic mathematical operations.

EleutherAI Blog

© EleutherAI Blog

© EleutherAI BlogResearchresearch

Normalization Methods for LM Evaluation Discussed

The EleutherAI Blog outlines various normalization methods for evaluating multiple-choice tasks on autoregressive language models such as GPT-3 and Neo. The post aims to clarify the currently prevalent techniques in this area.

EleutherAI Blog

© EleutherAI Blog

© EleutherAI BlogResearchresearch

Evaluating Rotary Position Embeddings

The article compares Rotary Position Embedding with GPT-style learned position embeddings, focusing on their performance in downstream tasks.

EleutherAI Blog

© EleutherAI Blog

© EleutherAI BlogResearchresearch

OpenAI API Models Size Analysis

The EleutherAI Blog discusses how to deduce the sizes of OpenAI API models based on their performance using an evaluation harness.

EleutherAI Blog

© EleutherAI Blog

© EleutherAI BlogResearchresearch

Evaluating Few-Shot Prompts on GPT-3

The article assesses various few-shot description prompts used with GPT-3 to analyze their impact on performance.

EleutherAI Blog

© EleutherAI Blog

© EleutherAI BlogResearchresearch

Finetuning GPT-Neo on Eval Harness Tasks

EleutherAI conducted experiments to finetune GPT-Neo on various eval harness tasks to assess performance changes.

EleutherAI Blog

© EleutherAI Blog

© EleutherAI BlogResearchresearch

Ablation Study on Activation Functions in GPT Models

The EleutherAI Blog discusses an ablation study focusing on activation functions in GPT-like autoregressive language models. This research aims to understand the impact of different activation functions on model performance.

EleutherAI Blog

© EleutherAI Blog

© EleutherAI BlogResearchresearch

New Rotary Positional Embedding Introduced

The EleutherAI Blog discusses Rotary Positional Embedding (RoPE), a novel position encoding method that combines absolute and relative approaches, and shares test results.

EleutherAI Blog