
Optimizing Inference Speed and Costs in AI Deployments

Together AI Blog

Why it matters

- Optimizing inference can lead to significant cost savings for AI deployments.

- Improved latency and throughput enhance user experience in real-time applications.

- Efficient resource utilization allows teams to handle unpredictable traffic without overprovisioning.

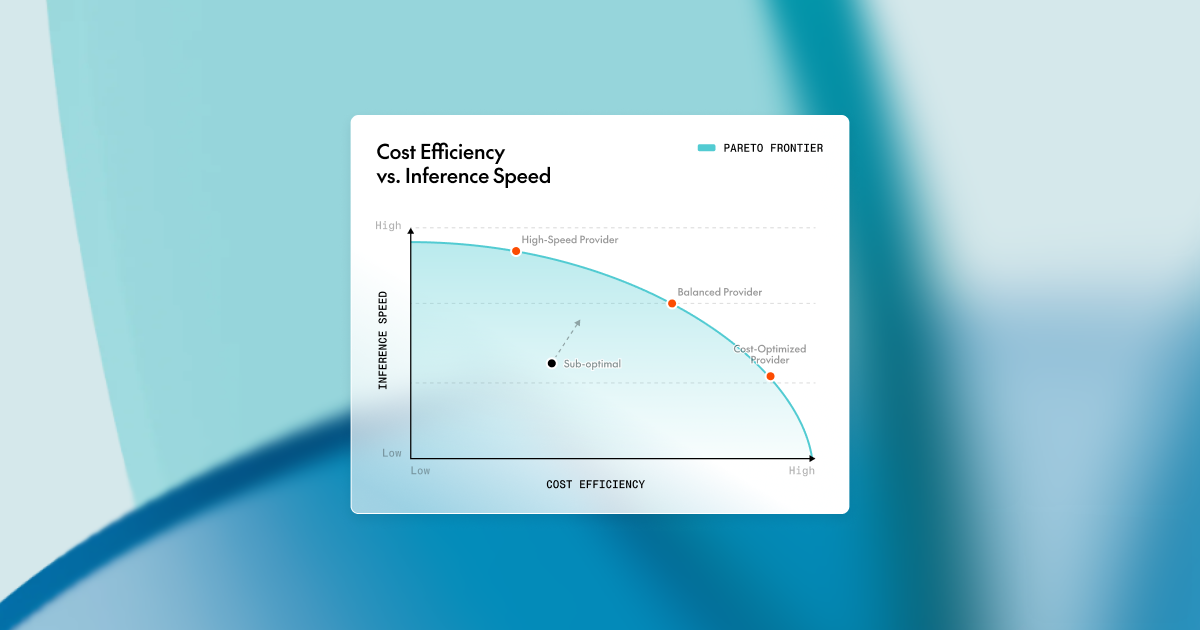

Together AI has outlined key strategies for optimizing inference speed and cost in AI deployments. The company emphasizes maximizing GPU utilization, eliminating compute stalls, and selecting appropriate decoding techniques to achieve low latency and cost efficiency. Techniques such as quantization and distillation can significantly improve throughput while maintaining output quality. Applying these optimizations lets teams improve user experience while keeping costs under control.
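To make the quantization idea concrete, here is a minimal sketch of symmetric per-tensor int8 quantization in plain Python. It is an illustration under stated assumptions, not Together AI's method: the function names `quantize_int8` and `dequantize_int8` and the sample weights are hypothetical, and production systems typically quantize per channel or per group using optimized kernels.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    # One shared scale for the whole tensor; fall back to 1.0 for all-zero input.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize_int8(quantized, scale):
    """Recover approximate float values from the int8 codes."""
    return [q * scale for q in quantized]


# Hypothetical weights, chosen only for illustration.
weights = [0.5, -1.27, 0.03, 1.0]
quantized, scale = quantize_int8(weights)
restored = dequantize_int8(quantized, scale)

# The round-trip error per weight is bounded by half a quantization step.
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print(quantized, scale, max_error)
```

Each weight is stored in 8 bits instead of 16 or 32, which reduces memory traffic; the price is a rounding error of at most half a quantization step per weight. Whether that error leaves output quality intact depends on the model and has to be checked empirically.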

