Research

Mixture-of-Agents Alignment for LLMs

Together AI Blog (medium confidence)

Why it matters

- This approach could make open-source LLMs more effective and reliable, which would benefit AI practitioners who work with these models.

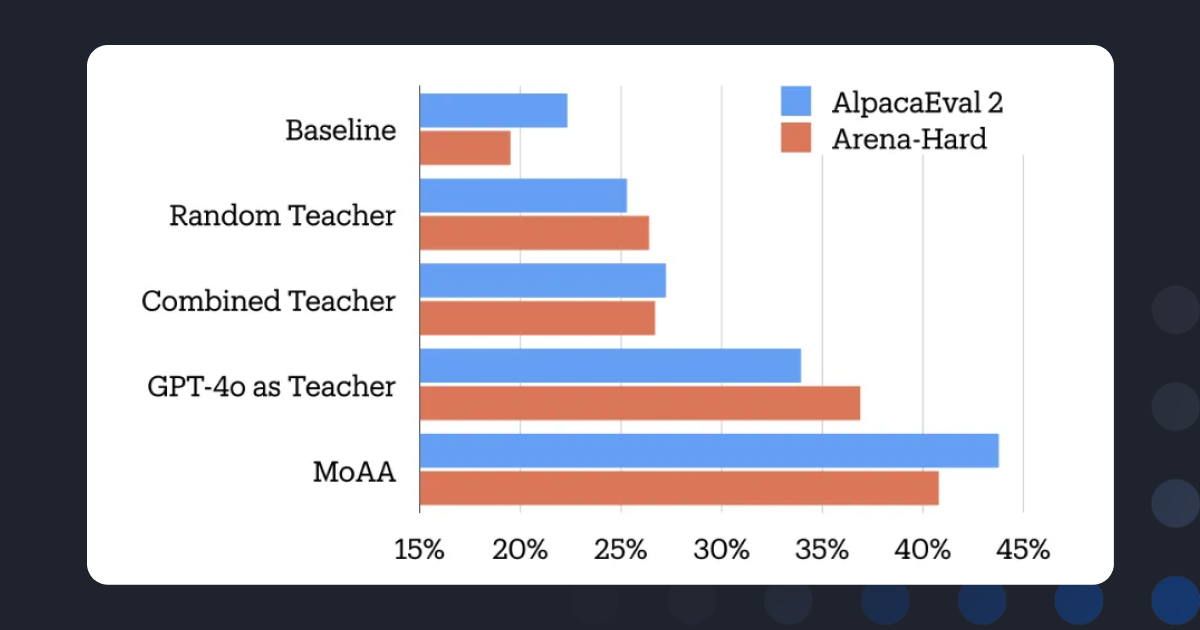

Together AI describes a new approach, Mixture-of-Agents Alignment, that uses collective intelligence to improve the post-training alignment of open-source large language models (LLMs), with the aim of enhancing their performance.

More from Together AI Blog

Together AI Partners with Adaption

Together AI and Adaption have formed a partnership to integrate Together Fine-Tuning into Adaptive Data, enabling teams to optimize datasets and deploy stronger open models.

Together AI Blog
