Research

Weak Models Excel in Long Context Tasks

Together AI Blog (medium confidence)

Why it matters

- Smaller models can be more cost-effective while maintaining performance.

- The 'Divide & Conquer' approach offers a new strategy for handling long-context tasks.

- This research could influence future model design and task processing methodologies.

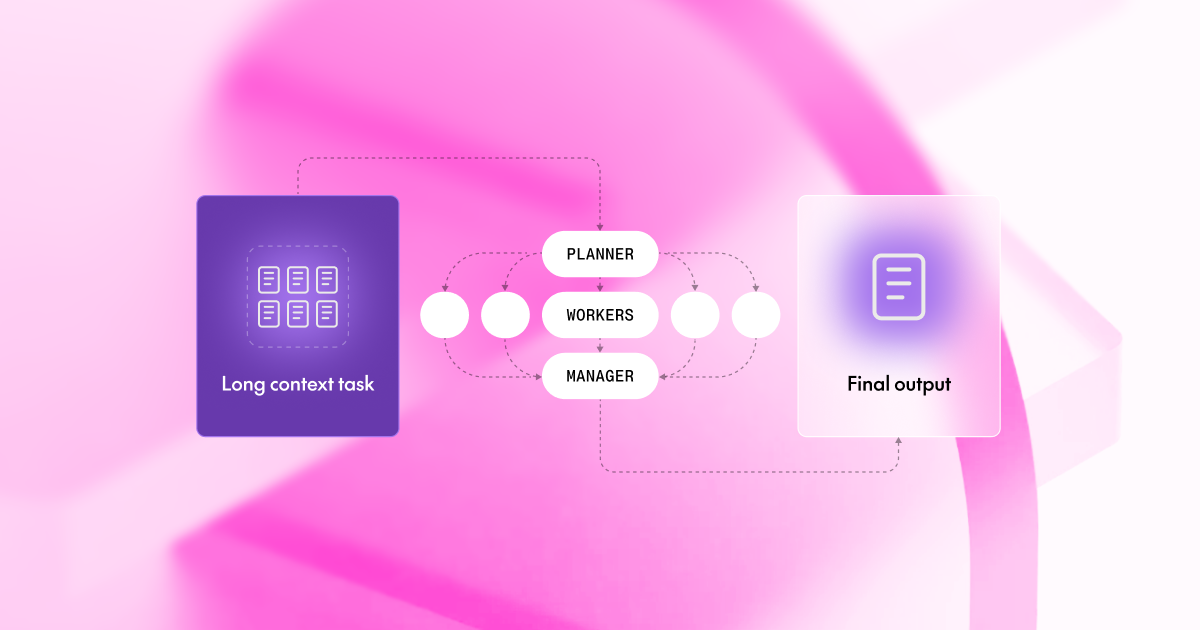

A recent study, 'When Does Divide and Conquer Work for Long Context LLM?', presented at ICLR 2026, reports that smaller models can handle long-context tasks effectively by using a 'Divide & Conquer' strategy. With this approach, these models can match or exceed the performance of larger models such as GPT-4 on extensive inputs. The research identifies three types of noise that affect performance and suggests that dividing a task strategically improves both efficiency and accuracy. The framework also lowers cost and speeds up processing, which makes it useful across a range of applications.
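The general shape of a divide-and-conquer pipeline can be sketched as follows. This is a minimal illustration and not the paper's implementation: the names `split_into_chunks`, `divide_and_conquer`, `count_keyword_worker`, and `sum_aggregator` are hypothetical, chunking by word count is an assumption, and the worker and aggregator are toy functions standing in for calls to a smaller model.

```python
from typing import Callable, List, TypeVar

T = TypeVar("T")
R = TypeVar("R")


def split_into_chunks(text: str, chunk_size: int) -> List[str]:
    """Split text into consecutive chunks of at most chunk_size words."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    words = text.split()
    return [
        " ".join(words[i:i + chunk_size])
        for i in range(0, len(words), chunk_size)
    ]


def divide_and_conquer(
    text: str,
    chunk_size: int,
    worker: Callable[[str], T],
    aggregator: Callable[[List[T]], R],
) -> R:
    """Run worker on each chunk independently, then combine the partial results."""
    chunks = split_into_chunks(text, chunk_size)
    partials = [worker(chunk) for chunk in chunks]
    return aggregator(partials)


# Toy stand-ins (assumptions): in practice each would wrap a model call.
def count_keyword_worker(chunk: str) -> int:
    """Count occurrences of the word 'error' within a single chunk."""
    return chunk.lower().split().count("error")


def sum_aggregator(partials: List[int]) -> int:
    """Combine per-chunk counts into one total."""
    return sum(partials)


document = "ok error ok ok error ok error ok ok ok"
result = divide_and_conquer(document, 3, count_keyword_worker, sum_aggregator)
print(result)
```

Counting decomposes cleanly across chunks, so a simple sum works here. A task whose answer depends on information spread over several chunks would need a more careful aggregation step.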

More from Together AI Blog


Together AI Partners with Adaption

Together AI and Adaption have formed a partnership to integrate Together Fine-Tuning into Adaptive Data, enabling teams to optimize datasets and deploy stronger open models.
