Open Source

llama.cpp b9105 Release Enhances CUDA Integration

llama.cpp Releases | high confidence

Why it matters

- Directly including cuda/iterator removes the CUDA code's dependence on a transitive include from cub/cub.cuh.

- Release builds cover macOS, Linux, and Windows, with Vulkan and SYCL configurations among others.

- Strengthens llama.cpp's position as a reliable tool for AI development.

The b9105 release of llama.cpp directly includes cuda/iterator in its CUDA code. Previously, the code relied on a transitive include through cub/cub.cuh, which could break if that header stopped pulling in cuda/iterator. The change ships in a release with builds for macOS, Linux, and Windows, with configurations for Vulkan, SYCL, and more. The release doesn't introduce new model architectures, but it makes the CUDA build less fragile for developers working with diverse hardware setups.

More from llama.cpp Releases

Models & Labs | models

llama.cpp b9103 Release Expands Platform Support

The b9103 release of llama.cpp continues to broaden platform coverage. The Apple Silicon build has KleidiAI enabled, which targets performance on M-series Macs. A ROCm 7.2 build for Ubuntu x64 gives AMD GPU users more options for local inference and narrows the gap with NVIDIA GPUs. This release doesn't introduce new models; it reinforces llama.cpp's role as a runtime that runs across diverse hardware configurations.

llama.cpp Releases

Models & Labs | models

llama.cpp b9109 Release Enhances Drafting Support

The b9109 release of llama.cpp improves parallel drafting for speculative decoding. It refines the speculative contexts and supports multiple spec types, which affects how draft tokens are generated and accepted. Builds are available for macOS, Linux, and Windows, including Apple Silicon with KleidiAI, ROCm 7.2, and CUDA 12 and 13. The release doesn't introduce new model architectures; its focus is on refining existing speculative-processing capabilities.
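To make "acceptance of tokens" concrete, here is a minimal sketch of greedy draft verification as it is commonly described for speculative decoding. It is not llama.cpp's implementation: the function name `accept_draft` and the token-list interface are assumptions chosen for illustration, and the spec types mentioned in the release are not modeled.

```python
from typing import List, Sequence


def accept_draft(draft: Sequence[int], target: Sequence[int]) -> List[int]:
    """Greedy verification of a drafted token run (illustrative only).

    draft  -- n tokens proposed by a small draft model.
    target -- n + 1 tokens chosen by the target model, where target[i] is
              its pick at position i given the prefix plus draft[:i].
    Returns the tokens actually emitted for this step.
    """
    if len(target) != len(draft) + 1:
        raise ValueError("target must hold exactly one more token than draft")

    emitted: List[int] = []
    for drafted, verified in zip(draft, target):
        if drafted != verified:
            # First mismatch: keep the target's token and discard the rest.
            emitted.append(verified)
            return emitted
        emitted.append(drafted)

    # Every drafted token matched, so the target's extra token comes for free.
    emitted.append(target[-1])
    return emitted
```

Under this scheme a step always emits at least one token (the target's own choice), and a fully accepted draft of n tokens emits n + 1.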

llama.cpp Releases

Models & Labs | models

llama.cpp b9112 Release Fixes CUDA Limitations

The b9112 release of llama.cpp fixes a limitation in its CUDA im2col kernel, which previously failed when the output width exceeded 65535. By adjusting the grid dimensions and adding an in-kernel loop, the update lets models like SEANet process longer audio sequences without errors. The fix has been validated on T4 and Jetson Orin, and existing test cases continue to pass.
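The pattern of clamping a grid dimension and looping inside the kernel can be sketched on the CPU. The example below assumes the failure came from mapping the output width onto a grid dimension capped at 65535 (CUDA's limit for the y and z grid dimensions); the summary does not say which dimension was involved. The function names are hypothetical, the im2col is a simplified 1D version without padding or dilation, and the outer `for` loop stands in for CUDA blocks.

```python
from typing import List

MAX_GRID_DIM = 65535  # CUDA's limit for gridDim.y and gridDim.z


def out_width(n: int, kw: int, stride: int) -> int:
    """Number of output columns for a 1D window of size kw (no padding)."""
    return (n - kw) // stride + 1


def im2col_1d_reference(x: List[float], kw: int, stride: int) -> List[List[float]]:
    """Straightforward im2col: row k holds x[o * stride + k] for each column o."""
    ow = out_width(len(x), kw, stride)
    return [[x[o * stride + k] for o in range(ow)] for k in range(kw)]


def im2col_1d_clamped(x: List[float], kw: int, stride: int,
                      max_grid: int = MAX_GRID_DIM) -> List[List[float]]:
    """Same result, but with the simulated grid clamped to max_grid blocks."""
    ow = out_width(len(x), kw, stride)
    cols = [[0.0] * ow for _ in range(kw)]
    grid = min(ow, max_grid)          # never launch more blocks than allowed
    for block in range(grid):         # stands in for blockIdx along one axis
        o = block
        while o < ow:                 # in-kernel loop: each block strides by grid
            for k in range(kw):
                cols[k][o] = x[o * stride + k]
            o += grid
    return cols
```

With the clamp in place, an output width above 65535 is handled by having each simulated block process several columns instead of requiring one block per column.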

llama.cpp Releases

More in Open Source

© Matt Wolfe

Open Source | models

DeepSeek V4 Offers Cost-Effective AI Solution

DeepSeek V4 is an open-source AI model offering near state-of-the-art capabilities at a significantly lower cost than competitors.

Matt Wolfe

Open Source | image

vLLM Releases v0.18.2rc0 Update

The v0.18.2rc0 release includes a fix for handling the max_pixels parameter in the PaddleOCR-VL image processor across transformations.

vLLM Releases

© Lev Selector

Open Source | coding

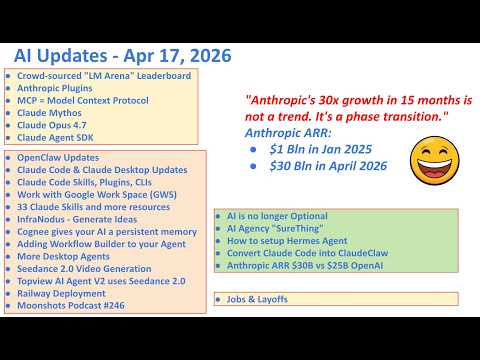

Anthropic Launches 33 Open Source Plugins

Anthropic has released a suite of plugins that enhance the Claude ecosystem.

Lev Selector