Open Source

llama.cpp b9030 Release Expands Platform Support

llama.cpp Releases · high confidence

Why it matters

- Broadens the set of platforms llama.cpp ships builds for.

- Adds a macOS Apple Silicon build with KleidiAI enabled, aimed at faster CPU inference.

- Offers Windows builds for both CUDA 12 and CUDA 13 on NVIDIA GPUs.

The b9030 release of llama.cpp broadens its platform coverage. It adds a macOS Apple Silicon build with KleidiAI enabled; KleidiAI is Arm's library of optimized CPU kernels, so this build targets faster inference on Apple Silicon Macs. On Windows, builds are available for both CUDA 12 and CUDA 13, so NVIDIA GPU users can run llama.cpp with either CUDA version. The release introduces no new models; its focus is making llama.cpp usable on more systems.

More from llama.cpp Releases

Models & Labs · models

llama.cpp b9041 Release Expands Platform Support

The b9041 release continues llama.cpp's push toward broader platform compatibility. It ships a macOS Apple Silicon build with KleidiAI enabled and expands Vulkan and ROCm 7.2 support on Ubuntu. It adds no new models; the work goes into making the runtime adapt to more hardware configurations. The Vulkan and ROCm builds in particular strengthen llama.cpp's position as an inference runtime for developers who want options beyond NVIDIA's CUDA ecosystem.

llama.cpp Releases

Models & Labs · models

llama.cpp Adds Granite-Speech Support

The latest llama.cpp update adds support for IBM's Granite-Speech, extending the runtime from text to audio input. The integration includes a Conformer encoder with Shaw relative position encoding and a QFormer projector that compresses the encoder's audio features into the LLM's embedding space. The implementation is reported to match Hugging Face Transformers output token for token on audio clips, a strong sign that the port is faithful to the reference model. With this addition, developers can use llama.cpp for speech workloads as well as text.
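Shaw relative position encoding (Shaw et al., 2018) replaces absolute position embeddings with learned vectors indexed by the clipped distance between two positions. The sketch below shows the key-side term of that general technique in plain Python. It is an illustration of the method, not llama.cpp's or IBM's implementation; the function names and the max_dist clipping parameter are hypothetical choices for this example, and the value-side term from the original paper is omitted.

```python
import math


def relative_index(i, j, max_dist):
    # Clip the signed distance j - i to [-max_dist, max_dist],
    # then shift it into the range [0, 2 * max_dist] for table lookup.
    return max(-max_dist, min(max_dist, j - i)) + max_dist


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def shaw_attention_logits(queries, keys, rel_key_emb, max_dist):
    """Attention logits with Shaw-style relative key embeddings.

    queries, keys: lists of equal-length vectors (length d).
    rel_key_emb: 2 * max_dist + 1 learned vectors of length d,
                 one per clipped relative distance (hypothetical layout).
    """
    assert len(rel_key_emb) == 2 * max_dist + 1
    d = len(queries[0])
    scale = 1.0 / math.sqrt(d)
    logits = []
    for i, q in enumerate(queries):
        row = []
        for j, k in enumerate(keys):
            a = rel_key_emb[relative_index(i, j, max_dist)]
            # e_ij = q_i . (k_j + a_ij) / sqrt(d)
            row.append(scale * dot(q, [kv + av for kv, av in zip(k, a)]))
        logits.append(row)
    return logits
```

Because every distance beyond max_dist shares a single embedding, the number of learned vectors stays at 2 * max_dist + 1 regardless of sequence length, which is one reason the scheme suits long audio sequences.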

llama.cpp Releases

Open Source · models

llama.cpp b9047 Release Focuses on Device Memory Handling

The b9047 release of llama.cpp refines how device memory is handled, particularly for unknown GPUs. The memory fit value for unknown GPUs is now set to zero, and a fallback is kept for non-GPU devices; both changes are aimed at stability and reliability. Release builds continue to cover macOS Apple Silicon with KleidiAI enabled, Ubuntu with ROCm 7.2, and Windows with CUDA 12 and 13. The release adds no major features, but these refinements make llama.cpp more dependable across different hardware environments.

llama.cpp Releases

More in Open Source

© Matt Wolfe

Open Source · models

DeepSeek V4 Offers Cost-Effective AI Solution

DeepSeek V4 is an open-source AI model offering near state-of-the-art capabilities at a significantly lower cost than competitors.

Matt Wolfe

Open Source · image

vLLM Releases v0.18.2rc0 Update

The v0.18.2rc0 release includes a fix for handling the max_pixels parameter in the PaddleOCR-VL image processor across transformations.

vLLM Releases

© Lev Selector

Open Source · coding

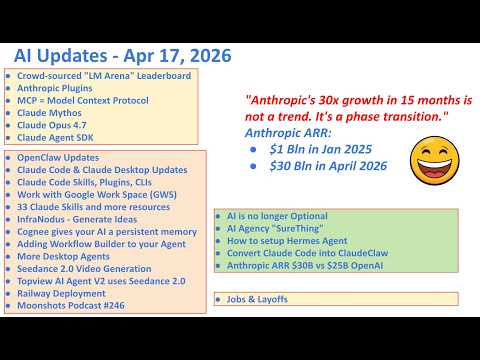

Anthropic Launches 33 Open Source Plugins

Anthropic has released a suite of 33 open-source plugins for the Claude ecosystem.

Lev Selector