Open Source

llama.cpp b9009 Release Expands Platform Support

llama.cpp Releases

Why it matters

- Broadens platform support, giving developers more hardware targets.

- Improves server efficiency by removing unnecessary host copies of checkpoint data.

- Strengthens llama.cpp's position as a general-purpose inference runtime.

The b9009 release of llama.cpp expands platform support, with builds that include macOS on Apple Silicon with KleidiAI and Linux with Vulkan and ROCm 7.2. On the server side, the update improves efficiency by eliminating unnecessary host copies of checkpoint data. No new model architectures are introduced; instead, the release widens the range of hardware llama.cpp can serve as an inference runtime, letting developers deploy AI applications across more configurations.

llama.cpp b9012 Release Enhances Mistral Format Support

The b9012 release of llama.cpp improves handling of the Mistral format in the model conversion script. A fix to boolean parameter handling makes the apply_scale option work reliably. Builds are available for macOS, Linux, and Windows, covering hardware and backends such as Apple Silicon and Vulkan. No new models are introduced; the update instead makes the existing conversion tooling more robust for developers deploying models.

llama.cpp Releases

b9014 Release Adds Layer Norm Ops to ggml-webgpu

The b9014 release of llama.cpp adds layer normalization operations to the ggml-webgpu backend's shaders. During development, Kahan summation was introduced to stabilize floating-point accumulation, then reverted to the original summation method for efficiency. The change also eliminates non-contiguous strides in the new shaders. As usual, the release ships builds for platforms including macOS with KleidiAI, Ubuntu with ROCm 7.2, and Windows with CUDA 12 and 13, although the layer norm work itself targets the WebGPU backend. The result is a WebGPU backend that covers more of the operations models need, making llama.cpp more adaptable across hardware setups.

llama.cpp Releases
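The b9014 notes mention Kahan (compensated) summation as a way to stabilize floating-point accumulation in layer norm. As a rough illustration only, the Python sketch below normalizes one row using Kahan-compensated sums for the mean and variance. It is not the ggml-webgpu shader code; the function names kahan_sum and layer_norm and the eps default are hypothetical choices made for this example.

```python
import math
from typing import Iterable, List


def kahan_sum(values: Iterable[float]) -> float:
    """Sum floats with Kahan compensation to limit rounding-error growth."""
    total = 0.0
    comp = 0.0  # rounding error (negated) lost by the previous addition
    for x in values:
        y = x - comp            # re-inject the bits lost last time
        t = total + y           # low-order bits of y may be lost here
        comp = (t - total) - y  # recover exactly what was lost
        total = t
    return total


def layer_norm(row: List[float], eps: float = 1e-5) -> List[float]:
    """Normalize a row to zero mean and (approximately) unit variance.

    Hypothetical sketch: no learned scale or bias is applied, and eps
    guards against division by zero for constant rows.
    """
    n = len(row)
    mean = kahan_sum(row) / n
    var = kahan_sum((x - mean) ** 2 for x in row) / n
    inv_std = 1.0 / math.sqrt(var + eps)
    return [(x - mean) * inv_std for x in row]


if __name__ == "__main__":
    # Ten tiny terms that a plain left-to-right loop would round away.
    vals = [1.0] + [1e-16] * 10
    print(kahan_sum(vals))
    print(layer_norm([1.0, 2.0, 3.0, 4.0]))
```

Each compensated addition costs a few extra floating-point operations and a live register for comp, which is one plausible reason a GPU shader might return to plain summation when the accuracy gain does not justify the cost.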

llama.cpp b9008 Release Expands Platform Support

The b9008 release of llama.cpp continues to broaden platform support. It ships builds for macOS, Linux, Windows, and Android, with notable additions including Vulkan support on Ubuntu and Windows and ROCm 7.2 on Ubuntu. With coverage for Apple Silicon and Intel on macOS and CUDA on Windows, llama.cpp is positioning itself as a go-to runtime for diverse hardware. There are no major new features, but the release reinforces llama.cpp's role as a flexible, accessible inference tool for developers.

llama.cpp Releases

More in Open Source

© Matt Wolfe

DeepSeek V4 Offers Cost-Effective AI Solution

DeepSeek V4 is an open-source AI model offering near state-of-the-art capabilities at a significantly lower cost than competitors.

Matt Wolfe

vLLM Releases v0.18.2rc0 Update

The v0.18.2rc0 release includes a fix for handling the max_pixels parameter in the PaddleOCR-VL image processor across transformations.

vLLM Releases

© Lev Selector

© Lev SelectorOpen Sourcecoding

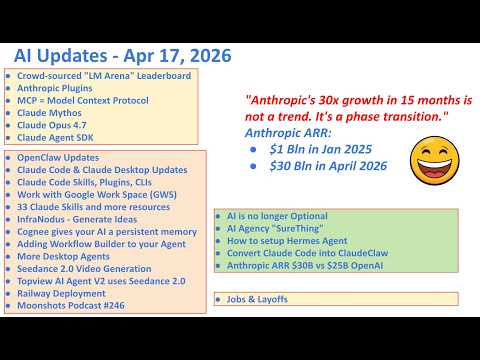

Anthropic Launches 33 Open Source Plugins

Anthropic has released a suite of 33 open-source plugins that extend the Claude ecosystem.

Lev Selector