Open Source

llama.cpp b9118 Release Expands Platform Support

llama.cpp Releases · high confidence

Why it matters

- Expands platform support, making llama.cpp more versatile.

- Introduces Vulkan and ROCm 7.2, offering alternatives to CUDA.

- Enhances performance on Apple Silicon with KleidiAI integration.

The b9118 release of llama.cpp expands support across macOS, Linux, Windows, and Android. Key updates include Vulkan support on both Ubuntu and Windows, as well as ROCm 7.2 for AMD GPUs, giving users options beyond NVIDIA's CUDA. The release also enhances performance on Apple Silicon with KleidiAI integration. Taken together, the changes reinforce llama.cpp's role as an inference tool that runs across a wide range of hardware setups.

More from llama.cpp Releases

Models & Labs · models

llama.cpp b9116 Release Adds MiMo v2.5 Vision

The b9116 release of llama.cpp adds MiMo v2.5 vision support, using fused QKV for improved performance. It also fixes earlier issues such as f16 vision overflow and includes various code cleanups. With platform support spanning macOS, Linux, and Windows, the release remains accessible to developers on diverse systems. The focus on vision capabilities makes llama.cpp more useful to AI developers who want to integrate vision functionality.

llama.cpp Releases

Models & Labs · models

b9119 release addresses Intel GPU performance

The b9119 release of llama.cpp fixes a performance regression for Intel GPU BF16 workloads on Windows, specifically on Xe2 and newer GPUs. The fix restores performance for users on these platforms, particularly when using Vulkan. The release also includes a refactor so that l_warptile is used only when coopmat (cooperative matrix support) is available for BF16. The update introduces no new models or major features, but it continues llama.cpp's work on maintaining and improving performance across diverse hardware configurations.

llama.cpp Releases

Models & Labs · models

llama.cpp b9123 release enhances WebGPU support

The b9123 release of llama.cpp enables running gpt-oss-20b with ggml-webgpu. The update includes a refined mulmat-q function and turns off test-backend-ops in Ubuntu-24-webgpu, indicating a focus on optimizing specific environments. With support for macOS, Linux, Windows, and Android, the release caters to developers working with a variety of hardware. Vulkan, ROCm, and CUDA support further establish llama.cpp as a flexible tool for deploying AI models on different configurations.

llama.cpp Releases

More in Open Source

© Matt Wolfe

Open Source · models

DeepSeek V4 Offers Cost-Effective AI Solution

DeepSeek V4 is an open-source AI model offering near state-of-the-art capabilities at a significantly lower cost than competitors.

Matt Wolfe

Open Source · image

vLLM Releases v0.18.2rc0 Update

The v0.18.2rc0 release includes a fix for handling the max_pixels parameter in the PaddleOCR-VL image processor across transformations.

vLLM Releases

© Lev Selector

Open Source · coding

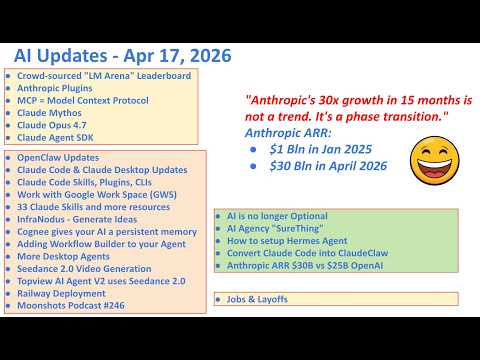

Anthropic Launches 33 Open Source Plugins

Anthropic has released a suite of plugins that enhance the Claude ecosystem.

Lev Selector