Open Source

llama.cpp b9058 Release Expands Platform Support

llama.cpp Releases

Why it matters

- Expands llama.cpp's compatibility across the major operating systems, making it accessible to more developers.

- Adds Vulkan support for Ubuntu and Windows and ROCm 7.2 for Ubuntu, improving AMD GPU compatibility.

- Provides CUDA 12 and CUDA 13 DLLs for Windows, supporting NVIDIA GPU users.

The b9058 release of llama.cpp expands support across macOS, Linux, Windows, and Android. Key updates include KleidiAI support for macOS on Apple Silicon, Vulkan support for both Ubuntu and Windows, and ROCm 7.2 for Ubuntu, which improves AMD GPU compatibility. The release also provides CUDA 12 and CUDA 13 DLLs for Windows for NVIDIA GPU users. Together, these updates let developers run llama.cpp on a wider range of hardware configurations.

More from llama.cpp Releases

Open Source / models

llama.cpp b9056 Release Expands Platform Support

The b9056 release of llama.cpp continues the project's trend of broadening platform compatibility. It includes support for macOS on Apple Silicon with KleidiAI enabled and for several Linux configurations, such as Ubuntu with Vulkan and ROCm 7.2. It also provides CUDA 12 and CUDA 13 DLLs for Windows. The release adds no major new features, but it reinforces llama.cpp's position as a flexible inference runtime for developers using Apple Silicon, AMD, or NVIDIA hardware.

llama.cpp Releases

Open Source / models

llama.cpp b9057 Release Expands Platform Support

The b9057 release of llama.cpp continues the project's trend of broadening platform compatibility, now with q1_0 dot support optimized for RISC-V CPUs. The release covers macOS, Linux, Windows, and Android, with specific builds for Apple Silicon, Vulkan, and CUDA environments, including ROCm 7.2 for Ubuntu x64 and CUDA 13 for Windows x64. No new models are introduced; the release reinforces llama.cpp's position as a versatile inference runtime across multiple architectures.

llama.cpp Releases

Models & Labs / models

llama.cpp b9060 Release Enhances SYCL Operations

The b9060 release of llama.cpp adds several SYCL operations, including FILL, CUMSUM, and DIAG. It also fixes a critical issue that caused aborts during test-backend-ops. In addition, scope_dbg_print has been added to both the new and the existing SYCL operations, which improves debugging support. The release continues to broaden llama.cpp's platform support.

llama.cpp Releases
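For readers unfamiliar with the operation names in the b9060 summary, the sketch below shows what FILL, CUMSUM, and DIAG conventionally compute, using plain Python lists. It is an illustration only: the function names `fill`, `cumsum`, and `diag` are hypothetical, this is not the llama.cpp or SYCL API, and the summary does not describe the exact tensor shapes or axes the real operations use.

```python
from itertools import accumulate


def fill(n, value):
    """FILL (conventional meaning): a length-n vector set to one constant."""
    return [value] * n


def cumsum(xs):
    """CUMSUM (conventional meaning): running total of the elements."""
    return list(accumulate(xs))


def diag(xs):
    """DIAG (conventional meaning): square matrix with xs on the diagonal."""
    n = len(xs)
    return [[xs[i] if i == j else 0 for j in range(n)] for i in range(n)]


if __name__ == "__main__":
    print(fill(3, 2.0))
    print(cumsum([1, 2, 3, 4]))
    print(diag([1, 2]))
```

A backend such as SYCL would implement the same arithmetic as GPU kernels over tensors; the list-based versions above only pin down the expected results.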

More in Open Source

© Matt Wolfe

Open Source / models

DeepSeek V4 Offers Cost-Effective AI Solution

DeepSeek V4 is an open-source AI model offering near state-of-the-art capabilities at a significantly lower cost than competitors.

Matt Wolfe

Open Source / image

vLLM Releases v0.18.2rc0 Update

The v0.18.2rc0 release includes a fix for handling the max_pixels parameter in the PaddleOCR-VL image processor across transformations.

vLLM Releases

© Lev Selector

Open Source / coding

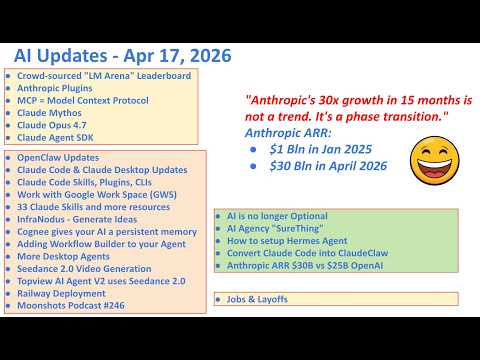

Anthropic Launches 33 Open Source Plugins

Anthropic has released a suite of plugins that enhance the Claude ecosystem.

Lev Selector