Models & Labs

Latest AI signals in this category

Models & Labs: other

New release of llama.cpp b8991

The latest version b8991 of llama.cpp has been released, featuring updates for various operating systems.

llama.cpp Releases

Models & Labs: other

llama.cpp update enhances compatibility and performance

The latest update to llama-mmap improves compatibility with various platforms and model sizes. Key enhancements include support for 32-bit wasm and updates to gguf.cpp style.

llama.cpp Releases

Models & Labs: image

ChatGPT Images 2.0 Gains Popularity in India

OpenAI's ChatGPT Images 2.0 has become popular in India, but global engagement remains modest. The tool allows users to create detailed visuals and has seen significant downloads in emerging markets.

TechCrunch AI

Models & Labs: other

OpenAI limits access to GPT-5.5 Cyber tool

OpenAI is rolling out its cybersecurity tool, GPT-5.5 Cyber, initially restricting access to critical cyber defenders only.

TechCrunch AI

Models & Labs: other

Musk reveals xAI used OpenAI models for Grok

Elon Musk testified that xAI utilized OpenAI's models to enhance its own AI system, Grok, during a federal court case. This involves model distillation, a method where a larger model teaches a smaller one.

The Verge AI

Models & Labs: other

Musk testifies xAI trained Grok on OpenAI models

Elon Musk has testified that xAI's Grok was trained using models from OpenAI. This revelation highlights ongoing discussions about model distillation and competition in AI.

TechCrunch AI

Models & Labs: other

v0.19.0rc0 Release with CPU KV Cache Offloading

The v0.19.0rc0 release introduces a feature for CPU key-value cache offloading, enhancing performance. This update was signed off by Yifan Qiao.

vLLM Releases

Models & Labs: other

v0.19.0rc1 Release Announcement

The v0.19.0rc1 release includes a bug fix that restricts TRTLLM attention to SM100, addressing issues with GB300 (SM103).

vLLM Releases

Models & Labs: models

vLLM v0.19.0 Released with New Features

The vLLM v0.19.0 release includes 448 commits and introduces support for Google Gemma 4 architecture, async scheduling with speculative decoding, and various model enhancements. It also improves compatibility with HuggingFace Transformers v5.

vLLM Releases

Models & Labs: other

Gemma4 Implementation Cleaned Up in v0.19.1rc0

The release v0.19.1rc0 includes a cleanup of the Gemma4 implementation, as noted in the commit message. This update was signed off by Isotr0py.

vLLM Releases

Models & Labs: other

v0.19.1 Patch Release with Bug Fixes

The v0.19.1 patch release includes an upgrade to Transformers v5.5.3 and various bug fixes for the Gemma4 tool, addressing issues such as JSON errors and streaming tool call corruption.

vLLM Releases

Models & Labs: other

v0.19.2rc0 Bugfix for GLM-ASR Released

A bugfix has been released for the k_proj's bias in GLM-ASR as part of version v0.19.2rc0.

vLLM Releases

Models & Labs: other

v0.20.0rc1 Release Announcement

The v0.20.0rc1 release reverts a previous change regarding the common requirements for pyav and soundfile.

vLLM Releases

Models & Labs: other

vLLM v0.20.0 Released with Major Updates

The vLLM v0.20.0 release includes 752 commits and introduces support for DeepSeek V4, upgrades to CUDA 13.0, and compatibility with PyTorch 2.11. Additionally, it adds support for Python 3.14 and HuggingFace transformers version 5.

vLLM Releases

Models & Labs: other

OpenAI-compatible API updates with system_fingerprint

The release of v0.20.1rc0 introduces a new system_fingerprint field to API responses, enhancing compatibility with OpenAI's API. This update was co-authored by Claude from Anthropic.

vLLM Releases

Models & Labs: other

New Release of llama.cpp with CUDA Support

The latest release of llama.cpp includes support for various platforms and CUDA enhancements, including fusing operations for improved performance. It is now compatible with multiple operating systems including macOS, Linux, Android, and Windows.

llama.cpp Releases

Models & Labs: other

llama.cpp Releases New Version with Multi-Platform Support

The latest release of llama.cpp includes updates for various platforms including macOS, Linux, Android, and Windows, with specific enhancements for Apple Silicon and CUDA support.

llama.cpp Releases

Models & Labs: other

llama.cpp Updates Model Checkpoints and Compatibility

The latest release of llama.cpp includes fixes for draft model checkpoints and enhances compatibility across various operating systems and architectures, including macOS, Linux, Android, and Windows.

llama.cpp Releases

Models & Labs: other

New Release of llama.cpp with Fast Matmul Iquants

The latest release of llama.cpp introduces fast matmul iquants and expands support across various platforms including macOS, Linux, Android, and Windows.

llama.cpp Releases

Models & Labs: other

Hertility CEO Discusses Women's Health Model

Helen O’Neill, CEO of Hertility, talks about the development of a foundational model aimed at improving women's health. The initiative focuses on leveraging AI to enhance healthcare solutions for women.

Sifted

Models & Labs: other

Goodfire launches Silico for LLM debugging

Goodfire has introduced Silico, a mechanistic interpretability tool that allows researchers to debug and adjust AI model parameters during training. This tool aims to enhance the understanding and control over AI model development.

MIT Technology Review AI

Models & Labs: other

OpenAI addresses goblin references in models

OpenAI has acknowledged a peculiar trend in its models, particularly GPT-5.1, in which they avoid discussing goblins and similar creatures. The issue drew attention after a Wired report, and OpenAI subsequently published an explanation on its website.

The Verge AI

Models & Labs: models

OpenAI to Launch GPT-5.5-Cyber for Select Users

OpenAI is set to release a new cybersecurity model, GPT-5.5-Cyber, exclusively for trusted cyber defenders. The rollout will begin in the coming days, focusing on enhancing institutional cyber defenses.

The Verge AI

Models & Labs: other

Together AI Partners with Adaption

Together AI and Adaption have formed a partnership to integrate Together Fine-Tuning into Adaptive Data, enabling teams to optimize datasets and deploy stronger open models.

Together AI Blog

Models & Labs: models

GPT-5 Goblin Outputs Explained

OpenAI discusses the origins and solutions for personality-driven quirks in GPT-5, referred to as 'goblin outputs'. The timeline and root causes of these behaviors are also outlined.

OpenAI

Models & Labs: models

SenseTime Releases Speed-Optimized Image Model

Chinese AI firm SenseTime has launched a new image model designed for speed, focusing on compatibility with Chinese-made chips due to US tech restrictions. The model emphasizes open-source development.

WIRED AI

Models & Labs: models

OpenAI Expands Stargate Compute Infrastructure

OpenAI has scaled its Stargate system to enhance the compute infrastructure necessary for advancing artificial general intelligence (AGI), adding new data center capacity to accommodate increasing AI demand.

OpenAI

Models & Labs: models

OpenAI Launches GPT-5.5 as Advanced AI Model

OpenAI has released GPT-5.5, claiming it to be the most capable agentic AI model to date, designed for independent task execution. The model shows improved performance on various benchmarks compared to its predecessors.

AI News

Models & Labs: other

DeepSeek-V4 Pro Launches on Together AI

DeepSeek-V4 Pro has been released on Together AI, featuring 512K context, controllable reasoning modes, and cached-input pricing for various applications including code agents and document intelligence.

Together AI Blog

Models & Labs: models

IBM launches AI platform Bob for SDLC governance

IBM has introduced Bob, an AI platform designed to manage software delivery costs and enhance governance in the software development lifecycle (SDLC). The platform aims to address challenges posed by technical debt and fragmented development processes.

AI News

Models & Labs: agents

NVIDIA Launches Nemotron 3 Nano Omni Model

NVIDIA has introduced the Nemotron 3 Nano Omni, an open multimodal model that integrates vision, audio, and language capabilities into a single system, enhancing the efficiency of AI agents. This model is designed for enterprises and developers, offering improved accuracy and scalability for multimodal AI applications.

NVIDIA Blog

Models & Labs: other

NVIDIA Introduces Simulation-First Era for Manufacturing

NVIDIA's blog discusses the shift in manufacturing towards high-fidelity simulation for AI training, facilitated by OpenUSD and NVIDIA Omniverse. This new approach allows for more accurate perception systems and agentic workflows in factory environments.

NVIDIA Blog

Models & Labs: models

Kakao Mobility Unveils Level 4 Autonomous Driving Roadmap

Kakao Mobility has announced its plans for developing Level 4 autonomous driving technologies as part of its physical AI strategy, presented at the 2026 World IT Show. The roadmap focuses on machine learning models, vehicle redundancy, and validation systems to enhance autonomous driving capabilities.

AI News

Models & Labs: other

Together AI Launches NVIDIA Nemotron 3 Nano Omni

Together AI has made the NVIDIA Nemotron 3 Nano Omni available to developers, a model designed for reasoning across multiple media types including video, images, audio, and text.

Together AI Blog

Models & Labs: models

DeepSeek Launches Affordable V4 AI Model

DeepSeek has introduced its V4 AI model, featuring strong open-source performance and competitive pricing. The model supports Huawei chips and offers a 1M-token context window, positioning it as a cost-effective alternative to leading competitors.

The Rundown AI

Models & Labs: models

DeepSeek launches new open-source AI model V4

Chinese AI firm DeepSeek has released a preview of its new flagship model, V4, which can process longer prompts and is open source. This release follows the success of its previous model, R1, and aims to provide advanced AI capabilities at lower costs.

MIT Technology Review AI

Models & Labs: models

OpenAI launches GPT-5.5 'Spud', surpasses Claude

OpenAI has released its new model, GPT-5.5, codenamed 'Spud', which has achieved high benchmark scores and overtaken Anthropic's Claude in performance. The model is designed to be more efficient and cost-effective compared to its competitors.

The Rundown AI

Models & Labs: models

AI Models Utilize Real-Time Cryptocurrency Data

AI systems are adapting to real-time cryptocurrency data, which presents both challenges and opportunities for market interpretation. The dynamic nature of cryptocurrency markets requires models to process continuous updates rather than relying on static datasets.

AI News

Models & Labs: coding

OpenAI's GPT-5.5 Powers Codex at NVIDIA

OpenAI's latest model, GPT-5.5, is now powering Codex, OpenAI's coding application, which is being used by over 10,000 NVIDIA employees across various departments. The integration is reported to significantly enhance productivity and reduce debugging times.

NVIDIA Blog

Models & Labs: agents

NVIDIA and Google Cloud Enhance AI Infrastructure

NVIDIA and Google Cloud have announced advancements in their collaboration to improve agentic and physical AI, introducing new infrastructure and services at Google Cloud Next. This includes the launch of A5X bare-metal instances powered by NVIDIA Vera Rubin and enhancements to the Google Gemini platform.

NVIDIA Blog

Models & Labs: image

OpenAI Launches ChatGPT Images 2.0

OpenAI has released ChatGPT Images 2.0, an upgraded image generation model that plans, searches the web, and checks outputs before generating images. This model has taken the top spot on Arena AI's text-to-image leaderboard, surpassing Google's Nano Banana.

The Rundown AI

Models & Labs: models

Future of LLMs: Introducing LLMs+

OpenAI's ChatGPT has sparked a race for improved LLMs, termed LLMs+, which aim to solve complex problems more efficiently. Innovations include mixture-of-experts and potential shifts to diffusion models for enhanced performance.

MIT Technology Review AI

Models & Labs: models

Brin leads DeepMind to compete with Anthropic's Claude

Sergey Brin is spearheading a new DeepMind team to enhance Gemini's coding capabilities to compete with Anthropic's Claude. This initiative aims to develop self-improving AI systems by focusing on coding as a critical skill.

The Rundown AI

Models & Labs: models

Anthropic launches Claude Design for prototyping

Anthropic has introduced Claude Design, a tool that transforms prompts, screenshots, and codebases into interactive prototypes and marketing materials. This tool integrates with the company's Opus 4.7 vision model and allows users to refine designs collaboratively.

The Rundown AI

Models & Labs: models

OpenAI Updates Codex with Superapp Features

OpenAI has released a significant update to its Codex platform, introducing features such as background computer use, an in-app browser, and parallel agents. This update marks a step towards OpenAI's vision of a comprehensive superapp.

The Rundown AI

© MIT News AI

© MIT News AIModels & Labsmodels

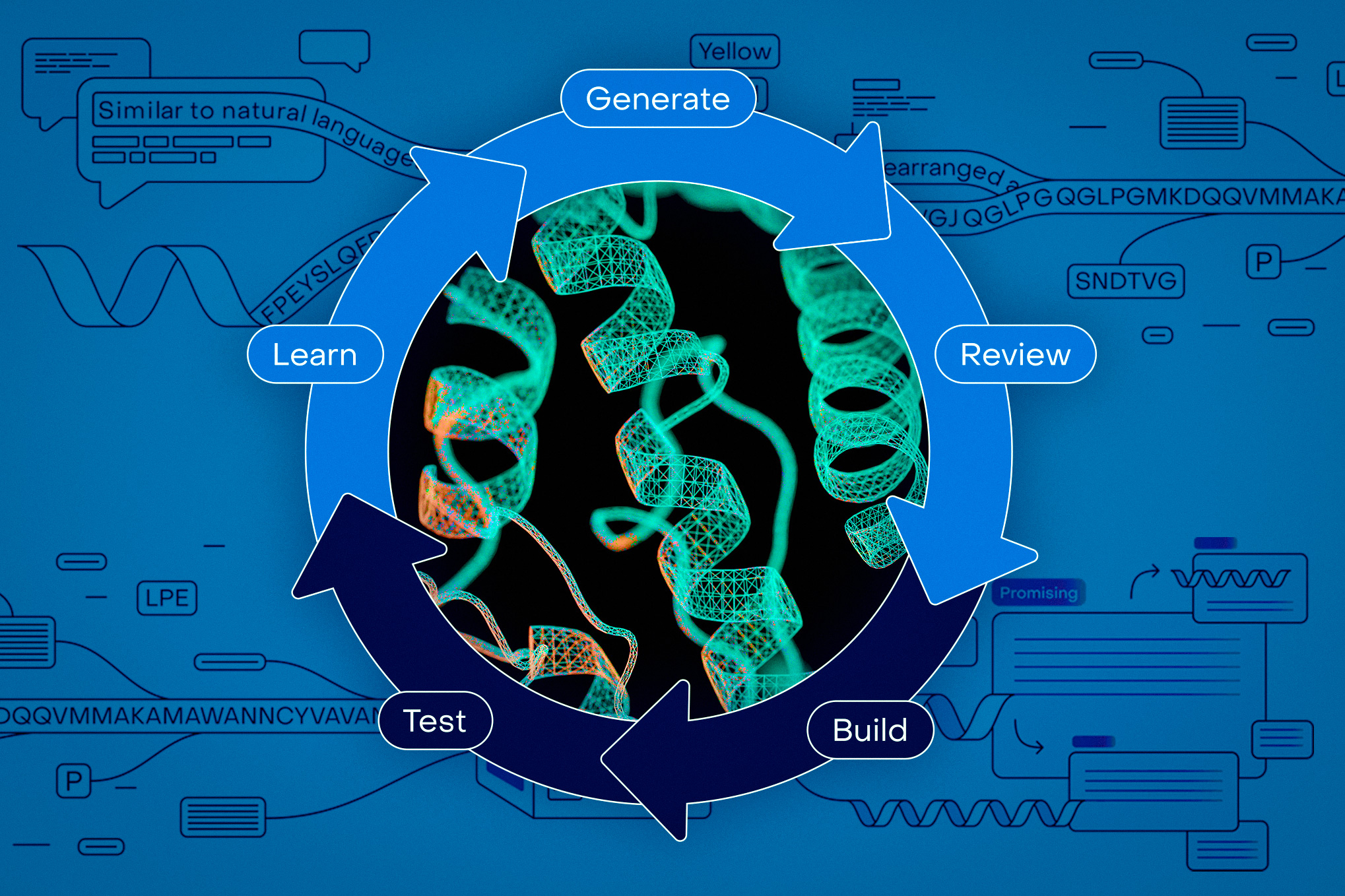

OpenProtein.AI Launches No-Code Protein Design Platform

OpenProtein.AI has introduced a no-code platform that allows biologists to access advanced AI tools for protein design and modeling. The platform aims to bridge the gap between AI technology and biological research, making it easier for scientists without machine-learning expertise to utilize these resources.

MIT News AI

Models & Labs: models

OpenAI Launches GPT-5.4-Cyber for Cyber Defense

OpenAI has introduced GPT-5.4-Cyber, a model designed for defensive security, with broader access than Anthropic's Mythos, which is limited to 40 organizations. The new model can reverse-engineer software to identify malware and security vulnerabilities.

The Rundown AI

Models & Labs: research

Parcae Model Achieves High Performance with Fewer Parameters

Parcae is a stable looped language model that delivers performance comparable to larger models while using fewer parameters. The introduction of scaling laws for looping suggests that increasing recurrence can enhance efficiency in model training.

Together AI Blog

Models & Labs: other

NVIDIA Highlights Robotics Breakthroughs for National Robotics Week

NVIDIA is showcasing advancements in AI for robotics during National Robotics Week, emphasizing new technologies that enhance robot learning and deployment. Key announcements include new models for natural language understanding and improved simulation tools for robotic systems.

NVIDIA Blog

Models & Labs: models

Meta Superintelligence Labs launches Muse Spark model

Meta's Superintelligence Labs has released Muse Spark, a multimodal reasoning model capable of processing voice, text, and image inputs. While it competes with leading models in reasoning, it falls short in coding capabilities.

The Rundown AI

Models & Labs: models

Anthropic unveils powerful AI through Project Glasswing

Anthropic has introduced Claude Mythos Preview, a powerful AI model, as part of Project Glasswing, a cybersecurity coalition with major tech partners. Access to Mythos is restricted to select organizations for defensive security purposes.

The Rundown AI

Models & Labs: agents

NVIDIA Optimizes Gemma 4 for Local AI Execution

NVIDIA has announced enhancements to the Gemma 4 family of models, optimized for efficient local execution on various devices, including NVIDIA GPUs. These models support a wide range of tasks, from coding to multimodal interactions.

NVIDIA Blog

Models & Labs: other

Deepgram STT and TTS Models on Together AI

Deepgram's speech-to-text (STT) and text-to-speech (TTS) models are now available natively on Together AI for real-time voice agents.

Together AI Blog

© Hugging Face Blog

© Hugging Face BlogModels & Labsother

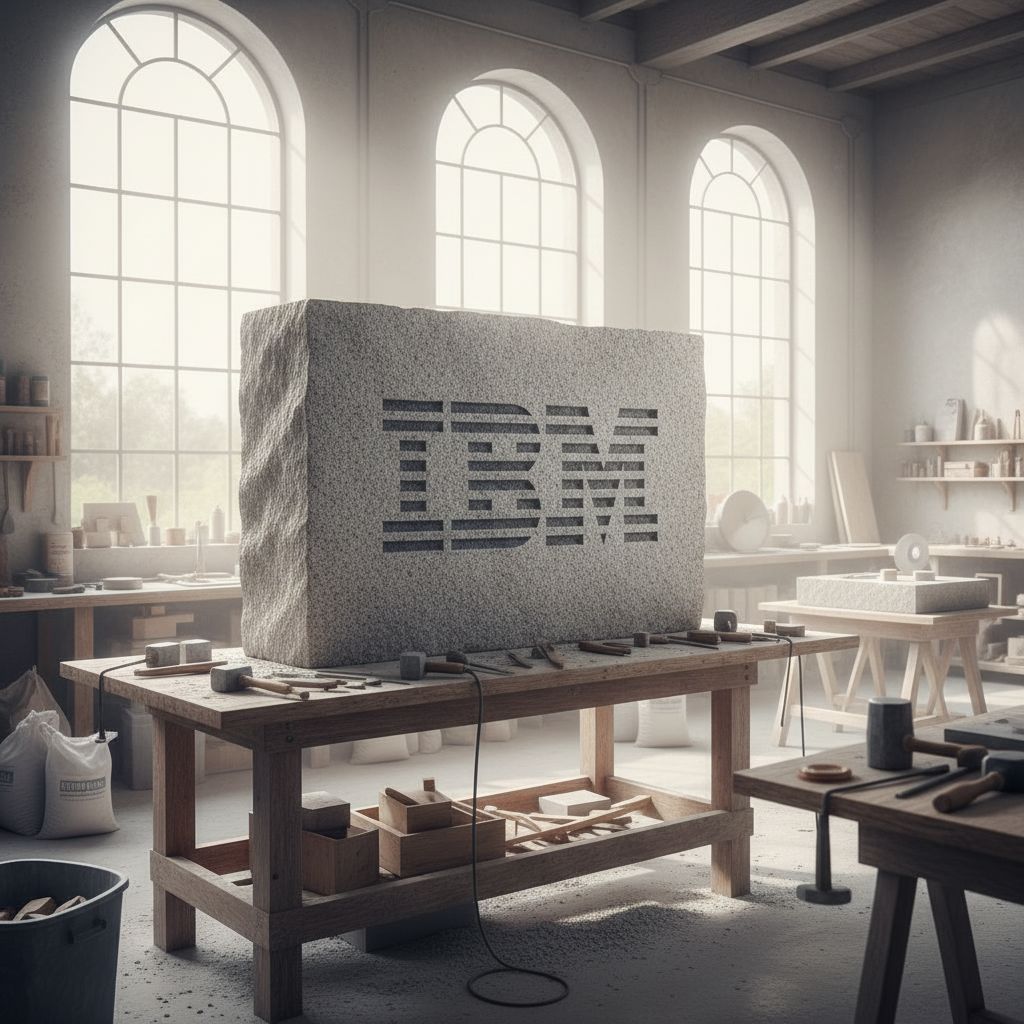

Hugging Face Introduces Falcon Perception Model

Hugging Face has unveiled Falcon Perception, a new early-fusion Transformer model designed for open-vocabulary grounding and segmentation. The model achieves significant performance improvements in image processing tasks.

Hugging Face Blog

© MIT News AI

© MIT News AIModels & Labsmodels

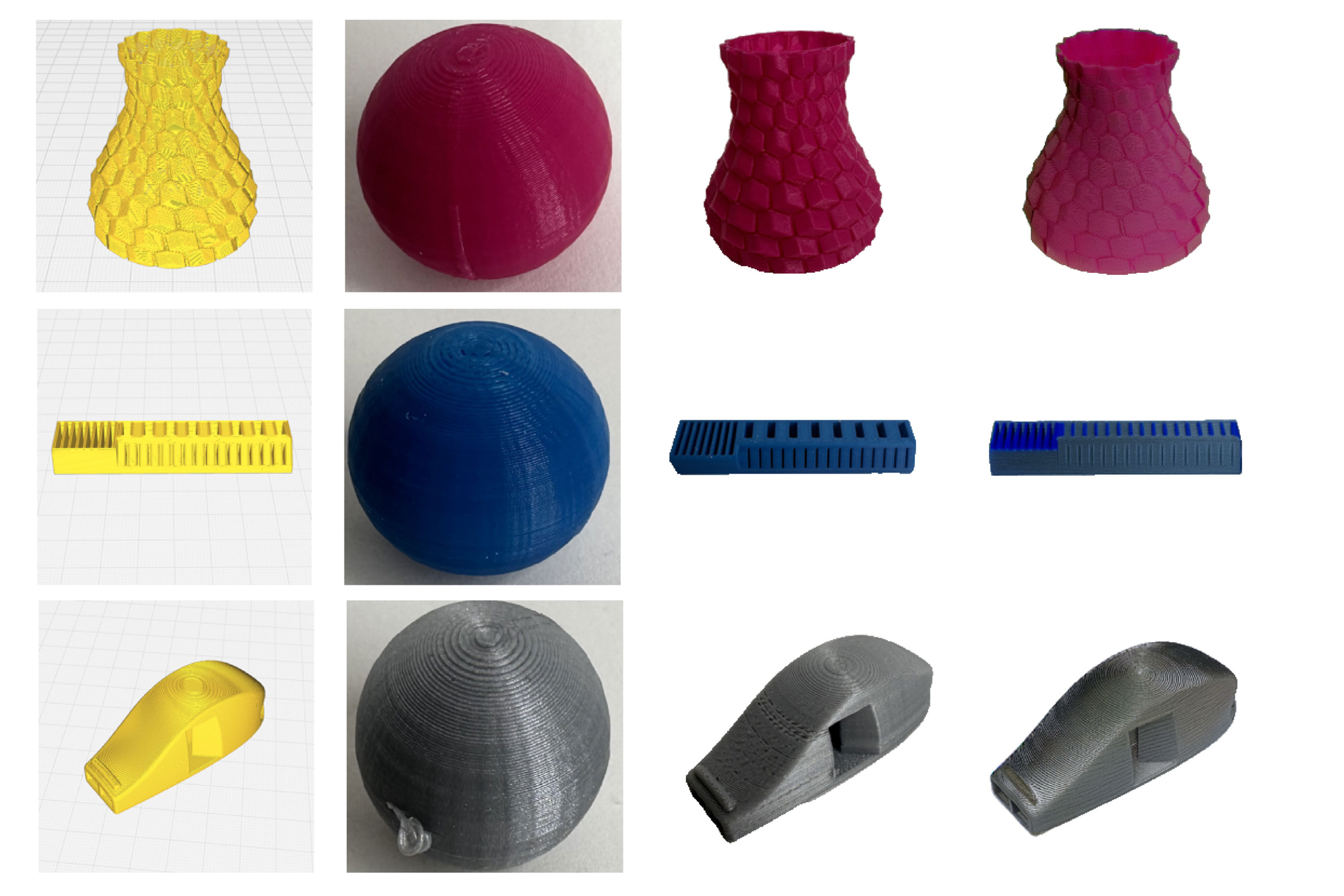

MIT Develops 3D Printing Preview Tool VisiPrint

Researchers at MIT have created VisiPrint, a tool that generates accurate visual previews of 3D-printed objects based on user inputs. This AI-powered system aims to reduce waste in 3D printing by helping users better visualize the final appearance of their prototypes.

MIT News AI

Models & Labs: research

Inside Together AI's Kernels Team

The Together AI blog discusses the team responsible for FlashAttention and ThunderKittens, focusing on their efforts to bridge the gap between GPU hardware and production AI.

Together AI Blog

Models & Labs: other

Ollama Preview on Apple Silicon with MLX

Ollama has announced a preview of its platform optimized for Apple Silicon, utilizing Apple's MLX machine learning framework for improved performance.

Ollama Blog

Models & Labs: models

AI Optimizes Robot Traffic in Warehouses

MIT researchers and Symbotic developed an AI system that improves the efficiency of warehouse robots by managing traffic flow and preventing congestion. The system uses deep reinforcement learning to prioritize robot movements, achieving a 25% increase in throughput during simulations.

MIT News AI

Models & Labs: models

TurboQuant Introduces Extreme Compression for AI

Google Research has unveiled TurboQuant, a new approach aimed at enhancing AI efficiency through extreme compression techniques. This development could significantly reduce the resource requirements for AI models.

Google Research Blog

Models & Labs: other

Together AI expands fine-tuning service capabilities

Together AI has enhanced its fine-tuning service by adding support for tool calling, reasoning, and vision-language models, along with improved training capabilities and cost estimates.

Together AI Blog

Models & Labs: models

Mamba-3: New Open-Source Inference Model

Mamba-3 is introduced as a new state space model (SSM) designed for inference, claiming to be faster than Transformers during decoding and stronger than its predecessor, Mamba-2. It has been open source since launch.

Together AI Blog

Models & Labs: agents

Together AI Launches Innovations at NVIDIA GTC 2026

Together AI showcased new developments in inference, agents, voice AI, and open models at NVIDIA GTC 2026, along with technical sessions from its leaders.

Together AI Blog

Models & Labs: models

Google Introduces Groundsource for News Data Conversion

Google has launched Groundsource, a tool that converts news reports into structured data using its Gemini model. This initiative aims to enhance the accessibility and usability of news information for various applications.

Google Research Blog

Models & Labs: models

NVIDIA Nemotron 3 Super Available on Together AI

Together AI has launched NVIDIA Nemotron 3 Super on its Dedicated Inference platform, featuring multi-agent reasoning and a 1M-token context window.

Together AI Blog

.png) © Together AI Blog

© Together AI BlogModels & Labsother

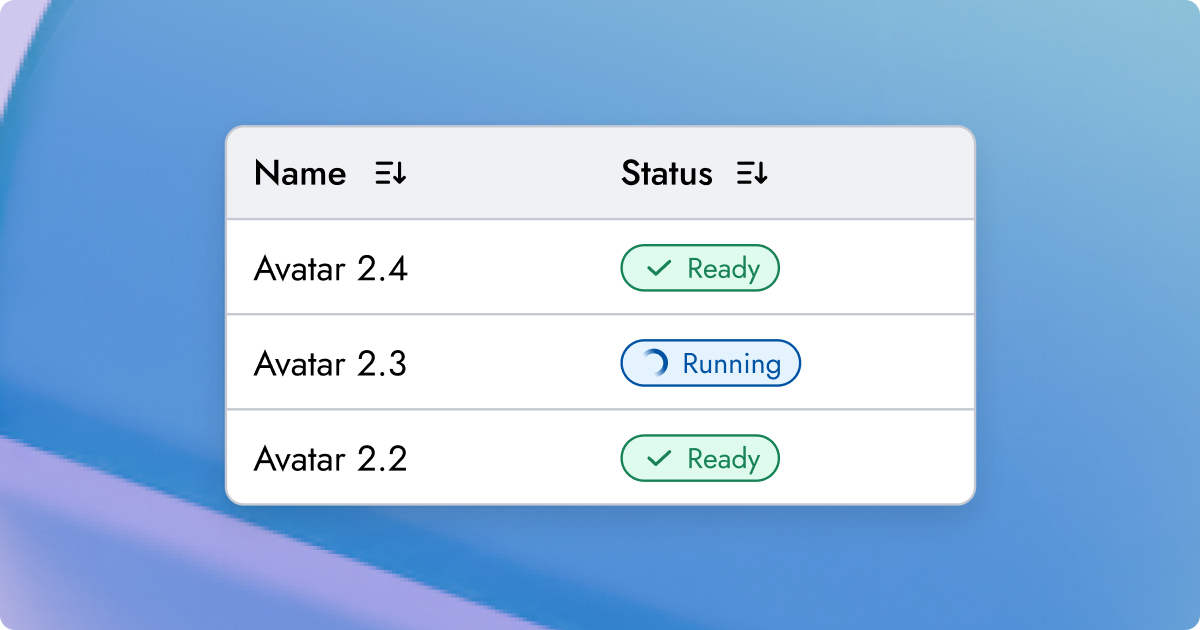

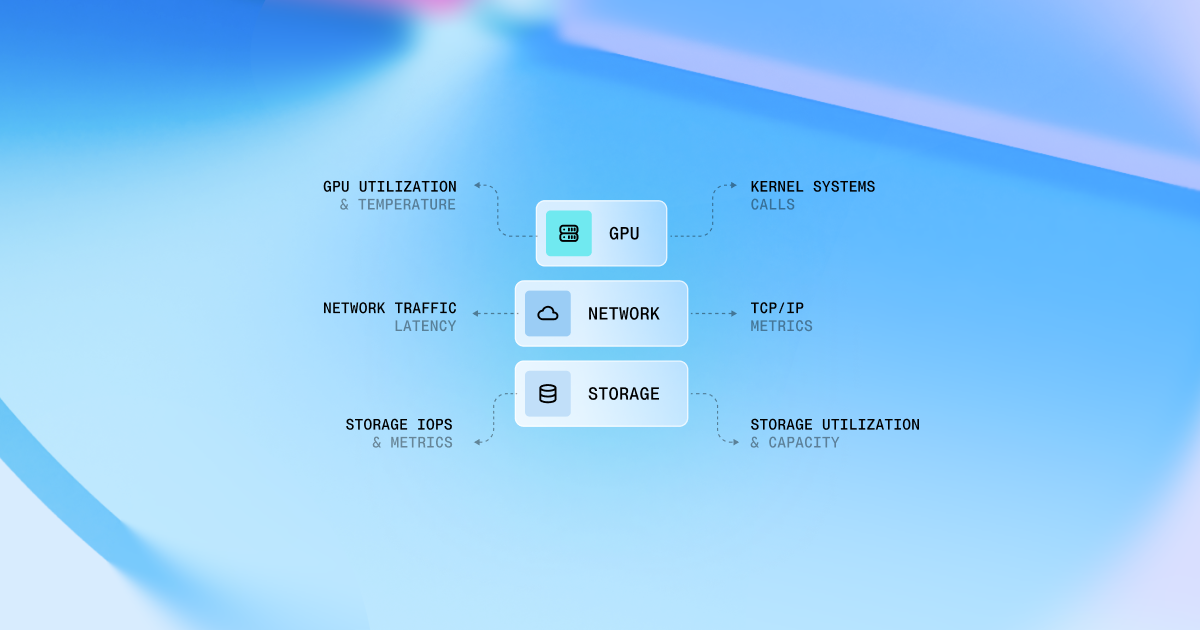

Together GPU Clusters Introduce New Features

Together GPU Clusters have added autoscaling, RBAC, full-stack observability, and self-healing capabilities to enhance production-ready GPU infrastructure for enterprise workloads.

Together AI Blog

Models & Labs: research

AI Native Conf Announces Key Research Breakthroughs

Together AI unveiled significant advancements in kernels, reinforcement learning, and inference optimization at the AI Native Conf, including FlashAttention-4, ThunderAgent, and together.compile.

Together AI Blog

Models & Labs: research

Introduction of FlashAttention-4 Algorithm

FlashAttention-4 introduces new pipelining techniques and hybrid approaches to optimize GPU performance by addressing memory bandwidth limitations.

Together AI Blog

© Together AI Blog

© Together AI BlogModels & Labsresearch

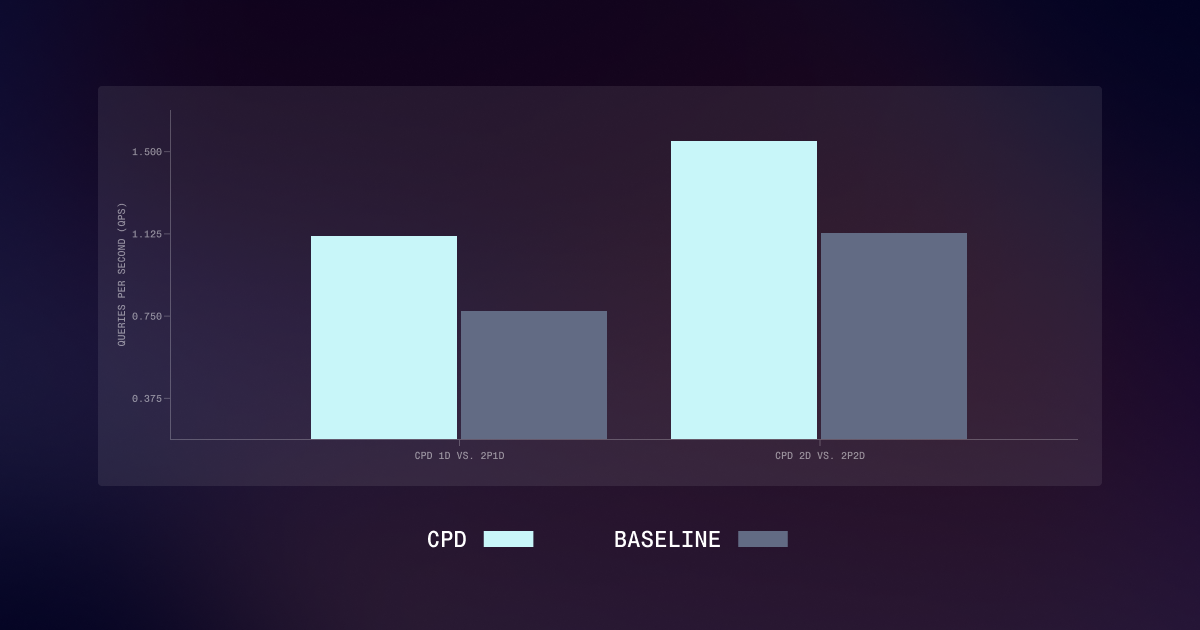

New CPD Architecture Boosts LLM Serving Speed

Together AI introduces a cache-aware architecture called CPD that improves throughput by 40% for long-context LLM serving by separating warm and cold inference workloads.

Together AI Blog

Models & Labs: models

New Method Enhances LLM Training Efficiency

Researchers from MIT developed a method to improve the training efficiency of reasoning large language models (LLMs) by utilizing idle computational resources. This approach can double training speed while maintaining accuracy, potentially reducing costs and energy consumption.

MIT News AI

Models & Labs: image

Prompting Seedream 5.0 for Image Generation

Seedream 5.0 introduces features like multi-step reasoning, example-based editing, and enhanced domain knowledge for image generation. The blog provides insights on how to effectively prompt this model.

Replicate Blog

Models & Labs: research

New CDLM Model Offers Faster Inference

The Consistency Diffusion Language Model (CDLM) improves inference speed by up to 14.5 times without compromising quality, addressing limitations of standard diffusion models regarding KV caching and refinement steps.

Together AI Blog

Models & Labs: image

Recraft V4 Launches for Image Generation

Recraft V4 introduces the ability to generate art-directed images and editable SVGs with strong composition and accurate text rendering. Four models are now available on Replicate.

Replicate Blog

Models & Labs: other

Together AI Launches Faster Inference for Custom Models

Together AI has introduced a production-grade orchestration that delivers 1.4x to 2.6x faster inference for custom AI models.

Together AI Blog

Models & Labs: other

Rime Arcana V3 Turbo Released on Together AI

Together AI has announced the availability of Rime Arcana V3 and Rime Arcana V3 Turbo, enhancing their offerings in AI tools.

Together AI Blog

Models & Labs: research

Open LLM Judges Outperform GPT-5.2

Open-source LLM judges fine-tuned with Direct Preference Optimization have been shown to outperform GPT-5.2 at evaluating model outputs. This was achieved at significantly lower cost and with faster inference.

Together AI Blog

Investment

Models & Labs: other

Together Evaluations Adds Support for Major APIs

Together Evaluations has expanded its platform to include benchmarking for OpenAI, Anthropic, and Google models, allowing users to compare various models side-by-side. This feature aims to facilitate data-driven decisions regarding model quality, cost, and performance.

Together AI Blog

Models & Labs: agents

DSGym Framework for Data Science Agents Introduced

DSGym is a new evaluation and training framework designed for LLM-based data science agents, featuring over 90 bioinformatics tasks and 92 Kaggle competitions. Its 4B model reportedly achieves state-of-the-art performance among open-source models.

Together AI Blog

Models & Labs: models

Google Introduces GIST for Smart Sampling

Google Research has unveiled GIST, a new algorithm designed to enhance smart sampling techniques. This development aims to improve efficiency in data processing and analysis.

Google Research Blog

Models & Labs: image

Ollama Introduces Local Image Generation for macOS

Ollama has launched an experimental feature that allows users to generate images locally on macOS, with plans for Windows and Linux support in the future.

Ollama Blog

Models & Labs: models

MedGemma 1.5 and MedASR Introduced

Google Research has announced the release of MedGemma 1.5 for medical image interpretation and MedASR for medical speech-to-text applications. These tools leverage generative AI to enhance medical diagnostics and documentation.

Google Research Blog

Models & Labs: coding

Cursor and Together AI Enhance Real-Time Inference

Together AI has partnered with Cursor to develop a real-time inference stack aimed at improving the performance of in-editor AI agents. This collaboration involves optimizing NVIDIA Blackwell hardware and software for low latency and efficient model deployment.

Together AI Blog

Models & Labs: research

Scaling Model Training with Multi-Node GPU Clusters

The article discusses techniques for training foundation models at scale using multi-node GPU clusters, covering distributed training methods and infrastructure needs.

Together AI Blog

Models & Labs: coding

NousCoder-14B Released as Open-Source Coding Model

Nous Research launched NousCoder-14B, an open-source coding model that reportedly matches or exceeds larger proprietary systems. The model was trained in four days using Nvidia's B200 processors and achieved a 67.87% accuracy rate on LiveCodeBench v6.

VentureBeat AI

Models & Labs: music

MiniMax Speech 2.6 Turbo Launches on Together AI

Together AI has released MiniMax Speech 2.6 Turbo, a multilingual text-to-speech (TTS) system featuring human-level emotional awareness and low latency. The system supports over 40 languages.

Together AI Blog

Models & Labs: music

Rime TTS Models Launch on Together AI

Together AI has released two enterprise-grade Rime text-to-speech models that can be co-located with large language models and speech-to-text systems on dedicated infrastructure.

Together AI Blog

Models & Labs: models

NVIDIA Nemotron 3 Nano Now Available

NVIDIA has announced the availability of its latest reasoning model, Nemotron 3 Nano, on Together AI, the AI Native Cloud.

Together AI Blog

Models & Labs: models

Titans and MIRAS Enhance AI Long-Term Memory

Google Research has introduced Titans and MIRAS, technologies aimed at improving long-term memory capabilities in generative AI systems. This development could lead to more contextually aware AI interactions.

Google Research Blog

Models & Labs: research

Introducing AutoJudge for Inference Acceleration

AutoJudge accelerates LLM inference by identifying which token mismatches are significant, using self-supervised learning to do so. It achieves speedups of 1.5–2× over traditional speculative decoding methods.

Together AI Blog

© Together AI Blog

© Together AI BlogModels & Labsother

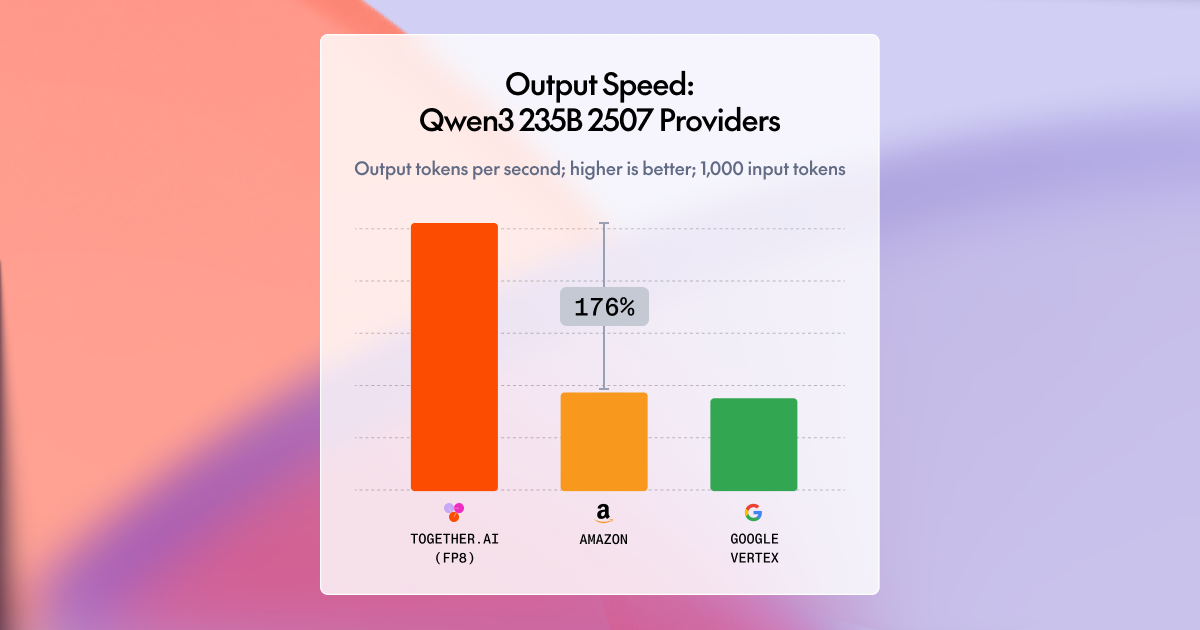

Together AI Achieves Fastest Inference for Open-Source Models

Together AI has reported up to 2x faster inference for popular open-source models such as Qwen, DeepSeek, and Kimi, utilizing GPU optimization and advanced techniques. They ranked #1 in speed benchmarks on NVIDIA Blackwell architecture.

Together AI Blog

Models & Labs: other

Run Isaac 0.1 on Replicate

Isaac 0.1 is a lightweight, grounded vision-language model designed for real-world perception, now available on Replicate.

Replicate Blog

© Replicate Blog

© Replicate BlogModels & Labsimage

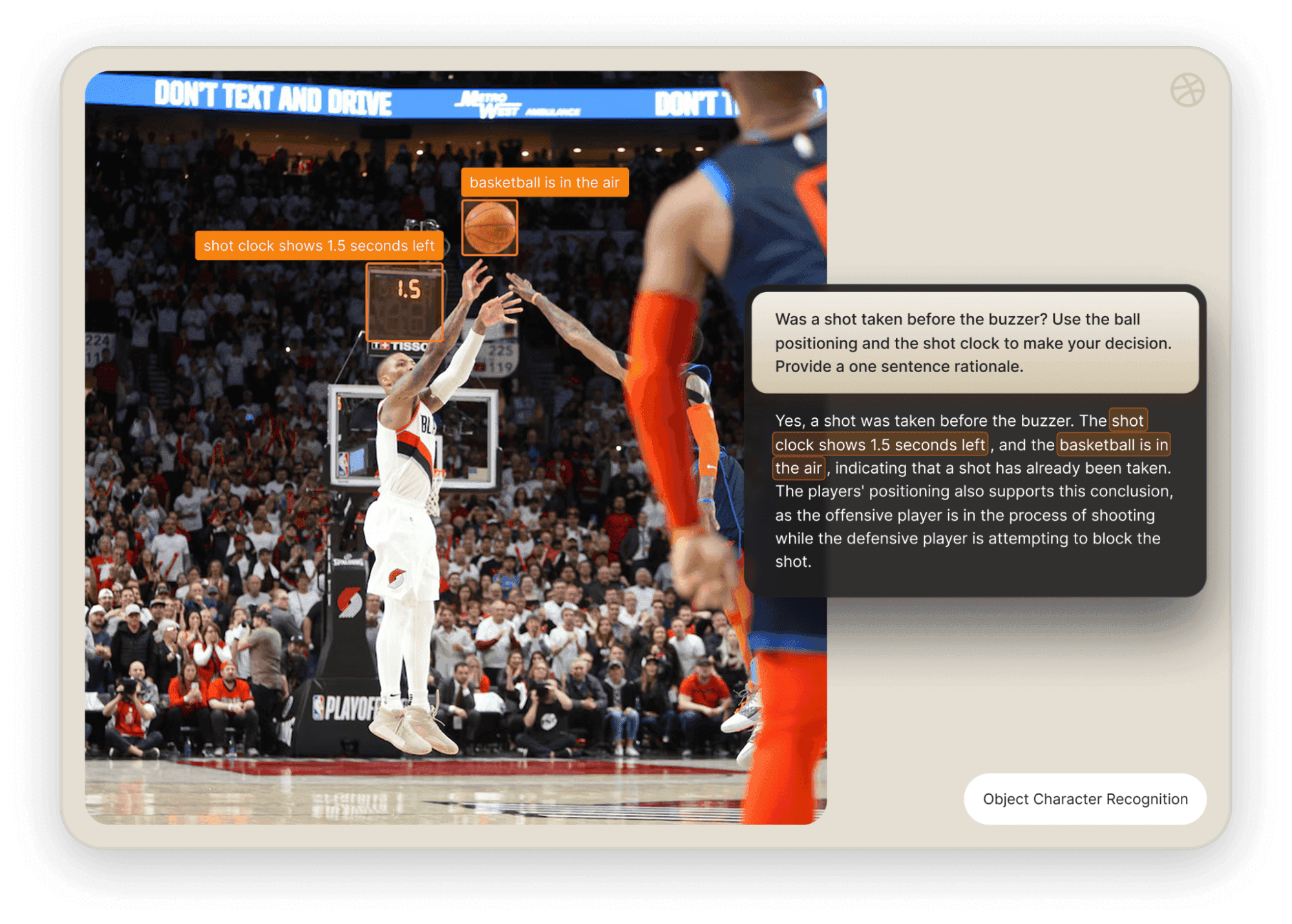

Run FLUX.2 on Replicate

FLUX.2 offers advanced image generation and editing capabilities with high detail and multi-reference support, now available on Replicate.

Replicate Blog

Models & Labs: image

FLUX.2 Launches for Multi-Reference Image Generation

Together AI has launched FLUX.2, a tool for production-grade image generation that ensures multi-reference consistency, accurate brand colors, and reliable text rendering.

Together AI Blog

Models & Labs: models

AI Model Predicts Port Availability for EVs

A new AI model has been developed to predict port availability, aiming to reduce range anxiety for electric vehicle (EV) users. This model could help optimize charging infrastructure and improve user experience.

Google Research Blog

Models & Labs: image

Prompting Techniques for Nano Banana Pro

The Replicate Blog discusses effective prompting strategies for the Nano Banana Pro, which enhances image generation and editing capabilities.

Replicate Blog

Models & Labs: models

Real-time speech-to-speech translation developed

Google Research has announced advancements in real-time speech-to-speech translation technology. This development aims to enhance communication across language barriers.

Google Research Blog

Models & Labs: image

Retro Diffusion's Pixel Art Models on Replicate

Retro Diffusion has released a suite of models for generating game assets, sprites, tiles, and pixel art on the Replicate platform.

Replicate Blog

Models & Labs: models

Differentially Private ML with JAX-Privacy Released

Google Research has introduced JAX-Privacy, a framework for implementing differentially private machine learning at scale. This tool aims to enhance privacy in machine learning applications.

Google Research Blog

Models & Labs: other

OpenAI Launches gpt-oss-safeguard Models

Ollama is collaborating with OpenAI and ROOST to introduce gpt-oss-safeguard reasoning models for safety classification tasks, available in two sizes and under an Apache 2.0 license.

Ollama Blog

Models & Labs: coding

MiniMax M2 Released on Ollama Cloud

MiniMax M2 is now available on Ollama's cloud, designed for coding and agentic workflows.

Ollama Blog

Models & Labs: other

NVIDIA DGX Spark Performance Tested

Performance tests were conducted on the NVIDIA DGX Spark using release day firmware and an updated version of Ollama. The results provide insights into the performance capabilities of the system.

Ollama Blog

Models & Labs: other

Datalab Introduces OCR Models for Document Processing

Datalab has released two new models that allow users to extract text from documents and images, converting them into markdown or capturing line-level polygons.

Replicate Blog

Models & Labsimage

Together AI Adds 40+ New Image and Video Models

Together AI has expanded its model library by adding over 40 new image and video models, including Sora 2 and Veo 3, aimed at facilitating the development of multimodal applications with OpenAI-compatible APIs.

Together AI Blog

Models & Labsmodels

Hierarchical Generation of Synthetic Photo Albums

Google Research has introduced a method for generating coherent synthetic photo albums using hierarchical generation techniques. This approach aims to enhance the quality and relevance of generated images in a structured format.

Google Research Blog

Models & Labsmodels

Google Introduces Coral NPU for Edge AI

Google has launched the Coral NPU, a full-stack platform designed for Edge AI applications. This platform aims to enhance the deployment of AI models on edge devices.

Google Research Blog

Models & Labsmodels

Ollama Supports Alibaba's Qwen3-VL

Ollama has announced support for Alibaba's Qwen3-VL model.

Ollama Blog

Models & Labsother

NVIDIA DGX Spark Launch Announced

NVIDIA has launched the DGX Spark, and Ollama has partnered with NVIDIA so that Ollama runs efficiently on the system.

Ollama Blog

Models & Labsresearch

New Runtime-Learning Accelerator for LLM Inference

The AdapTive-LeArning Speculator System (ATLAS) speeds up LLM inference by adapting to workloads at runtime. It reaches 500 tokens per second (TPS) on DeepSeek-V3.1, a 4x improvement over the baseline, with no manual tuning.

Together AI Blog

Models & Labsimage

Collaborative Image Generation Approach Introduced

Google Research has unveiled a new collaborative approach to image generation using generative AI techniques. This method aims to enhance the quality and creativity of generated images.

Google Research Blog

Models & Labsmodels

IBM's Granite 4.0 Released on Replicate

IBM has launched Granite 4.0, now available on the Replicate platform, enhancing its capabilities for AI model deployment.

Replicate Blog

Models & Labsother

Ollama Enhances Model Scheduling System

Ollama has introduced an improved model scheduling system that aims to reduce crashes caused by out-of-memory errors and to improve GPU utilization and performance, particularly on multi-GPU setups.

Ollama Blog

Models & Labsimage

Comparison of Latest Image Editing Models

The Replicate Blog provides a comprehensive comparison of various image editing models currently available.

Replicate Blog

Models & Labsother

Cloud Models Now in Preview

Ollama has announced the preview of cloud models, enabling users to run larger models on datacenter-grade hardware while still utilizing local tools.

Ollama Blog

Models & Labsother

New Search API Launched by Replicate

Replicate has introduced a new search API that allows users to find models and collections with a single API call.

Replicate Blog

Models & Labsmodels

VaultGemma: New Differentially Private LLM Released

Google Research has introduced VaultGemma, a new large language model that emphasizes differential privacy. This model aims to enhance user data protection while maintaining generative capabilities.

Google Research Blog

Models & Labsmodels

Together AI Expands Fine-Tuning Platform Features

Together AI has upgraded its Fine-Tuning Platform to support training models with more than 100 billion parameters, longer context lengths, tighter integration with the Hugging Face Hub, and new DPO options.

Together AI Blog

Models & Labsother

Together AI Launches Instant Clusters for GPU Access

Together AI has announced the general availability of Instant Clusters, which provide self-service access to NVIDIA H100/B200 GPUs for training or inference. These clusters can be set up in minutes.

Together AI Blog

Models & Labsother

DeepSeek-V3.1 Model Released on Together AI

DeepSeek-V3.1, a hybrid model with thinking and non-thinking modes, is now available on Together AI with serverless deployment. The model scores 66% on SWE-bench Verified.

Together AI Blog

Models & Labsother

Fine-Tune OpenAI Models with Together AI

Together AI offers fine-tuning services for OpenAI's gpt-oss models, enabling users to create domain-specific experts efficiently. This service emphasizes enterprise reliability and cost-effectiveness.

Together AI Blog

Models & Labsresearch

Open-Source LLMs Outperform Closed-Source Models

A 27B open-source model was fine-tuned to outperform Claude Sonnet 4 by 60% on a healthcare task, demonstrating significant cost efficiency. This achievement highlights the potential of smaller models in specialized applications.

Together AI Blog

Models & Labsmodels

New Conditional Generator for Data Synthesis

Google Research has introduced a conditional generator aimed at improving data synthesis beyond the limitations of billion-parameter models. This development could enhance the efficiency and effectiveness of generative AI applications.

Google Research Blog

Models & Labsother

OpenAI's New Open gpt-oss Models vs o4-mini

The article compares OpenAI's new open-source gpt-oss models with the o4-mini model, evaluating their performance in real-world applications.

Together AI Blog

Models & Labsother

Replicate Launches Remote MCP Server

Replicate has announced a new remote MCP server that allows users to discover, compare, and run models from various applications including Claude, Cursor, and VS Code.

Replicate Blog

Models & Labsother

Ollama Partners with OpenAI for gpt-oss

Ollama has announced a partnership with OpenAI to introduce gpt-oss to its community.

Ollama Blog

Models & Labsmodels

OpenAI's Models Now Available on Together AI

Together AI has announced the availability of OpenAI's gpt-oss-120B model, which features open weights and serverless endpoints with specific pricing. The model is licensed under Apache-2.0.

Together AI Blog

Models & Labsmodels

Google Introduces SensorLM for Wearable Sensors

Google Research has unveiled SensorLM, a generative AI model designed to understand and process data from wearable sensors. This model aims to enhance the interaction between users and wearable technology.

Google Research Blog

Models & Labsother

Together Evaluations Framework for Benchmarking LLMs

Together Evaluations is a new framework designed for benchmarking large language models (LLMs) using open-source models as judges, allowing for customizable insights into model quality without manual labeling.

Together AI Blog

Models & Labscoding

Qwen3-Coder Launches on Together AI

Together AI has released Qwen3-Coder, a coding model with a 256K context and capabilities rivaling Claude Sonnet 4, allowing for zero-setup instant deployment.

Together AI Blog

Models & Labsimage

Comparing Image Models for Character Consistency

The article reviews various image models that generate consistent characters based on a single reference image. It highlights the strengths and weaknesses of each model in this context.

Replicate Blog

Models & Labsimage

Bria Launches Image Tools on Replicate

Bria has partnered with Replicate to offer commercial-grade image generation and editing models. These tools are built on licensed data and aim to support enterprises and developers in using visual AI safely.

Replicate Blog

Models & Labsother

Together AI Achieves Fast Inference with DeepSeek-R1

Together AI has launched a new inference engine optimized for NVIDIA HGX B200, enhancing the performance of open-source reasoning models like DeepSeek-R1. This positions Together AI among the fastest platforms for such models.

Together AI Blog

Models & Labsother

Optimization of FLUX.1 Kontext Explained

The Replicate Blog provides an in-depth analysis of TaylorSeer, the optimization technique used to speed up FLUX.1 Kontext.

Replicate Blog

Models & Labsagents

Kimi K2 Open-Source Model Released

The Kimi K2 model, featuring 1 trillion parameters, is now available on Together AI, offering capabilities for agentic reasoning and coding with serverless deployment options.

Together AI Blog

Models & Labsother

Together AI Launches Speech-to-Text APIs

Together AI has introduced high-performance Whisper APIs for speech-to-text conversion. This launch aims to enhance accessibility and usability in various applications.

Together AI Blog

Models & Labsother

Together AI Launches Batch API for LLM Requests

Together AI has introduced a Batch API that lets users process thousands of large language model (LLM) requests at a 50% lower cost. It is aimed at users who handle high volumes of LLM requests.

Together AI Blog

Models & Labsother

Tips for Google Veo 3 Model

The Replicate Blog shares experiments and tips on using Google's new Veo 3 model.

Replicate Blog

© Together AI Blog

© Together AI BlogModels & Labsresearch

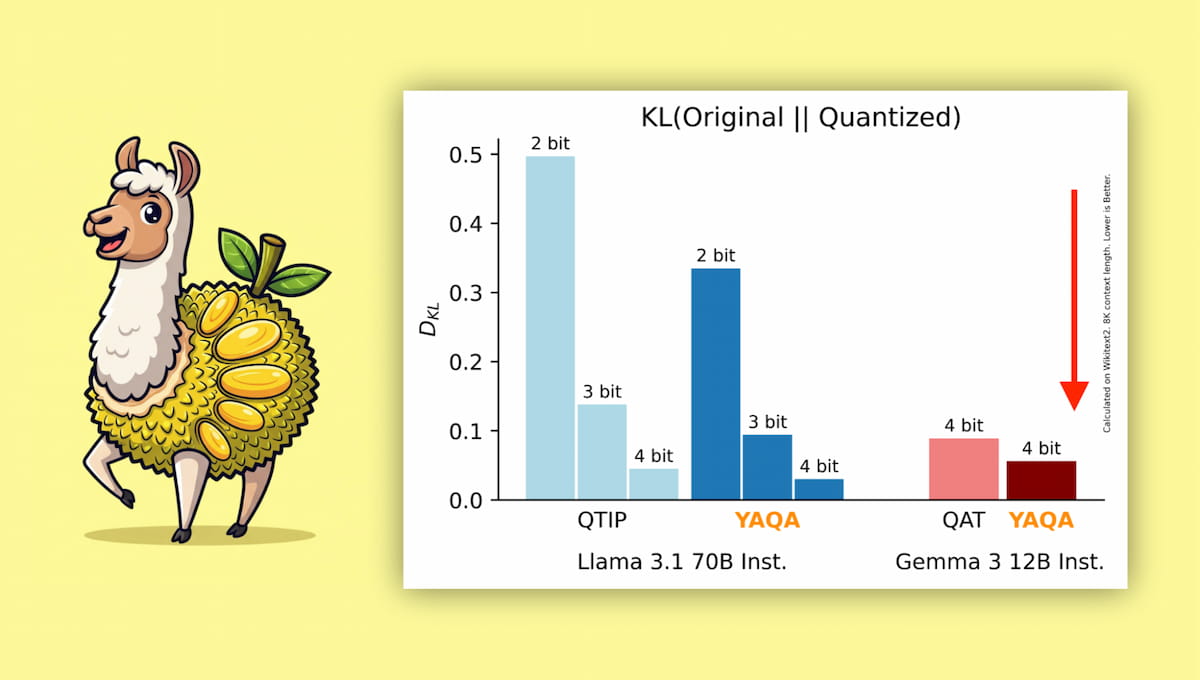

Model-Preserving Adaptive Rounding Introduced

Together AI has announced a new technique called Model-Preserving Adaptive Rounding (YAQA) aimed at improving model performance during quantization. This method seeks to maintain the integrity of machine learning models while reducing their size.

Together AI Blog

Models & Labsother

Ollama Introduces Thinking Toggle Feature

Ollama has launched a new feature that allows users to enable or disable the model's thinking behavior, providing flexibility for various applications. This update aims to enhance user control over the model's performance.

Ollama Blog

Models & Labsimage

FLUX.1 Kontext for Image Editing Released

Black Forest Labs has launched FLUX.1 Kontext, a new model for image editing that utilizes text prompts. The blog provides guidance on how to effectively use this model.

Replicate Blog

Models & Labsimage

FLUX.1 Kontext models announced

Together AI has introduced FLUX.1 Kontext models, which focus on character consistency and precise image editing without the need for fine-tuning. This development aims to enhance the capabilities of image generation tools.

Together AI Blog

Models & Labsimage

Google's Imagen 4 Now Available on Replicate

Google's Imagen 4 image generation model can now be accessed on Replicate, allowing users to create detailed images with various styles and enhanced typography.

Replicate Blog

Models & Labsother

OpenAI Models Now Available on Replicate

OpenAI has made its latest models, including GPT-4.1, GPT-4o, and the o-series, accessible on the Replicate platform.

Replicate Blog

Models & Labsother

NVIDIA H100 GPUs Released

NVIDIA H100 GPUs are now available on Replicate, offering improved performance at a lower cost.

Replicate Blog

Models & Labsother

Ollama Introduces New Multimodal Models Engine

Ollama has launched a new engine that supports multimodal models, enhancing its capabilities. This development allows for the integration of various data types in AI applications.

Ollama Blog

Models & Labsother

Replicate Partners with Hugging Face for Inference

Replicate has announced a partnership with Hugging Face to enable the running of over 30,000 LoRAs on their platform.

Replicate Blog

© Together AI Blog

© Together AI BlogModels & Labsresearch

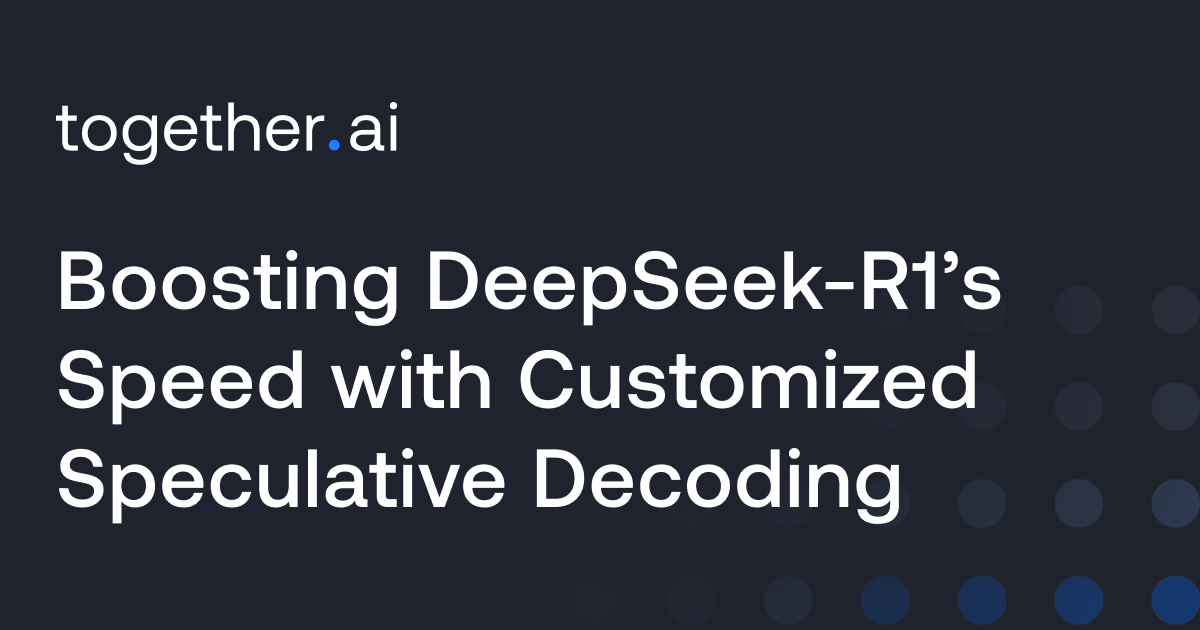

Boosting DeepSeek-R1’s Speed with Customized Decoding

Together AI has announced improvements to the DeepSeek-R1 model by implementing customized speculative decoding techniques to enhance its processing speed.

Together AI Blog

Models & Labsimage

Ideogram 3.0 Released on Replicate

Ideogram 3.0 introduces enhanced design, style transfer, and realism features. It is now available on the Replicate platform.

Replicate Blog

Models & Labsmusic

Run MiniMax Speech-02 models with an API

MiniMax has released Speech-02 models that offer high-quality text-to-speech capabilities, including voice cloning, emotional expression, and multilingual support via an API.

Replicate Blog

Models & Labsother

Arcee AI's Journey to Inference Flexibility

Arcee AI has transitioned from AWS to Together Dedicated Endpoints to enhance its inference capabilities. This move aims to provide greater flexibility in AI model deployment.

Together AI Blog

Models & Labsother

Salesforce, Zoom, InVideo Enhance Training with NVIDIA

Together AI has announced that Salesforce, Zoom, and InVideo are utilizing their Turbocharged platform powered by NVIDIA's Blackwell architecture for faster training processes. This collaboration aims to improve the efficiency of AI model training for these companies.

Together AI Blog

Models & Labsother

Together Fine-Tuning Platform Enhancements Announced

Together AI has updated its fine-tuning platform to include preference optimization and continued training features. These enhancements aim to improve the customization and performance of AI models.

Together AI Blog

Models & Labsresearch

Together AI Introduces Direct Preference Optimization

Together AI has announced support for Direct Preference Optimization (DPO) fine-tuning, which aligns language models with human preferences, accompanied by code examples and technical details.

Together AI Blog

Models & Labsresearch

Fine-tuning Techniques for LLMs Explored

Together AI discusses the process of fine-tuning large language models (LLMs) using checkpoints, providing insights on iterative fine-tuning methods.

Together AI Blog

Models & Labscoding

DeepCoder: Open-Source 14B Coder Released

Together AI has announced the release of DeepCoder, a fully open-source coding model with 14 billion parameters, designed to perform at the level of o3-mini. This model aims to enhance code generation capabilities for developers.

Together AI Blog

Models & Labsother

Together AI partners with Meta for Llama 4

Together AI has announced a partnership with Meta to offer Llama 4, a state-of-the-art multimodal mixture-of-experts (MoE) model.

Together AI Blog

Models & Labsother

Dippy AI Achieves 4 Million Tokens/Minute

Dippy AI has scaled its processing capabilities to handle over 4 million tokens per minute using Together's dedicated endpoints. This enhancement aims to improve the performance of AI companions.

Together AI Blog

Models & Labsother

Together AI Showcases Innovations at GTC

Together AI presented new capabilities including NVIDIA Blackwell GPUs and instant GPU clusters aimed at enhancing AI development. They also introduced a full-stack solution for AI innovation.

Together AI Blog

Models & Labsother

NVIDIA NIM Accelerates AI Model Deployment

Together AI has announced the integration of NVIDIA's NIM to enhance the deployment of leading AI models. This collaboration aims to streamline the process for developers and researchers.

Together AI Blog

Models & Labsvideo

Exploring Alibaba's WAN2.1 Text-to-Video Model

The Replicate Blog discusses experiments with Alibaba's WAN2.1 text-to-video model, focusing on the effects of parameter adjustments. The article aims to uncover insights from these parameter tweaks.

Replicate Blog

Models & Labsother

Minions: Local and Cloud LLMs Collaboration

Researchers from Stanford have developed a method to shift LLM workloads to consumer devices by enabling small on-device models to collaborate with larger cloud models.

Ollama Blog

Models & Labsvideo

AI Video Models Reach New Quality Benchmark

Recent developments indicate that several AI video models have achieved quality levels comparable to OpenAI's Sora. This suggests a significant advancement in the capabilities of AI video generation.

Replicate Blog

Models & Labsimage

New FLUX.1 Tools Enhance Image Generation

Replicate has introduced a new set of image generation capabilities for FLUX models, which includes features like inpainting, outpainting, canny edge detection, and depth maps.

Replicate Blog

Models & Labsother

NVIDIA L40S GPUs Released

NVIDIA L40S GPUs are now available on Replicate, offering improved performance at a lower cost.

Replicate Blog

Models & Labsmodels

Llama 3.2 Vision Models Released

Ollama has announced the availability of Llama 3.2 Vision models, specifically the 11B and 90B versions.

Ollama Blog

Models & Labsimage

Ideogram v2 Inpainting Model on Replicate API

Replicate has partnered with Ideogram to integrate their new inpainting model into its API.

Replicate Blog

Models & Labsimage

Stable Diffusion 3.5 Released

Stability AI has launched its latest text-to-image model, Stable Diffusion 3.5, which is now accessible via an API on Replicate.

Replicate Blog

Models & Labsmodels

IBM Granite 3.0 models launched with Ollama

Ollama has partnered with IBM to introduce Granite 3.0 models to its platform.

Ollama Blog

Models & Labsmodels

GPT-NeoX Supports Post-Training with SynthLabs

GPT-NeoX has introduced support for post-training techniques, specifically RLHF and RLAIF, through a collaboration with SynthLabs.

EleutherAI Blog

Models & Labsimage

FLUX1.1 Image Generation Model Released

Black Forest Labs has announced the release of FLUX1.1, their latest image generation model.

Replicate Blog

Models & Labsmodels

Llama 3.2 Launches with Ollama Partnership

Ollama has partnered with Meta to bring Llama 3.2 to its platform. The release adds smaller models and multimodal models.

Ollama Blog

Models & Labsother

Improving Flux Fine-tunes with Synthetic Data

The blog post discusses techniques for enhancing the performance of fine-tuned Flux models using synthetic training data. It emphasizes the importance of additional work to achieve optimal results.

Replicate Blog

Models & Labsresearch

Guide to Maximal Update Parameterization Released

EleutherAI has published a guide to implementing muTransfer, which relies on maximal update parameterization.

EleutherAI Blog

Models & Labsother

Bespoke-Minicheck Reduces AI Hallucinations

Bespoke-Minicheck is a new model from Bespoke Labs designed to fact-check responses from other AI models, aiming to reduce hallucinations. It is now available through Ollama.

Ollama Blog

Models & Labsother

Fine-tune FLUX.1 with an API

Replicate has announced the ability to create and run fine-tuned Flux models programmatically using their HTTP API.

Replicate Blog

Models & Labsimage

Fine-tune FLUX.1 for personalized image generation

Users can create a fine-tuned version of the FLUX.1 model to generate images of themselves. This process allows for personalized image creation using AI.

Replicate Blog

Models & Labsimage

Fine-tune FLUX.1 with your own images

Replicate has introduced fine-tuning support for the FLUX.1 image generation models, allowing users to train the model on their own images with a single line of code via its API.

Replicate Blog

Models & Labsimage

Exploring FLUX.1's Unique Strengths

The Replicate Blog provides an overview of FLUX.1, highlighting its strengths and aesthetic capabilities in generation tasks.

Replicate Blog

Models & Labsimage

New FLUX Model Released with API Access

FLUX.1 is a new text-to-image model from Black Forest Labs, surpassing previous open-source models and now available via an API.

Replicate Blog

Models & Labsother

New Language Model and Safety Classifiers Released

Replicate has announced a new language model along with safety classifiers and a model search API.

Replicate Blog

Models & Labsother

Ollama Adds Tool Calling Support

Ollama has introduced tool calling support for models like Llama 3.1, allowing them to utilize external tools to respond to prompts. This enhancement enables models to perform more complex tasks and interact with external systems.

Ollama Blog

Models & Labsmodels

Run Meta Llama 3.1 405B with API

Meta has released Llama 3.1 405B, its most powerful open-source language model. The post explains how to run it in the cloud with a single line of code.

Replicate Blog

Models & Labsother

Google's Gemma2 Models and Stable Diffusion 3 Tips

The Replicate Blog discusses Google's Gemma2 models and provides insights into the language model leaderboard, along with tips for using Stable Diffusion 3.

Replicate Blog

Models & Labsmodels

Google Gemma 2 Released in Three Sizes

Google has released Gemma 2 on Ollama, available in sizes of 2B, 9B, and 27B parameters.

Ollama Blog

Models & Labsimage

Custom Stable Diffusion 3 Now Available

Users can create and run custom versions of Stability's latest image generation model, Stable Diffusion 3, on Replicate through web or API access.

Replicate Blog

Models & Labsother

Replicate Intelligence #4 Insights

The latest Replicate blog discusses concepts in GPT models, introduces real-time speech-to-text capabilities in the browser, and announces the upcoming availability of H100 GPUs.

Replicate Blog

Models & Labsother

NVIDIA H100 GPUs Coming to Replicate

Replicate will soon support NVIDIA's H100 GPUs for predictions and training, with early access available upon request.

Replicate Blog

Models & Labsimage

Run Stable Diffusion 3 with an API

Stable Diffusion 3, the latest text-to-image model from Stability, offers enhanced image quality and efficiency. Users can run it in the cloud with a single line of code.

Replicate Blog

Models & Labsimage

Replicate Intelligence #3 Released

The latest edition of Replicate Intelligence features Garden State Llama, a guide on applied LLMs, and advancements in real-time image generation.

Replicate Blog

Models & Labsimage

Replicate Intelligence #2 Released

The latest Replicate Intelligence update features faster image generation, an AI-powered world simulator, and insights into AI dataset complexity.

Replicate Blog

Models & Labsother

Run Snowflake Arctic with an API

Snowflake has released Arctic, a new open-source language model, which can be run in the cloud with a single line of code.

Replicate Blog

Models & Labsmodels

Llama 3 Shows Reduced Censorship Compared to Llama 2

Meta's Llama 3 model has significantly lowered false refusal rates, refusing fewer than one-third of the prompts that Llama 2 would have refused. This indicates a shift towards less censorship in the model's responses.

Ollama Blog

Models & Labsmodels

Llama 3 Now Available on Ollama

Llama 3, the next generation of Meta's large language model, is now available for use on Ollama. It is described as the most capable openly available LLM to date.

Ollama Blog

Models & Labscoding

Run Meta Llama 3 with an API

Meta has released Llama 3, its latest language model. The post explains how to run it in the cloud with a single line of code.

Replicate Blog

Models & Labsother

Ollama Introduces Embedding Models for RAG Applications

Ollama has launched embedding models that facilitate the generation of vector embeddings for search and retrieval augmented generation applications.

Ollama Blog

Models & Labsother

Ollama adds support for AMD graphics cards

Ollama has announced preview support for AMD graphics cards on Windows and Linux, allowing users to accelerate all features of the platform using these GPUs.

Ollama Blog

Models & Labsother

New Foundation Model Development Cheatsheet Released

EleutherAI has announced the release of the FM Dev Cheatsheet, a new resource for foundation model development.

EleutherAI Blog

Models & Labsother

Ollama Launches Windows Preview for Language Models

Ollama is now available on Windows in preview, allowing users to pull, run, and create large language models with built-in GPU acceleration and access to a full model library.

Ollama Blog

Models & Labsother

New Vision Models Released: LLaVA 1.6

Ollama has announced the release of new vision models, LLaVA 1.6, available in 7B, 13B, and 34B parameter sizes, featuring enhancements in image resolution, text recognition, and logical reasoning capabilities.

Ollama Blog

Models & Labscoding

Running Yi chat models via API

The Yi series models, developed by 01.AI, are large language models that can be run in the cloud with a simple API call. The blog provides a guide on how to implement this.

Replicate Blog

Models & Labswriting

Open-source models outperform OpenAI in text embeddings

An interactive example demonstrates how to use an open-source embedding model that offers better price and performance compared to OpenAI's embeddings API.

Replicate Blog

Models & Labsmusic

MusicGen-Chord Enhances Music Generation Capabilities

Meta's MusicGen model now includes chord conditioning, allowing users to generate backing tracks based on text prompts and chord progressions.

Replicate Blog

Models & Labsimage

Generate Images in One Second on Mac

A guide on running a latent consistency model on M1 or M2 Macs to generate images quickly.

Replicate Blog

Models & Labsresearch

Llemma: New Open Language Model for Mathematics

EleutherAI has released Llemma, a new family of language models for mathematics available in 7-billion- and 34-billion-parameter versions, trained on a large dataset of mathematical documents. The models demonstrate enhanced mathematical capabilities and can be fine-tuned for various tasks.

EleutherAI Blog

Models & Labsmusic

Fine-tune MusicGen for custom music generation

Replicate has introduced fine-tuning support for MusicGen, allowing users to train its small, medium, and melody models on their own audio files.

Replicate Blog

Models & Labsother

Using Llama 2 for Information Extraction

The article discusses how to utilize Llama 2 models in conjunction with grammars for tasks related to information extraction.

Replicate Blog

Models & Labscoding

Running Mistral 7B with an API

Mistral 7B is an open-source large language model, and the blog provides instructions on how to run it in the cloud with a simple command.

Replicate Blog

Models & Labsmodels

Fine-tuned models boot in under one second

Replicate Blog reports significant improvements in the cold boot time for fine-tuned models, now achieving boot times of less than one second.

Replicate Blog

Models & Labsother

Guide to Prompting Llama 2 Released

A new guide explains how to prompt Llama 2 effectively, with the aim of helping users get better results from the model.

Replicate Blog

Models & Labsimage

Fine-tune SDXL with your own images

Replicate has introduced fine-tuning support for SDXL 1.0, allowing users to train the model on their own images using a simple command via the Replicate API.

Replicate Blog

Models & Labsother

Run Llama 2 Uncensored Locally

The blog post discusses comparisons between the uncensored and censored versions of the Llama 2 model when run locally.

Ollama Blog

Models & Labscoding

Run Llama 2 with an API

Llama 2, an open-source language model, can now be run in the cloud using a simple API call. This development positions Llama 2 as a competitive alternative to OpenAI's models.

Replicate Blog

Models & Labsother

Fine-tune Llama 2 on Replicate

Replicate has announced the ability to fine-tune the Llama 2 model on their platform, providing users with tools to customize the model for specific tasks.

Replicate Blog

Models & Labsmodels

Recent Developments in Llama 2

The article provides a roundup of updates related to Meta's open-source large language model, Llama 2, following its second major release.

Replicate Blog

Models & Labswriting

Improving Language Models for Poetry

The article discusses alternative methods to enhance the poetic capabilities of large language models beyond prompt engineering and training.

Replicate Blog

Models & Labsother

Safetensors Library Audited for Security

The safetensors library has passed an external security audit, paving the way for it to become the default format for saved models on Hugging Face. This audit was conducted by Trail of Bits in collaboration with EleutherAI and Stability AI.

EleutherAI Blog

Models & Labsother

Language Model Developments Roundup

The article provides an overview of recent advancements in open-source language models as of April 2023.

Replicate Blog

Models & Labsmodels

Language Models Now Available on Replicate

Replicate has announced the availability of various language models on its platform, allowing users to access and utilize these models for different applications.

Replicate Blog

© Replicate Blog

Models & Labs · coding

Using Alpaca-LoRA for Model Fine-Tuning

The article is a guide to using Alpaca-LoRA to fine-tune a ChatGPT-style model. It outlines the steps and considerations involved in the fine-tuning process.

Replicate Blog

© Replicate Blog

Models & Labs · models

Week 3 of LLaMA Developments

The Replicate Blog rounds up the third week of developments around LLaMA, Meta's large language model, with updates and insights from across the llamaverse.

Replicate Blog

© Replicate Blog

Models & Labs · writing

Fine-tune LLaMA for Homer Simpson's voice

A new method allows users to fine-tune the LLaMA model to generate text in the voice of Homer Simpson using minimal data and training time. This technique demonstrates the flexibility of LLaMA in adapting to specific styles.

Replicate Blog

© Replicate Blog

Models & Labs · other

Train Stanford Alpaca Locally

The article provides a guide on how to train and run Alpaca, a fine-tuned version of the LLaMA model, on personal machines. This model is designed to respond to instructions similarly to ChatGPT.

Replicate Blog

© Replicate Blog

Models & Labs · image

Introducing LoRA for Stable Diffusion Fine-Tuning

LoRA is a new method for fine-tuning Stable Diffusion models more quickly than traditional methods like DreamBooth, and it can be run in the cloud on Replicate.

Replicate Blog

© Hugging Face Blog

Models & Labs · other

Hugging Face Launches Model Card Creation Tool

Hugging Face has released a new tool for creating model cards, along with an updated template and guide. The initiative aims to make machine learning documentation more accessible.

Hugging Face Blog

© Replicate Blog

Models & Labs · image

Train DreamBooth Model on Replicate Easily

Users can train a DreamBooth model using a few images and a single API call, then deploy it on Replicate for cloud predictions.

Replicate Blog

© Replicate Blog

Models & Labs · image

Automating Image Collection with CLIP and LAION-5B

The Replicate Blog describes a method for automating the collection of thousands of captioned images using CLIP and the LAION-5B dataset.

Replicate Blog

© Replicate Blog

Models & Labs · image

Exploring Text to Image Models

The article covers the fundamentals of using an API to generate images from text prompts.

Replicate Blog

© Replicate Blog

Models & Labs · other

New Template for Model READMEs Introduced

Replicate has launched new templates for documenting models, inspired by model cards. These templates aim to standardize the way models are presented on the platform.

Replicate Blog

© Replicate Blog

Models & Labs · image

Introduction to Constraining CLIPDraw

The article discusses differentiable programming and how it can be used to refine generative art models like CLIPDraw.

Replicate Blog

© EleutherAI Blog

Models & Labs · models

Announcing GPT-NeoX-20B Model Release

EleutherAI has announced the release of GPT-NeoX-20B, a 20-billion-parameter model developed in collaboration with CoreWeave.

EleutherAI Blog

© EleutherAI Blog

Models & Labs · other

EleutherAI Reflects on Its First Year

EleutherAI shares a retrospective on its first year, highlighting key developments and milestones achieved during this period.

EleutherAI Blog