Coding Tools

Latest AI signals in this category

New upscale shader added to ggml-webgpu

The ggml-webgpu backend has gained an upscale shader with multiple implementations. The release supports macOS, Linux, Android, and Windows.

llama.cpp Releases

Claude Code v2.1.118 Released

The latest update to Claude Code introduces several new features and fixes, including vim visual mode and enhanced theme management.

Claude Code Releases

Claude Code v2.1.119 Released with New Features

The latest release of Claude Code introduces several enhancements, including persistent config settings and support for multiple code review platforms.

Claude Code Releases

Claude Code v2.1.121 Released with New Features

The latest release of Claude Code introduces several enhancements, including an alwaysLoad option for server configuration and improved user interface features.

Claude Code Releases

Claude Code v2.1.122 Released

The latest update to Claude Code introduces several bug fixes and new features, including an environment variable for service tier selection and improved session management.

Claude Code Releases

Claude Code v2.1.123 Released

The latest release of Claude Code addresses an OAuth authentication issue. The update fixes a 401 retry loop problem when a specific environment variable is set.

Claude Code Releases

llama.cpp Releases Update for Multiple Platforms

The latest release of llama.cpp includes fixes and supports macOS, Linux, Android, and Windows.

llama.cpp Releases

llama.cpp Updates cpp-httplib to 0.43.2

The llama.cpp project has released an update that moves cpp-httplib to version 0.43.2, enhancing compatibility across platforms including macOS, Linux, Android, and Windows.

llama.cpp Releases

GitHub Copilot April 2026 Update for Visual Studio

The April 2026 update for Visual Studio introduces new features for GitHub Copilot, including cloud agent integration, custom agent support, and a new debugger agent for validating fixes. Users can now customize keyboard shortcuts and access a chat history panel for Copilot sessions.

GitHub Changelog

OpenAI Restricts Codex on Creature References

OpenAI has instructed its coding agent, Codex, to avoid mentioning creatures like goblins and trolls unless directly relevant. This directive aims to streamline interactions and improve focus on coding tasks.

WIRED AI

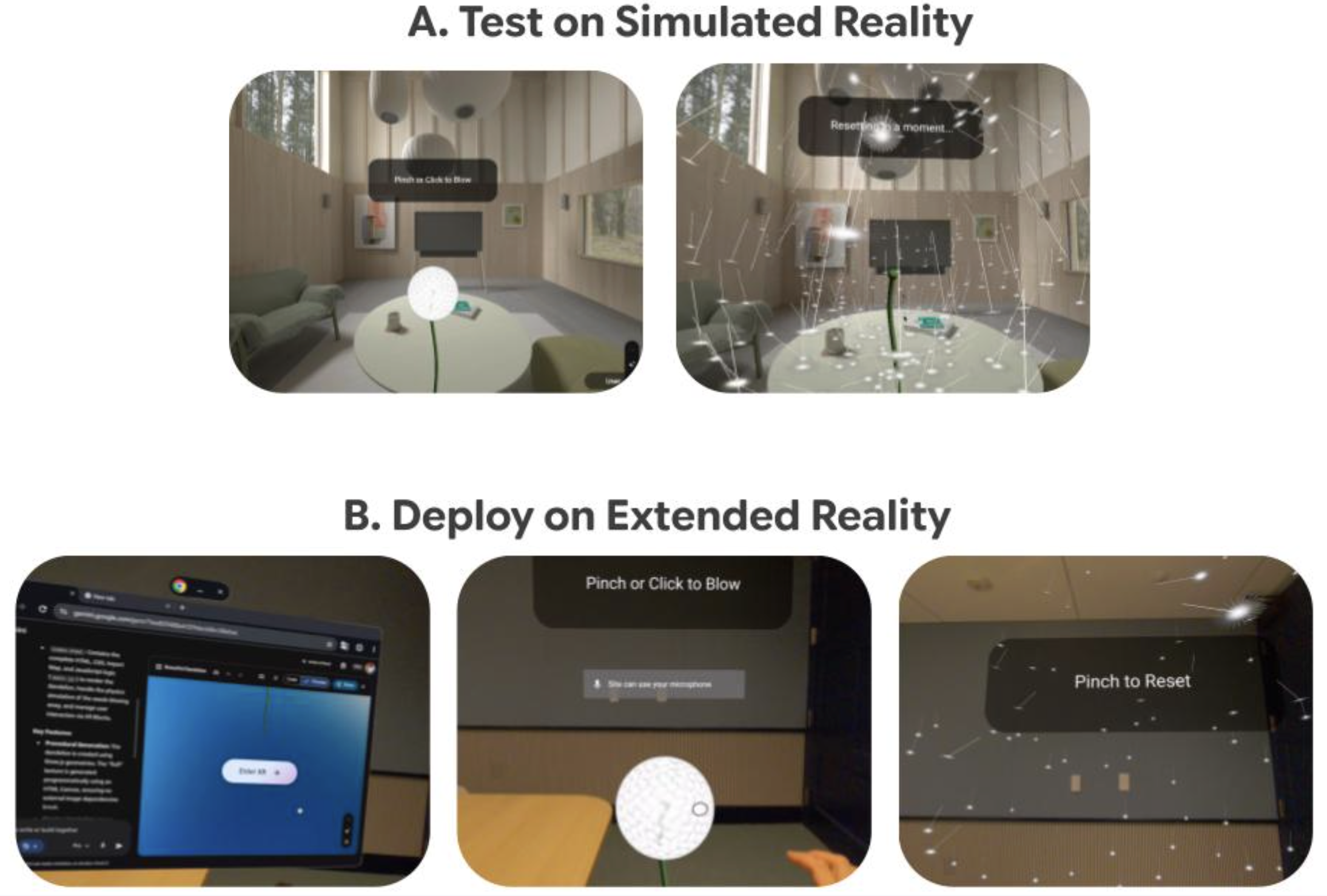

Vibe Coding XR Enhances AI and XR Prototyping

Google Research introduced Vibe Coding XR, a tool designed to accelerate the prototyping of AI and XR applications using XR Blocks and Gemini. This development aims to improve human-computer interaction and visualization in these fields.

Google Research Blog

Quick Setup for OpenClaw via Ollama Command

Users can set up OpenClaw in under two minutes using a single command from Ollama. This simplifies the installation process significantly.

Ollama Blog

Ollama Adds Subagents and Web Search to Claude Code

Ollama has introduced support for subagents and web search functionalities in Claude Code.

Ollama Blog

Ollama Launch Simplifies Coding Tool Setup

Ollama has introduced a new command called 'ollama launch' that allows users to set up and run coding tools such as Claude Code, OpenCode, and Codex without the need for environment variables or configuration files.

Ollama Blog

Goose offers free alternative to Claude Code

Claude Code, an AI coding tool by Anthropic, has subscription costs ranging from $20 to $200 per month, leading to dissatisfaction among developers. In contrast, Goose, an open-source AI agent by Block, provides similar functionalities for free, allowing users to run it locally without subscription fees.

VentureBeat AI

Ollama Adds Anthropic API Compatibility

Ollama has announced compatibility with the Anthropic Messages API, allowing users to utilize Claude Code with open models.

Ollama Blog

OpenAI Codex Integrates with Ollama Models

Ollama has announced that OpenAI's Codex CLI can now utilize open models, allowing it to read, modify, and execute code in users' working directories. This integration supports models like gpt-oss:20b and gpt-oss:120b.

Ollama Blog

Boris Cherny Reveals Claude Code Workflow

Boris Cherny, creator of Claude Code at Anthropic, shared his coding workflow on X, where it drew significant attention from the engineering community. His approach centers on running multiple AI agents in parallel.

VentureBeat AI

Together Python SDK v2.0 Released

Together AI has announced the release of version 2.0 of its Python SDK, which includes new features and improvements for developers. This update aims to enhance the integration and usability of AI tools within Python applications.

Together AI Blog

Running TorchForge in Together AI Cloud

The Together AI Blog provides a guide on executing TorchForge reinforcement learning pipelines within the Together AI Native Cloud environment.

Together AI Blog

New coding models & integrations released

Ollama has launched GLM-4.6 and Qwen3-coder-480B on its cloud service, along with an update to Qwen3-Coder-30B for improved tool calling. These models come with easy integrations to familiar development tools.

Ollama Blog

Ollama Launches New Web Search API

Ollama has introduced a new web search API with a free tier for individual users and higher rate limits available through its cloud service.

Ollama Blog

Improved Batch Inference API Released

Together AI has launched an enhanced Batch Inference API featuring a new UI, expanded model support, and rate limits raised to 30B tokens. The update aims to simplify large-scale AI workloads and reduce their cost.

Together AI Blog

Torch Compile Caching for Faster Inference

The Replicate Blog discusses a new feature that allows users to cache their compiled models, which can lead to improved boot and inference times.

Replicate Blog

DeepSWE: Open-sourced Coding Agent Development

Together AI has announced the development of DeepSWE, a fully open-sourced coding agent that utilizes reinforcement learning to enhance its capabilities. This project aims to provide a state-of-the-art tool for developers.

Together AI Blog

Ollama Introduces Streaming Responses with Tool Calling

Ollama has added support for streaming responses alongside tool calling, allowing chat applications to stream content and utilize tools in real time.

Ollama Blog
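
As a rough illustration of the streaming-with-tools item above, here is a minimal sketch of how a client might fold a streamed chat response into final text plus any tool calls. It assumes the stream arrives as newline-delimited JSON chunks, each carrying a `message` object with optional `content` and `tool_calls` fields and a `done` flag on the last chunk. The helper name `collect_stream` and the sample chunks are hypothetical, not taken from the blog post.

```python
import json
from typing import Iterable, List, Tuple


def collect_stream(ndjson_lines: Iterable[str]) -> Tuple[str, List[dict]]:
    """Accumulate streamed text and tool calls from NDJSON chat chunks.

    Assumed chunk shape (illustrative):
    {"message": {"content": "...", "tool_calls": [...]}, "done": false}
    """
    text_parts: List[str] = []
    tool_calls: List[dict] = []
    for line in ndjson_lines:
        if not line.strip():
            continue  # skip blank separator lines
        chunk = json.loads(line)
        message = chunk.get("message", {})
        text_parts.append(message.get("content", ""))
        # Tool calls can appear in any chunk, so collect them as they arrive.
        tool_calls.extend(message.get("tool_calls", []))
        if chunk.get("done"):
            break
    return "".join(text_parts), tool_calls


# Hypothetical sample stream, hand-written for this sketch.
SAMPLE_STREAM = [
    '{"message": {"role": "assistant", "content": "Checking"}, "done": false}',
    '{"message": {"role": "assistant", "content": " the weather."}, "done": false}',
    '{"message": {"role": "assistant", "content": "", "tool_calls": '
    '[{"function": {"name": "get_weather", "arguments": {"city": "Toronto"}}}]}, '
    '"done": false}',
    '{"message": {"role": "assistant", "content": ""}, "done": true}',
]
```

A real client would render each text fragment as it arrives rather than waiting for the final join; the accumulation here only makes the result easy to inspect.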

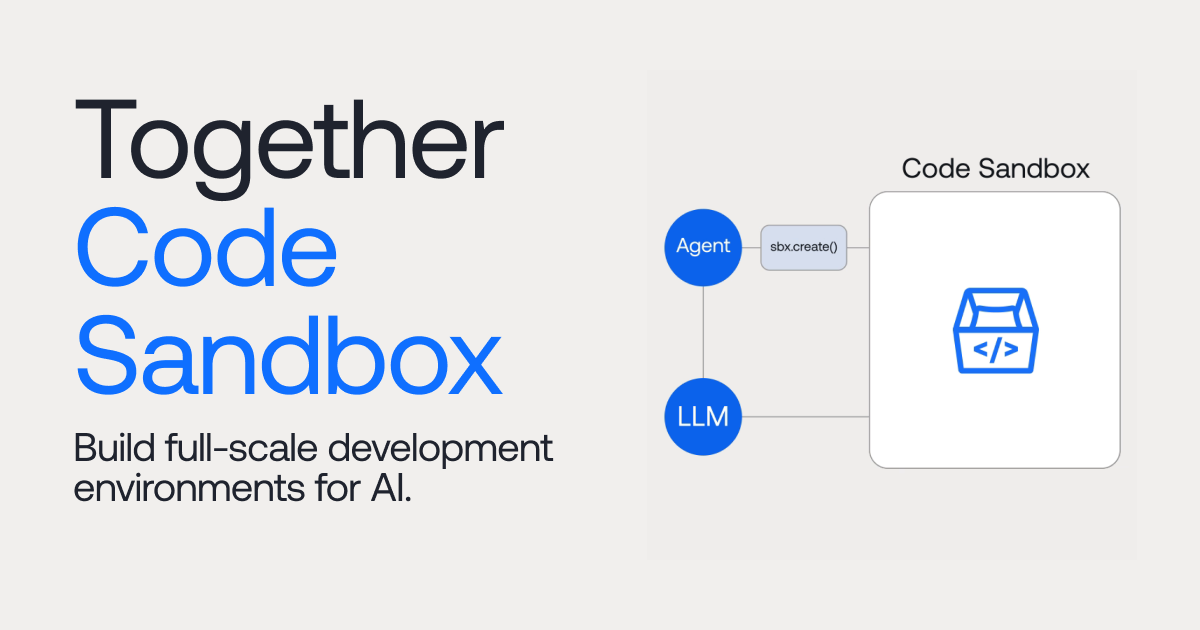

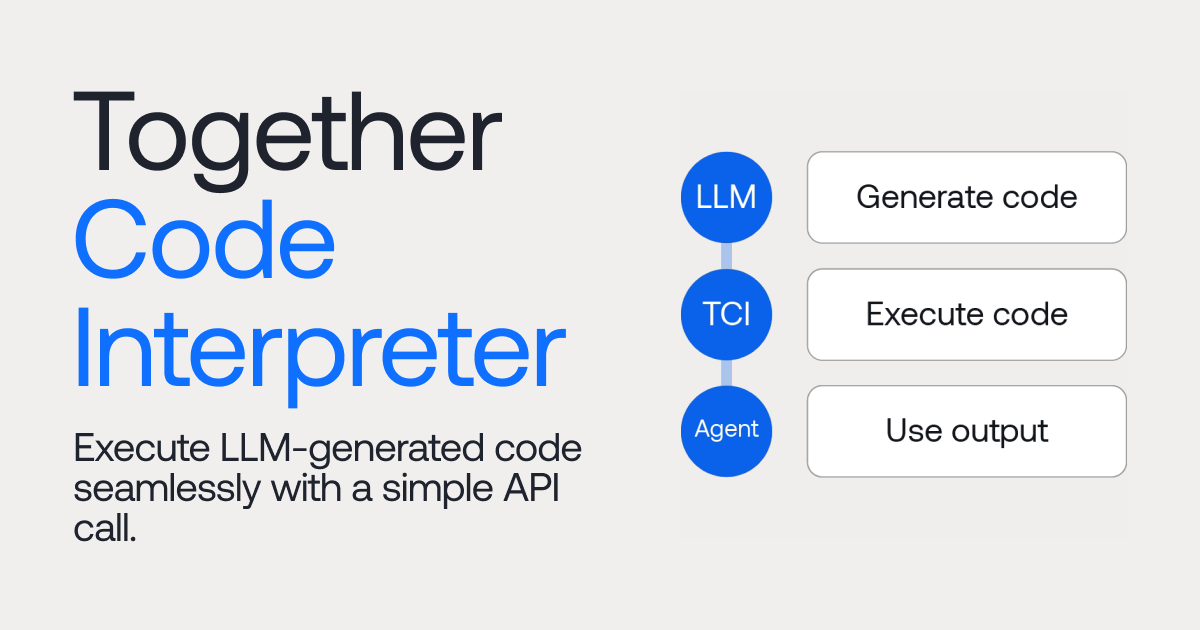

Together AI Launches Code Sandbox and Interpreter

Together AI has introduced two new tools: Together Code Sandbox and Together Code Interpreter, designed for state-of-the-art code execution in AI applications.

Together AI Blog

Together Code Sandbox Launches for AI Coding Products

Together AI has introduced the Together Code Sandbox, designed to provide robust infrastructure for developing AI coding products at scale.

Together AI Blog

Together Code Interpreter Launches API for LLM Code Execution

Together AI has introduced a Code Interpreter that allows users to execute code generated by large language models (LLMs) through a simple API call. This tool aims to streamline the process of running LLM-generated code.

Together AI Blog

Ollama Introduces Structured Outputs Support

Ollama has updated its Python and JavaScript libraries to support structured outputs, allowing model outputs to conform to a defined JSON schema.

Ollama Blog
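
To make the structured-outputs item above concrete, the following sketch builds a chat request body that passes a JSON schema in the `format` field, then checks a reply against the schema's required keys. It uses only the standard library rather than the Ollama client libraries. The schema, the model name in the usage note, and both helper names are hypothetical choices for illustration.

```python
import json

# Hypothetical schema for illustration; any JSON schema could take its place.
COUNTRY_SCHEMA = {
    "type": "object",
    "properties": {
        "name": {"type": "string"},
        "capital": {"type": "string"},
    },
    "required": ["name", "capital"],
}


def build_structured_chat_body(model: str, prompt: str, schema: dict) -> dict:
    """Build a chat request body that passes the schema as `format`."""
    return {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "format": schema,   # constrains the reply to this JSON schema
        "stream": False,    # ask for a single JSON response
    }


def parse_structured_reply(content: str, schema: dict) -> dict:
    """Parse the reply text as JSON and check the schema's required keys.

    This is a light sanity check, not full JSON Schema validation.
    """
    data = json.loads(content)
    missing = [key for key in schema.get("required", []) if key not in data]
    if missing:
        raise ValueError(f"reply is missing required keys: {missing}")
    return data
```

For example, `build_structured_chat_body("llama3.1", "Tell me about Canada.", COUNTRY_SCHEMA)` yields a body that could be sent as JSON to a local server's chat endpoint; the model name is only a placeholder for whatever model is installed.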

Ollama Python Library 0.4 Released with Improvements

The Ollama Python library has been updated to version 0.4, introducing function calling as tools, full typing support, and new examples.

Ollama Blog

Open-source AI code assistant for VS Code and JetBrains

Continue allows users to create a coding assistant within Visual Studio Code and JetBrains using open-source LLMs. This integration aims to enhance the coding experience by providing AI support directly in the development environment.

Ollama Blog

Google announces Firebase Genkit with Ollama support

Google introduced Firebase Genkit, an open-source framework that supports Ollama, aimed at helping developers create AI-powered applications.

Ollama Blog

Ollama Achieves Compatibility with OpenAI API

Ollama has announced initial compatibility with the OpenAI Chat Completions API, allowing users to utilize existing OpenAI tools with local models through Ollama.

Ollama Blog
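
As a hedged sketch of what the OpenAI compatibility item above means in practice, the code below prepares an OpenAI-style Chat Completions request aimed at a local Ollama server. It assumes the server listens on the default `http://localhost:11434` and serves the compatible API under `/v1`. The helper names are hypothetical, and the bearer token is a placeholder that a local server is assumed to ignore.

```python
import json
import urllib.request

# Assumed default local address; adjust if the server listens elsewhere.
OLLAMA_OPENAI_URL = "http://localhost:11434/v1/chat/completions"


def build_chat_request(model: str, user_message: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,  # assumed to be a model already pulled locally
        "messages": [{"role": "user", "content": user_message}],
    }
    return urllib.request.Request(
        OLLAMA_OPENAI_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            # Placeholder key: OpenAI clients require one, but a local
            # server is assumed not to check it.
            "Authorization": "Bearer ollama",
        },
        method="POST",
    )


def send(request: urllib.request.Request) -> str:
    """Send the request and return the assistant's reply text."""
    with urllib.request.urlopen(request) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]
```

Calling `send(build_chat_request("llama2", "Say hello"))` requires a running server with that model available. Existing OpenAI client libraries can usually be pointed at the same address by overriding their base URL.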

Run Code Llama 70B with an API

Code Llama 70B, an open-source code generation model, can be run in the cloud using a simple API call. The blog provides instructions for implementation.

Replicate Blog

Ollama Releases Python and JavaScript Libraries

Ollama has launched initial versions of its Python and JavaScript libraries, allowing easy integration with applications using these languages. The libraries support all features of the Ollama REST API and are compatible with various versions of Ollama.

Ollama Blog

New CLI Command for Replicate Apps

Replicate has introduced a new CLI command that simplifies the process of starting applications on their platform.

Replicate Blog

Using Retrieval Augmented Generation with ChromaDB

The article discusses creating an example app that utilizes retrieval augmented generation with bge-large-en for embeddings, ChromaDB for vector storage, and mistral-7b-instruct for language model generation.

Replicate Blog

LLM-Powered Web Apps with Client-Side Tech

The Ollama Blog discusses how to recreate a popular LangChain use case, Retrieval-Augmented Generation (RAG), using open source software, enabling users to interact with their documents.

Ollama Blog

Ollama Launches Official Docker Image

Ollama is now available as an official Docker image, allowing it to run with Docker Desktop on Mac and inside Docker containers with GPU acceleration on Linux.

Ollama Blog

Using LLMs in Obsidian Notes

The blog post discusses integrating a local LLM with Obsidian and other note-taking tools using Ollama. It provides a guide on how to enhance note-taking with AI capabilities.

Ollama Blog

Guide to Prompting Code Llama

The guide provides insights on structuring prompts for Code Llama, covering its features like instructions, code completion, and fill-in-the-middle (FIM).

Ollama Blog

Run Code Llama locally

Meta's Code Llama is now available for local use via Ollama.

Ollama Blog

Streaming Output for Language Models Introduced

Replicate's API now supports server-sent event streams for language models, enhancing app responsiveness. Developers can learn how to implement this feature.

Replicate Blog
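
For readers unfamiliar with server-sent events, the sketch below shows one way to parse such a stream into `(event, data)` pairs. It is a generic parser following the standard SSE line format, not Replicate's client code. The event names in the sample (`output`, `done`) are assumptions chosen for illustration.

```python
from typing import Iterable, Iterator, List, Tuple


def parse_sse(lines: Iterable[str]) -> Iterator[Tuple[str, str]]:
    """Yield (event, data) pairs from the lines of a server-sent event stream."""
    event = "message"  # default event type when none is given
    data: List[str] = []
    for raw in lines:
        line = raw.rstrip("\r\n")
        if line == "":
            # A blank line terminates the current event.
            if data:
                yield event, "\n".join(data)
            event, data = "message", []
        elif line.startswith(":"):
            continue  # comment line, often used as a keep-alive
        else:
            field, _, value = line.partition(":")
            if value.startswith(" "):
                value = value[1:]  # a single leading space is not part of the value
            if field == "event":
                event = value
            elif field == "data":
                data.append(value)


# Hypothetical sample stream; the event names are assumed for this sketch.
SAMPLE_LINES = [
    "event: output\n",
    "data: Hello\n",
    "\n",
    ": keep-alive\n",
    "event: output\n",
    "data:  world\n",
    "\n",
    "event: done\n",
    "data: {}\n",
    "\n",
]
```

Because the parser is a generator, an application can display each fragment as soon as its terminating blank line arrives, which is where the responsiveness gain comes from.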

Run SDXL with an API

The Replicate Blog provides a guide on how to run Stable Diffusion XL 1.0 using their API.

Replicate Blog

Guide to Running Llama 2 Locally

The article provides instructions on how to run Llama 2 on various platforms including Mac, Linux, Windows, and mobile devices.

Replicate Blog

AutoCog Generates Cog Configurations with GPT-4

AutoCog is a tool that uses GPT-4 to generate Cog configuration for machine learning projects, iterating on the predict.py and cog.yaml files until a prediction succeeds.

Replicate Blog

Machine learning needs better tools

The Replicate Blog discusses the gap between the demand for machine learning applications and the lack of expertise among potential users. It highlights the need for improved tools to facilitate easier access to machine learning technologies.

Replicate Blog

Run Stable Diffusion on M1 Mac GPU

A guide has been published on how to run Stable Diffusion locally on an M1 Mac's GPU, allowing users to modify and experiment with the model.

Replicate Blog

Run Stable Diffusion with an API

The Replicate Blog provides a guide on integrating Stable Diffusion into various applications using their API. This allows developers to leverage Stable Diffusion for creative projects and hacks.

Replicate Blog

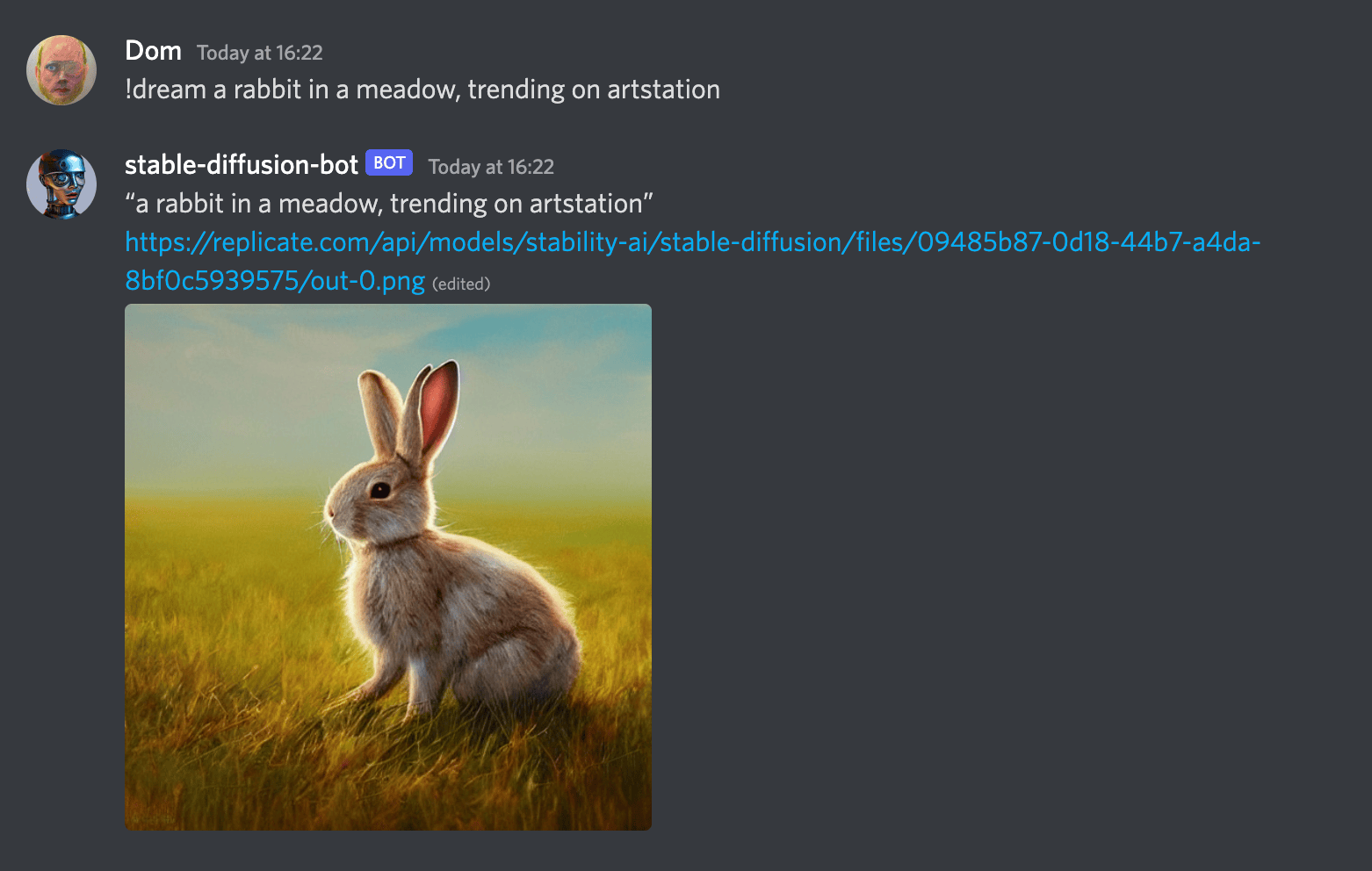

Create a Discord Bot Using Stable Diffusion

A tutorial has been released on how to build a chat bot for Discord that generates images in response to user prompts using Stable Diffusion and Replicate. The tutorial utilizes Fly.io for deployment.

Replicate Blog